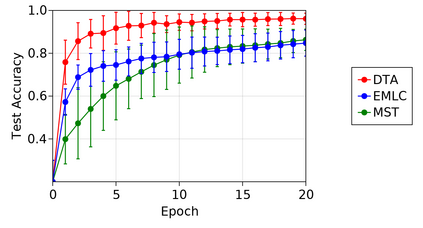

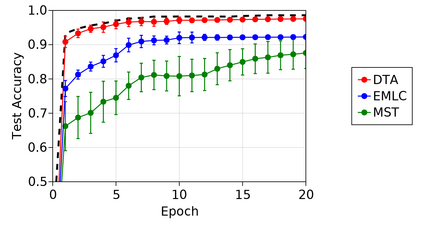

We introduce a new supervised learning algorithm to train spiking neural networks for classification. The algorithm overcomes a limitation of existing multi-spike learning methods: interference between interacting output spikes during a learning trial. This learning interference causes the performance of existing approaches to degrade as the number of output spikes increases. We address it by introducing a novel mechanism that balances the magnitudes of weight adjustments during learning, which in theory allows all spikes to converge simultaneously to their desired timings. Our results indicate that our method achieves significantly higher memory capacity and faster convergence than existing approaches for multi-spike classification. On the widely used Iris and MNIST datasets, our algorithm achieves predictive performance competitive with state-of-the-art approaches.
