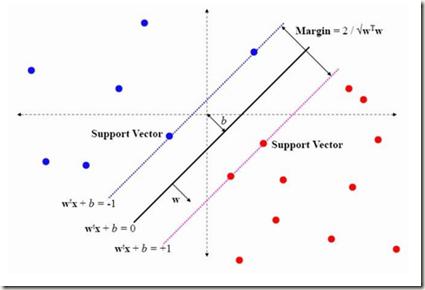

Recent research shows that the dynamics of an infinitely wide neural network (NN) trained by gradient descent can be characterized by the Neural Tangent Kernel (NTK) \citep{jacot2018neural}. Under the squared loss, the infinite-width NN trained by gradient descent with an infinitesimally small learning rate is equivalent to kernel regression with the NTK \citep{arora2019exact}. However, the equivalence is currently known only for ridge regression \citep{arora2019harnessing}, while the equivalence between NNs and other kernel machines (KMs), e.g. the support vector machine (SVM), remains unknown. In this work, we therefore establish the equivalence between NNs and SVMs, specifically between the infinitely wide NN trained with the soft margin loss and the standard soft margin SVM with the NTK trained by subgradient descent. Our main theoretical results include establishing the equivalence between NNs and a broad family of $\ell_2$ regularized KMs with finite-width bounds, which cannot be handled by prior work, and showing that every finite-width NN trained with such regularized loss functions is approximately a KM. Furthermore, we demonstrate that our theory enables three practical applications: (i) a \textit{non-vacuous} generalization bound for the NN via the corresponding KM; (ii) a \textit{non-trivial} robustness certificate for the infinite-width NN (whereas existing robustness verification methods would provide vacuous bounds); (iii) infinite-width NNs that are intrinsically more robust than those from previous kernel regression. Our code for the experiments is available at \url{https://github.com/leslie-CH/equiv-nn-svm}.
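To make the kernel-machine side of the claimed equivalence concrete, the following is a minimal sketch of an $\ell_2$ regularized soft margin (hinge loss) kernel machine trained by subgradient descent. It is an illustration under stated assumptions, not the authors' implementation: the RBF kernel is a stand-in for the NTK, the objective scaling (`lam`, the `1/n` average) is one common convention that may differ from the paper's, and the names `rbf_kernel`, `train_kernel_svm`, and `predict` are hypothetical.

```python
import math


def rbf_kernel(x, z, gamma=1.0):
    # Stand-in kernel (ASSUMPTION: this is NOT the NTK). Any positive
    # semi-definite kernel, including an NTK, could be plugged in here.
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))


def train_kernel_svm(X, y, lam=0.01, lr=0.1, steps=500, kernel=rbf_kernel):
    """Subgradient descent on the objective
        (lam / 2) * alpha^T K alpha + (1 / n) * sum_i max(0, 1 - y_i * (K alpha)_i),
    where K is the Gram matrix and alpha holds the dual-style coefficients."""
    n = len(X)
    K = [[kernel(X[i], X[j]) for j in range(n)] for i in range(n)]
    alpha = [0.0] * n
    for _ in range(steps):
        # Current decision values f_i = (K alpha)_i on the training points.
        f = [sum(K[i][j] * alpha[j] for j in range(n)) for i in range(n)]
        # 1.0 where the margin constraint y_i * f_i >= 1 is violated.
        viol = [1.0 if y[i] * f[i] < 1.0 else 0.0 for i in range(n)]
        # Subgradient w.r.t. alpha_k: regularizer term minus hinge term.
        grad = [
            lam * f[k] - sum(viol[i] * y[i] * K[i][k] for i in range(n)) / n
            for k in range(n)
        ]
        alpha = [alpha[k] - lr * grad[k] for k in range(n)]
    return alpha


def predict(X_train, alpha, x, kernel=rbf_kernel):
    # Sign of the kernel expansion sum_i alpha_i * k(x_i, x).
    score = sum(a * kernel(xi, x) for a, xi in zip(alpha, X_train))
    return 1 if score >= 0 else -1


# Toy usage: an XOR-style problem that is not linearly separable.
X = [(0.0, 0.0), (1.0, 1.0), (0.0, 1.0), (1.0, 0.0)]
y = [-1, -1, 1, 1]
alpha = train_kernel_svm(X, y)
train_predictions = [predict(X, alpha, x) for x in X]
```

Swapping `rbf_kernel` for an NTK evaluation would give the kernel machine that the abstract compares against the infinite-width NN; how that kernel is computed is outside the scope of this sketch.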
