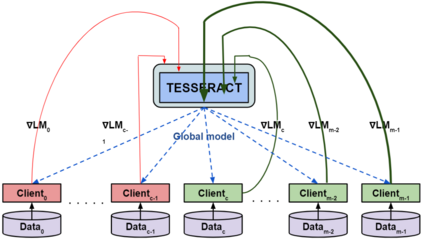

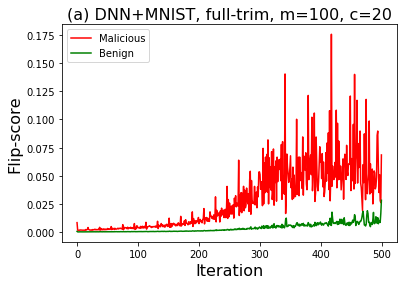

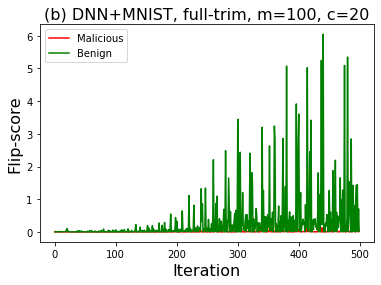

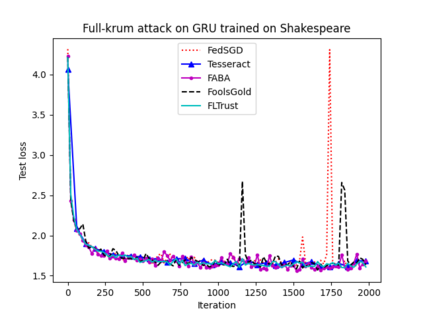

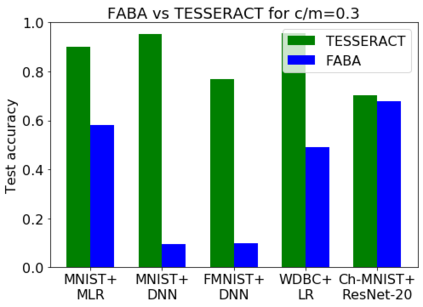

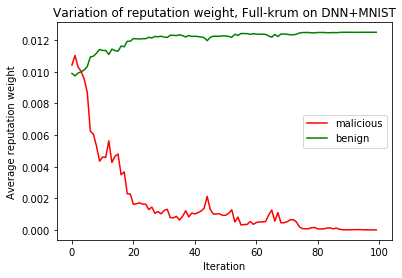

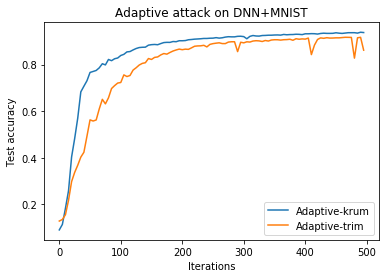

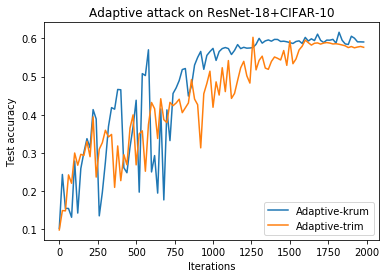

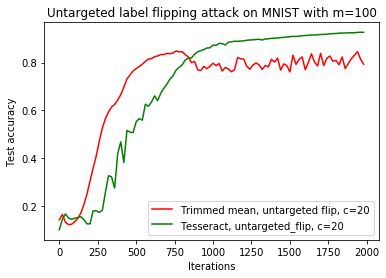

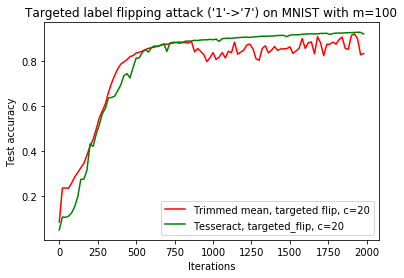

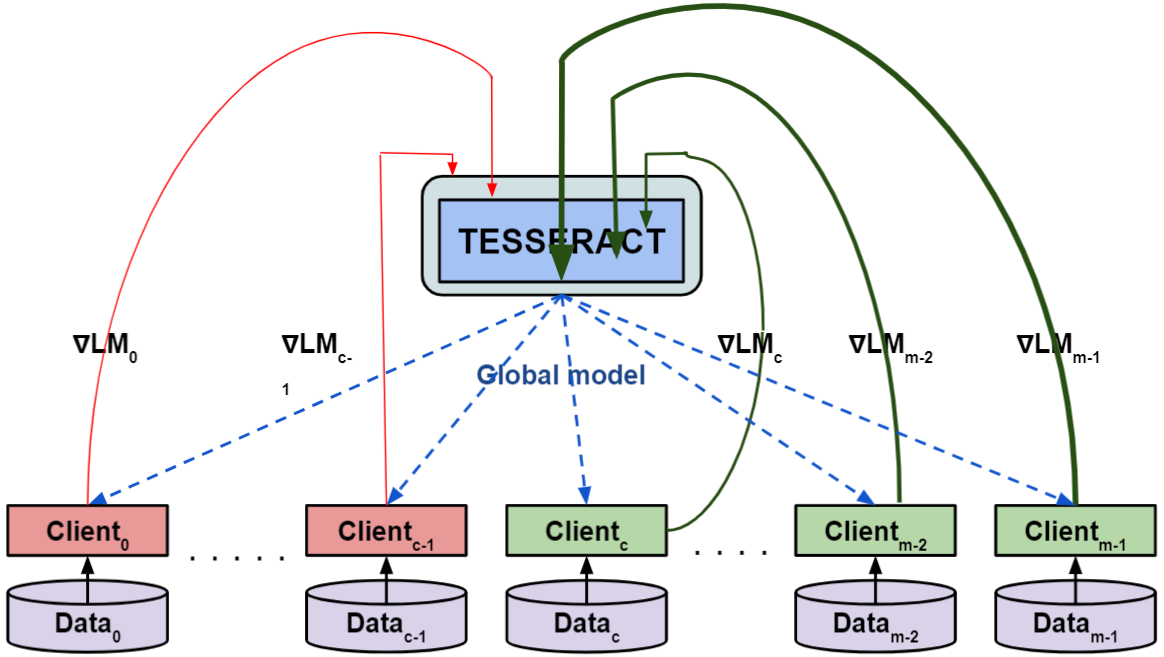

Federated learning (multi-party, distributed learning in a decentralized environment) is vulnerable to model poisoning attacks, even more so than centralized learning approaches, because malicious clients can collude and send carefully tailored model updates that make the global model inaccurate. This vulnerability motivated the development of Byzantine-resilient federated learning algorithms such as Krum, Bulyan, FABA, and FoolsGold. However, a recently developed untargeted model poisoning attack showed that all prior defenses can be bypassed. The attack rests on the intuition that simply flipping the sign of the gradient updates computed by the optimizer, for a set of malicious clients, diverts the model from the optimum and increases the test error rate. In this work, we develop TESSERACT, a defense against this directed deviation attack, which is a state-of-the-art model poisoning attack. TESSERACT is based on the simple intuition that, in a federated learning setting, certain patterns of gradient flips are indicative of an attack. This intuition is remarkably stable across different learning algorithms, models, and datasets. TESSERACT assigns reputation scores to the participating clients based on their behavior during the training phase and then aggregates the clients' contributions weighted by those scores. We show that TESSERACT provides robustness against even a white-box version of the attack.
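To make the reputation idea concrete, here is a minimal Python sketch of one way such a scheme could look. It is not the TESSERACT algorithm itself: the abstract does not specify how gradient flips are scored or how reputations are updated, so the function names (`flip_score`, `update_reputations`, `aggregate`), the "fraction of sign disagreements" score, the fixed reward and penalty, and the softmax weighting are all assumptions chosen purely for illustration.

```python
import math

def flip_score(prev_direction, update):
    """Hypothetical score: fraction of coordinates whose sign opposes
    the previous global update direction."""
    flips = sum(1 for p, u in zip(prev_direction, update) if p * u < 0)
    return flips / len(update)

def update_reputations(reputations, scores, n_suspect, reward=1.0, penalty=1.0):
    """Assumed rule: penalize the n_suspect clients with the highest
    flip scores in this round and reward everyone else."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    suspects = set(ranked[:n_suspect])
    return [r - penalty if i in suspects else r + reward
            for i, r in enumerate(reputations)]

def aggregate(updates, reputations):
    """Reputation-weighted average of client updates, using softmax
    weights (an assumption) so low-reputation clients fade out."""
    top = max(reputations)
    exps = [math.exp(r - top) for r in reputations]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(updates[0])
    return [sum(w * u[j] for w, u in zip(weights, updates)) for j in range(dim)]

# Toy round: two benign clients and one client that flips every sign.
prev_direction = [1.0, -1.0, 0.5, -0.5]
updates = [
    [0.9, -1.1, 0.4, -0.6],   # benign
    [1.1, -0.9, 0.6, -0.4],   # benign
    [-1.0, 1.0, -0.5, 0.5],   # sign-flipped (malicious)
]
reputations = [0.0, 0.0, 0.0]
for _ in range(5):  # repeat the same round to let reputations diverge
    scores = [flip_score(prev_direction, u) for u in updates]
    reputations = update_reputations(reputations, scores, n_suspect=1)
global_update = aggregate(updates, reputations)
print(reputations, global_update)
```

In this toy setup the sign-flipping client is penalized in every round, so its weight in the aggregate shrinks toward zero while the benign clients dominate. A real defense also has to handle attackers who flip only some coordinates or who adapt to the scoring rule, which this sketch does not attempt.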
