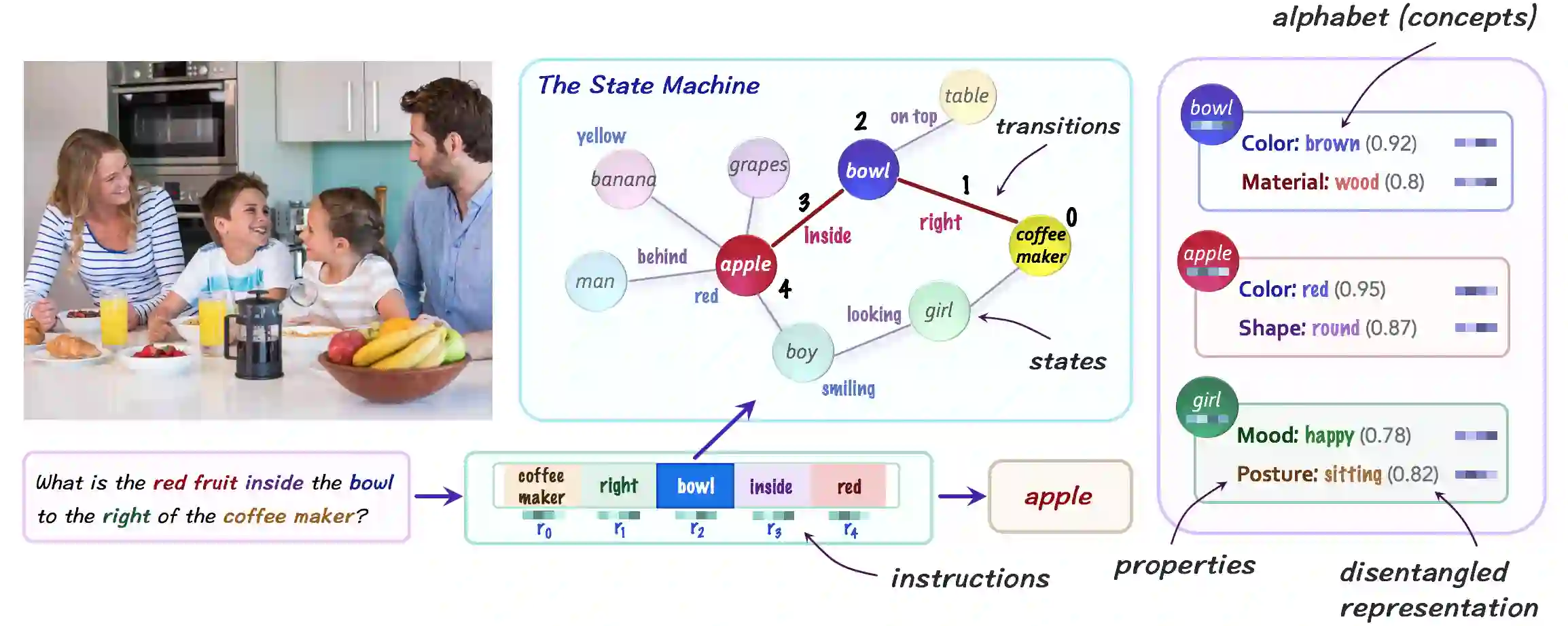

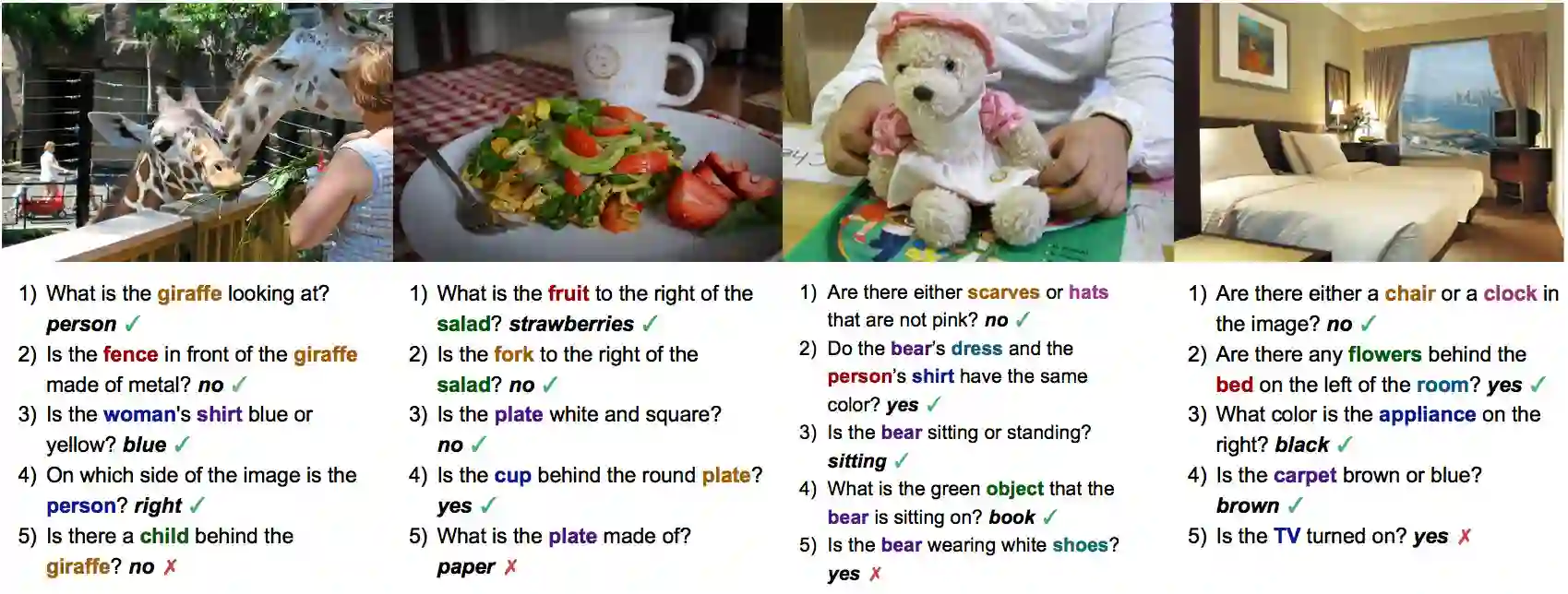

We introduce the Neural State Machine, seeking to bridge the gap between the neural and symbolic views of AI and integrate their complementary strengths for the task of visual reasoning. Given an image, we first predict a probabilistic graph that represents its underlying semantics and serves as a structured world model. Then, we perform sequential reasoning over the graph, iteratively traversing its nodes to answer a given question or draw a new inference. In contrast to most neural architectures that are designed to closely interact with the raw sensory data, our model operates instead in an abstract latent space, by transforming both the visual and linguistic modalities into semantic concept-based representations, thereby achieving enhanced transparency and modularity. We evaluate our model on VQA-CP and GQA, two recent VQA datasets that involve compositionality, multi-step inference and diverse reasoning skills, achieving state-of-the-art results in both cases. We provide further experiments that illustrate the model's strong generalization capacity across multiple dimensions, including novel compositions of concepts, changes in the answer distribution, and unseen linguistic structures, demonstrating the qualities and efficacy of our approach.
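To make the two-stage idea above more concrete, here is a minimal toy sketch of soft, step-by-step reasoning over a probabilistic graph. It is not the authors' implementation: the abstract gives no architectural details, so the graph layout, the `select`, `traverse` and `read` helpers, and the hand-written three-step program are all illustrative assumptions. In the actual model the graph is predicted from the image and the reasoning steps are derived from the question.

```python
from typing import Dict, List, Tuple

# Hypothetical toy structures: a node maps each property ("name", "color")
# to a probability distribution over concepts; an edge carries a
# distribution over relation concepts.
Node = Dict[str, Dict[str, float]]
Edge = Tuple[int, int, Dict[str, float]]  # (source, target, relation probs)


def normalize(weights: List[float]) -> List[float]:
    """Rescale non-negative weights to sum to 1 (uniform if all are zero)."""
    total = sum(weights)
    if total == 0:
        return [1.0 / len(weights)] * len(weights)
    return [w / total for w in weights]


def select(nodes: List[Node], prop: str, concept: str) -> List[float]:
    """Attend to nodes in proportion to how likely they express `concept`."""
    return normalize([n[prop].get(concept, 0.0) for n in nodes])


def traverse(state: List[float], edges: List[Edge], relation: str) -> List[float]:
    """Move attention mass along edges, weighted by the relation probability."""
    nxt = [0.0] * len(state)
    for src, dst, rel in edges:
        nxt[dst] += state[src] * rel.get(relation, 0.0)
    return normalize(nxt)


def read(state: List[float], nodes: List[Node], prop: str) -> Dict[str, float]:
    """Expected distribution over the values of `prop` under the attention state."""
    out: Dict[str, float] = {}
    for p, n in zip(state, nodes):
        for value, q in n[prop].items():
            out[value] = out.get(value, 0.0) + p * q
    return out


# Assumed graph for an image of a red cup on a brown table, next to a vase.
nodes: List[Node] = [
    {"name": {"cup": 0.9, "bowl": 0.1}, "color": {"red": 0.8, "blue": 0.2}},
    {"name": {"table": 0.95, "chair": 0.05}, "color": {"brown": 0.7, "red": 0.3}},
    {"name": {"vase": 0.9, "cup": 0.1}, "color": {"blue": 1.0}},
]
edges: List[Edge] = [
    (0, 1, {"on": 0.9, "near": 0.1}),
    (2, 1, {"near": 1.0}),
]

# Hand-written program for "What color is the thing the cup is on?"
state = select(nodes, "name", "cup")   # attend to cup-like nodes
state = traverse(state, edges, "on")   # follow "on" edges
colors = read(state, nodes, "color")   # read out the color distribution
answer = max(colors, key=colors.get)
print(answer)
```

Because every step operates on named concepts and a distribution over graph nodes rather than on raw pixels, the intermediate `state` can be inspected after each step, which is the kind of transparency the abstract refers to.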
