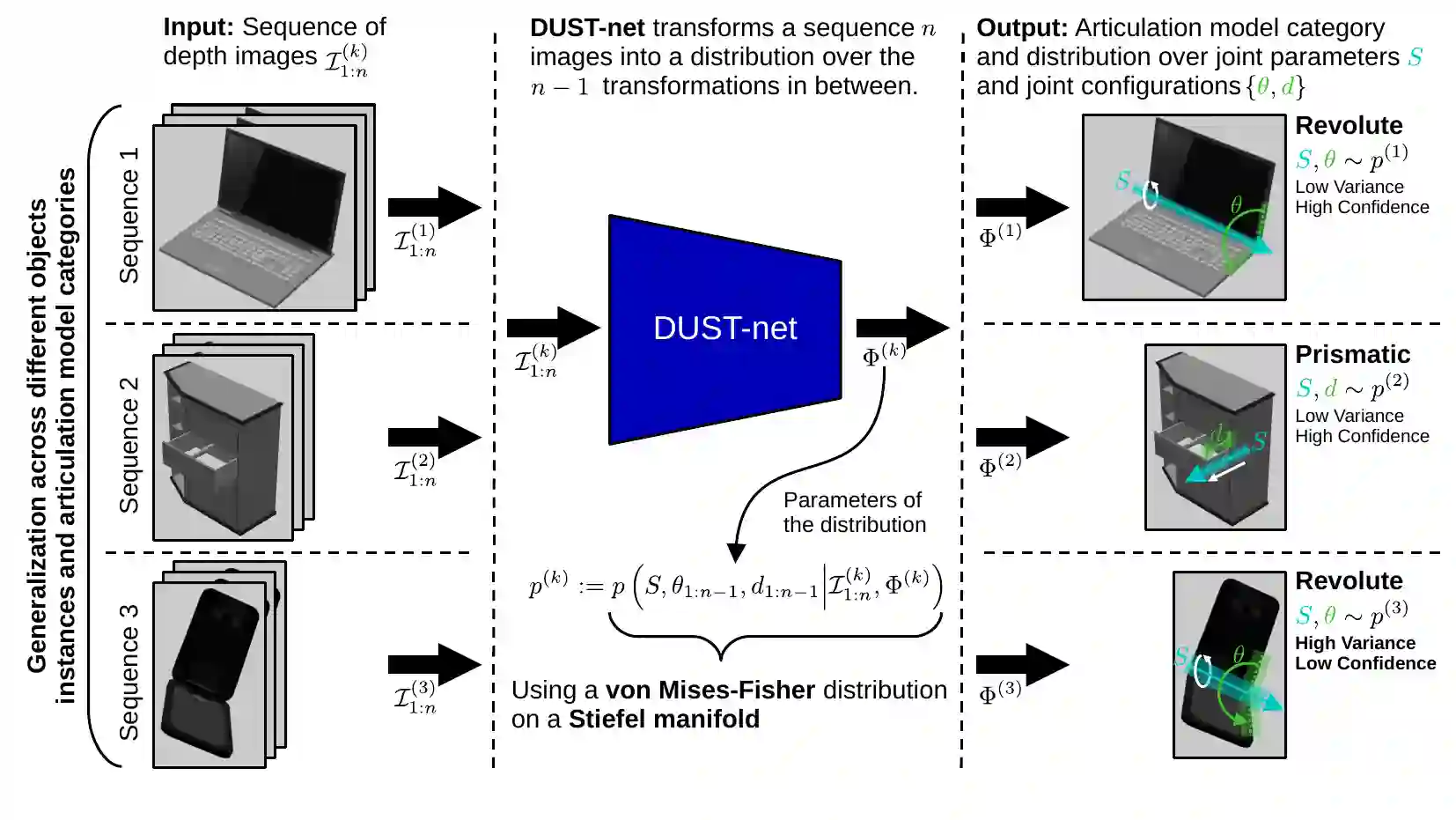

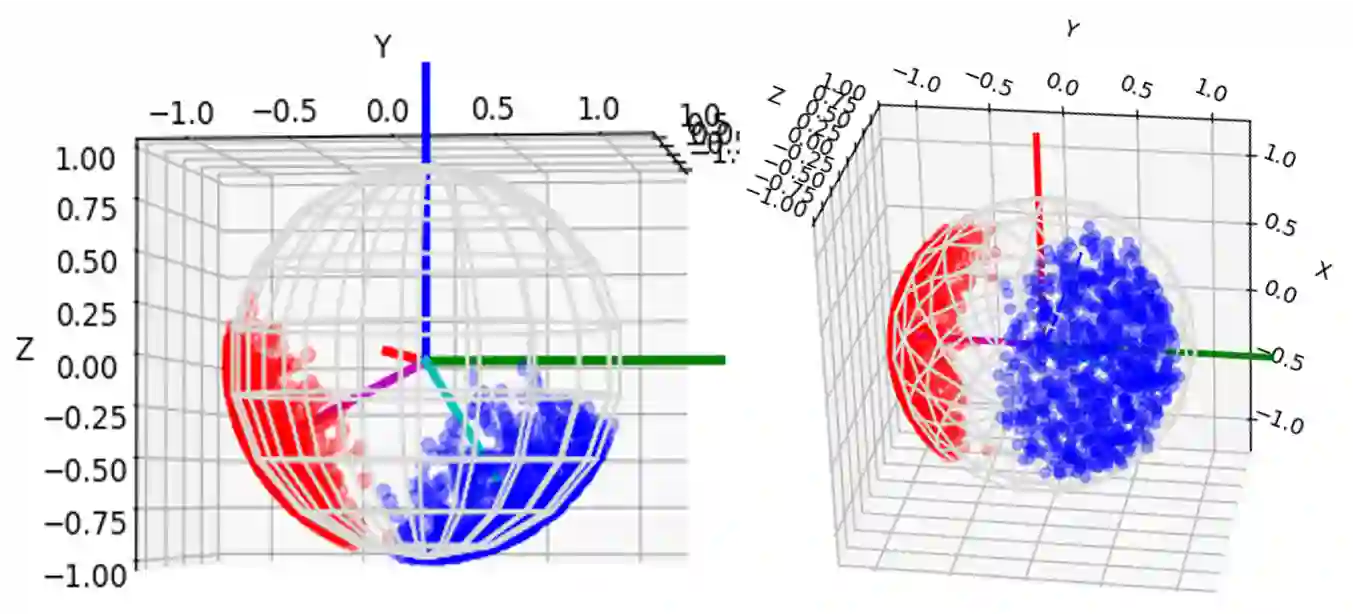

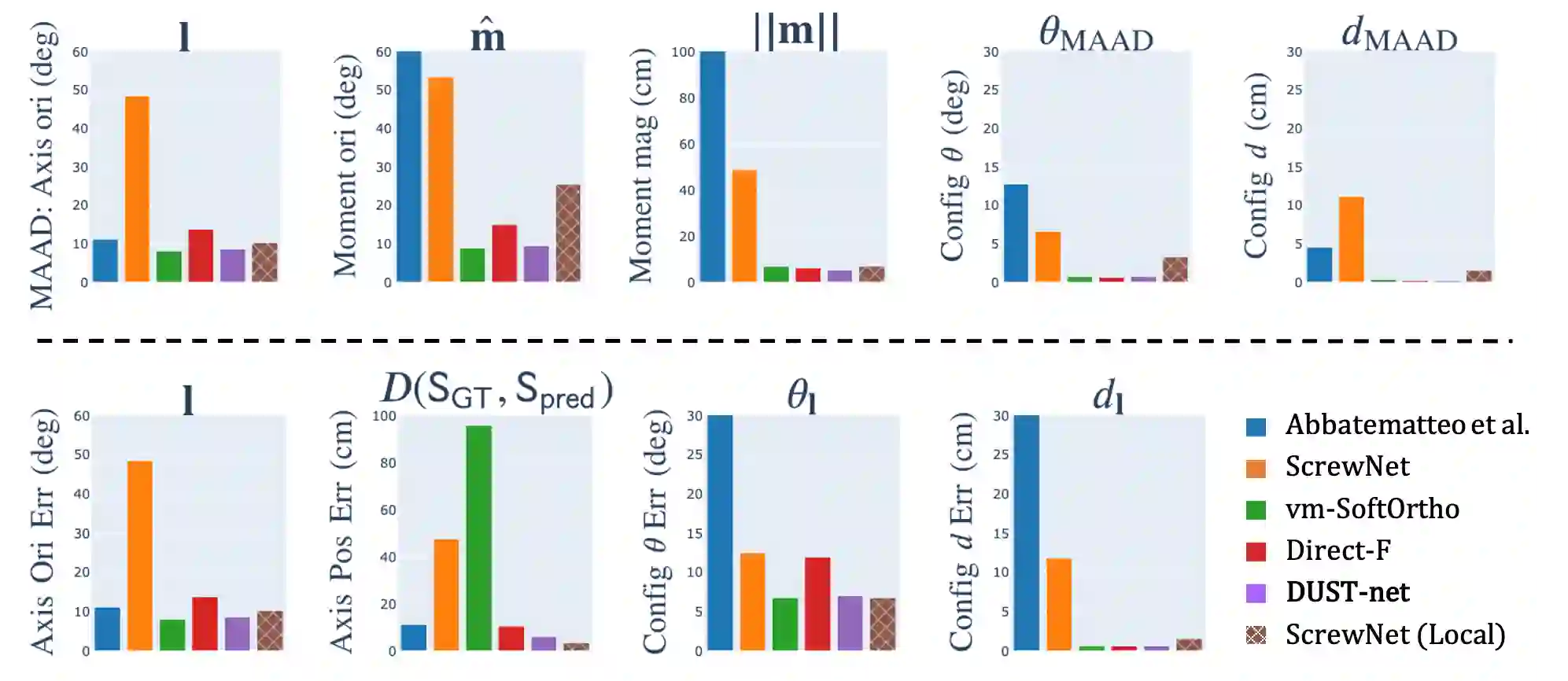

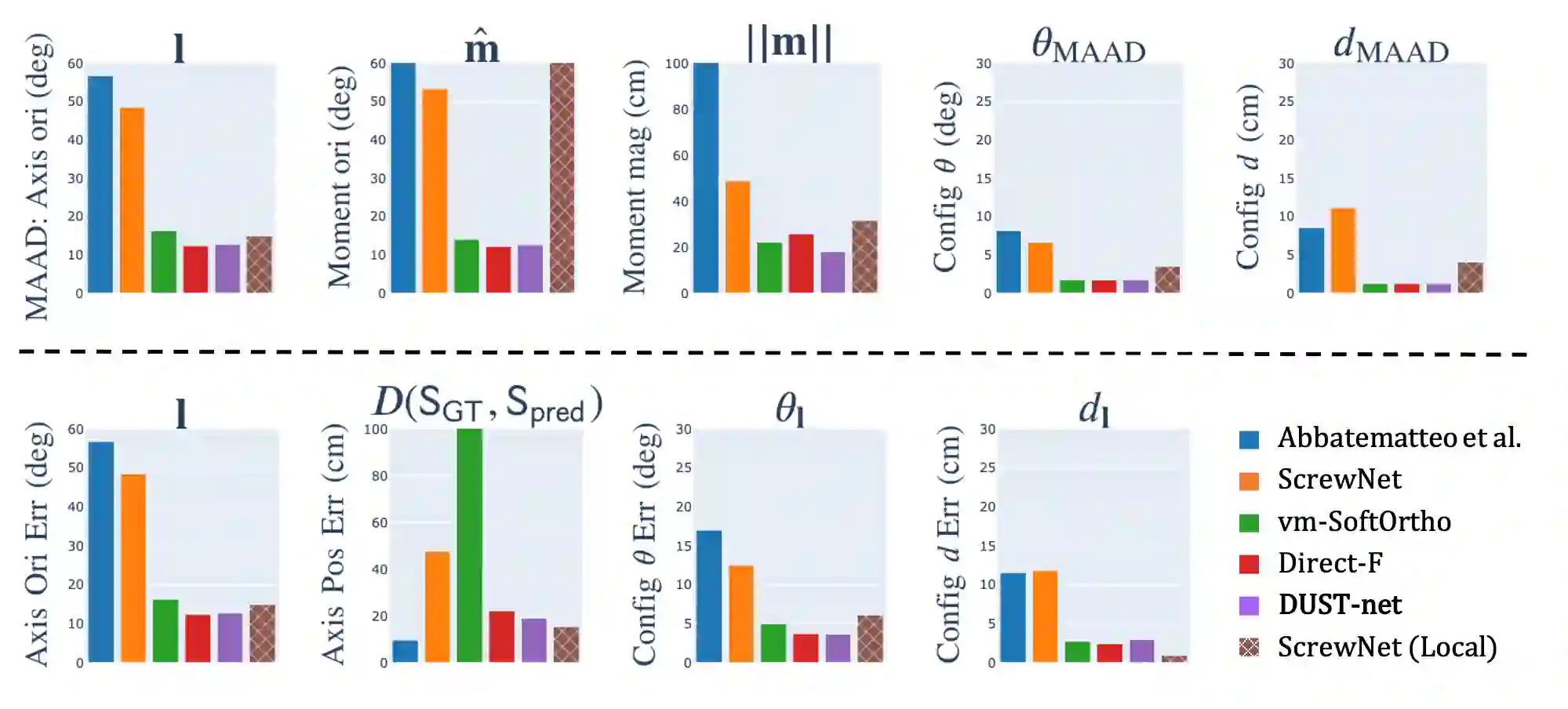

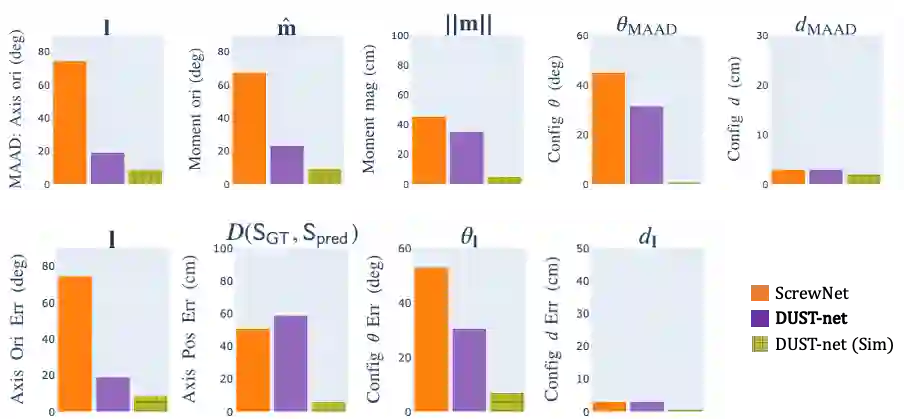

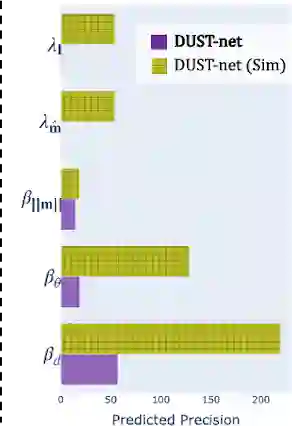

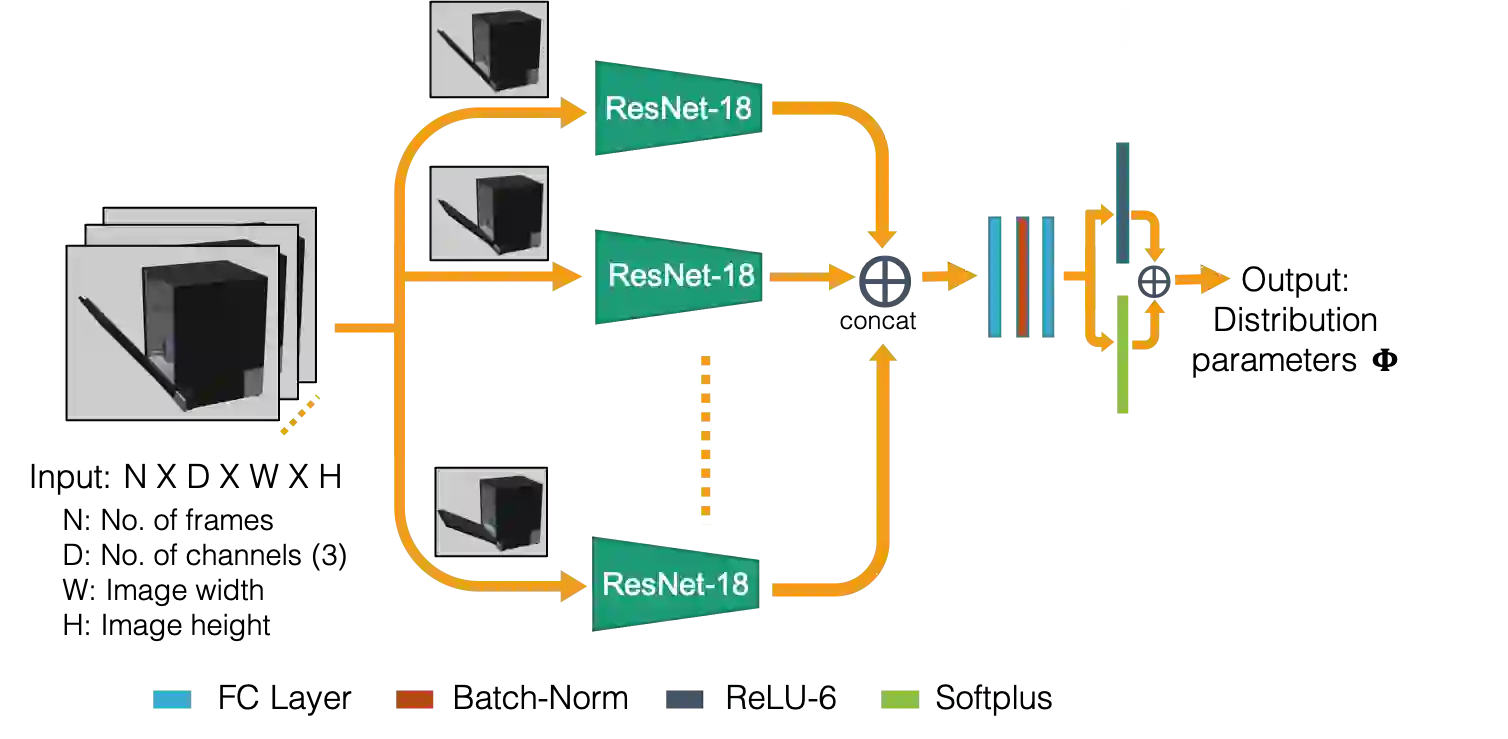

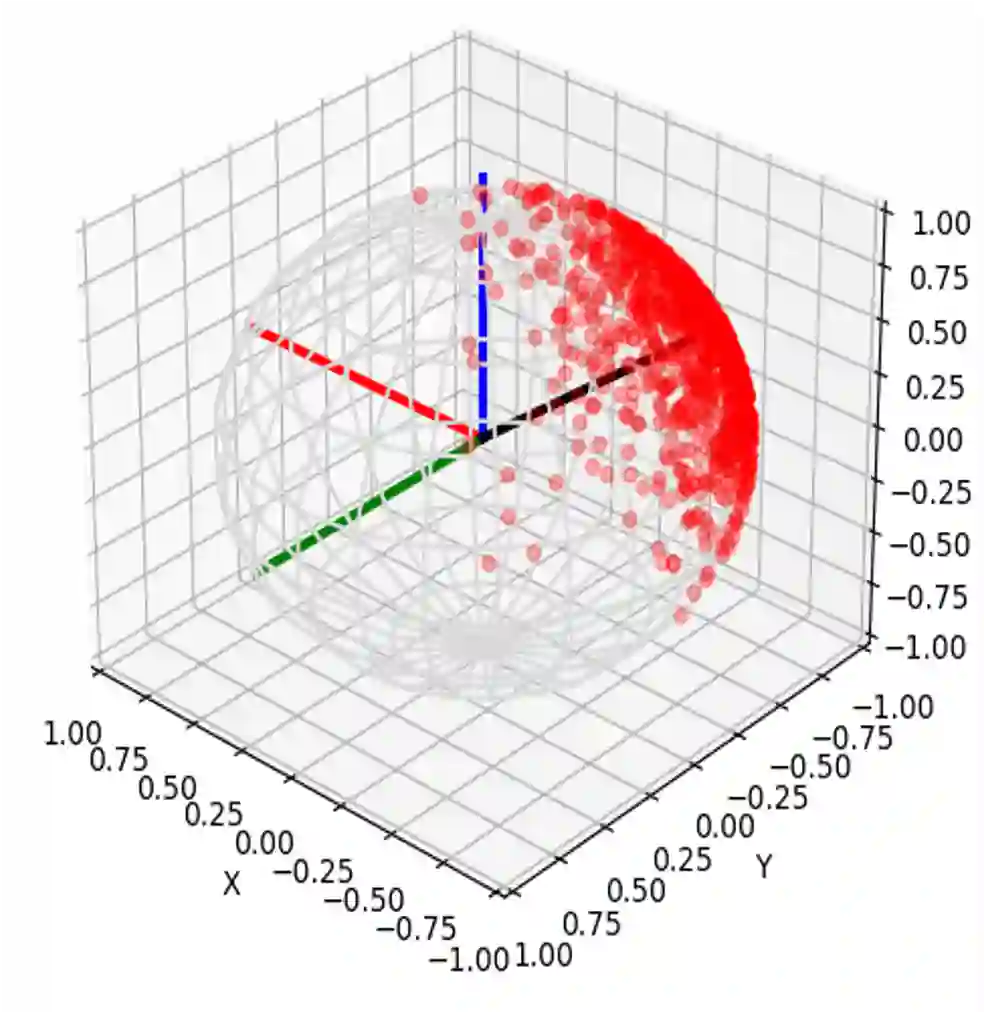

We propose a method that efficiently learns distributions over articulation model parameters directly from depth images, without needing to know the articulation model category a priori. By contrast, existing methods that learn articulation models from raw observations typically predict only point estimates of the model parameters, which are insufficient to guarantee the safe manipulation of articulated objects. Our core contributions include a novel representation for distributions over rigid body transformations and articulation model parameters based on screw theory, von Mises-Fisher distributions, and Stiefel manifolds. Combining these concepts yields an efficient, mathematically sound representation that implicitly satisfies the constraints that rigid body transformations and articulations must adhere to. Leveraging this representation, we introduce a novel deep-learning-based approach, DUST-net, that performs category-independent articulation model estimation while also providing model uncertainties. We evaluate our approach on several benchmark datasets and real-world objects and compare its performance with two current state-of-the-art methods. Our results demonstrate that DUST-net can successfully learn distributions over articulation models for novel objects across articulation model categories. These distributions yield point estimates that are more accurate than those of state-of-the-art methods, and they effectively capture the uncertainty over predicted model parameters due to noisy inputs.
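To make two of the ingredients named above concrete, the following is a minimal sketch of (a) how a screw axis with a rotation angle and a translation along the axis determines a rigid body transformation, and (b) the log-density of a von Mises-Fisher distribution over unit vectors in 3D. It is not DUST-net's implementation: the function names `screw_to_transform` and `vmf_log_pdf`, the parameterization by a unit axis and a point on the axis, and the restriction to the 2-sphere are assumptions made for illustration, and the Stiefel-manifold distribution described in the abstract is not reproduced here.

```python
import numpy as np


def screw_to_transform(axis, point, theta, d):
    """Homogeneous 4x4 transform for a screw motion (illustrative helper).

    axis  : direction of the screw axis (normalized internally)
    point : any point lying on the screw axis
    theta : rotation angle about the axis, in radians
    d     : translation along the axis
    """
    l = np.asarray(axis, dtype=float)
    l = l / np.linalg.norm(l)
    p = np.asarray(point, dtype=float)

    # Skew-symmetric cross-product matrix of the axis direction.
    K = np.array([[0.0, -l[2], l[1]],
                  [l[2], 0.0, -l[0]],
                  [-l[1], l[0], 0.0]])
    # Rodrigues' rotation formula.
    R = np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)

    T = np.eye(4)
    T[:3, :3] = R
    # Rotate about the axis through `point`, then slide along the axis.
    T[:3, 3] = p - R @ p + d * l
    return T


def vmf_log_pdf(x, mu, kappa):
    """Log-density of a von Mises-Fisher distribution on the unit 2-sphere.

    Density: kappa / (4 * pi * sinh(kappa)) * exp(kappa * mu^T x), kappa > 0.
    """
    x = np.asarray(x, dtype=float)
    mu = np.asarray(mu, dtype=float)
    x = x / np.linalg.norm(x)
    mu = mu / np.linalg.norm(mu)
    # Stable form of log(kappa / (4 * pi * sinh(kappa))).
    log_norm = (np.log(kappa) - np.log(2.0 * np.pi) - kappa
                - np.log1p(-np.exp(-2.0 * kappa)))
    return log_norm + kappa * float(mu @ x)


# A revolute joint is a pure rotation about the axis (d = 0);
# a prismatic joint is a pure translation along it (theta = 0).
T_revolute = screw_to_transform([0, 0, 1], [1, 0, 0], np.pi / 2, 0.0)
T_prismatic = screw_to_transform([0, 0, 1], [1, 0, 0], 0.0, 0.5)
```

In this sketch a single parameterization covers revolute, prismatic, and helical motions, which is one reason a screw-based representation can avoid committing to an articulation category in advance. The concentration parameter `kappa` plays the role of an inverse uncertainty: larger values concentrate the density around the mean direction `mu`.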
