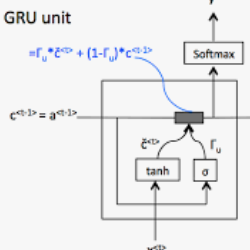

Recurrent neural networks are key tools for processing sequential data. Existing architectures, however, support only a limited class of operations that the network can apply to its memory state. In this paper, we address this limitation and introduce a recurrent neural module called Deep Memory Update (DMU). The module is an alternative to the well-established LSTM and GRU. Unlike them, it uses a universal function approximator to process its lagged memory state. In addition, the module normalizes the lagged memory to avoid exploding or vanishing gradients in backpropagation through time. The subnetwork that transforms the memory state of DMU can be arbitrary. Experimental results presented here confirm that these properties allow the network to compete with, and often outperform, state-of-the-art architectures such as LSTM and GRU.
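To make the idea concrete, the following is a minimal, illustrative sketch of a recurrent cell in the spirit described above: the lagged memory state is normalized and then passed, together with the current input, through a small feedforward subnetwork. The abstract does not give the DMU update equations, so everything here is an assumption made for illustration: the zero-mean, unit-variance normalization, the two-layer tanh subnetwork, and the hypothetical names `SketchCell`, `normalize`, `dense`, and `init_layer`. It should not be read as the authors' formulation.

```python
import math
import random


def normalize(h, eps=1e-5):
    """Rescale a state vector to zero mean and unit variance.

    Assumption: the abstract only says the lagged memory is normalized;
    this particular normalization is chosen for illustration.
    """
    mean = sum(h) / len(h)
    var = sum((v - mean) ** 2 for v in h) / len(h)
    return [(v - mean) / math.sqrt(var + eps) for v in h]


def dense(x, weights, bias, activation):
    """One fully connected layer: activation(W x + b)."""
    return [
        activation(sum(w * v for w, v in zip(row, x)) + b)
        for row, b in zip(weights, bias)
    ]


def init_layer(n_in, n_out, rng):
    """Small random weights and zero biases for a dense layer."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


class SketchCell:
    """Hypothetical recurrent cell: new_state = MLP([input, normalize(old_state)])."""

    def __init__(self, input_size, state_size, hidden_size, seed=0):
        rng = random.Random(seed)
        self.state_size = state_size
        # Assumed subnetwork: two dense layers; any feedforward net could be used.
        self.layer1 = init_layer(input_size + state_size, hidden_size, rng)
        self.layer2 = init_layer(hidden_size, state_size, rng)

    def step(self, x, h_prev):
        """Advance one time step from the lagged state h_prev and input x."""
        z = list(x) + normalize(h_prev)
        hidden = dense(z, *self.layer1, math.tanh)
        return dense(hidden, *self.layer2, math.tanh)

    def run(self, sequence):
        """Process a whole sequence, starting from an all-zero state."""
        h = [0.0] * self.state_size
        for x in sequence:
            h = self.step(x, h)
        return h


if __name__ == "__main__":
    cell = SketchCell(input_size=2, state_size=4, hidden_size=8)
    final_state = cell.run([[0.1, 0.2], [0.3, -0.1], [0.0, 0.5]])
    print(final_state)
```

In this sketch the feedforward subnetwork is the only component that transforms the memory, so swapping in a deeper or differently shaped network changes what the cell can compute without touching the recurrence itself. Normalizing the lagged state before it enters the subnetwork keeps its scale fixed from step to step, which is one plausible way to limit gradient growth or decay over long sequences.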
