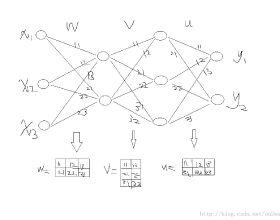

PCANet and its variants provided good accuracy results for classification tasks. However, despite the importance of network depth in achieving good classification accuracy, these networks were trained with a maximum of nine layers. In this paper, we introduce a residual compensation convolutional network, which is the first PCANet-like network trained with hundreds of layers while improving classification accuracy. The design of the proposed network consists of several convolutional layers, each followed by post-processing steps and a classifier. To correct the classification errors and significantly increase the network's depth, we train each layer with new labels derived from the residual information of all its preceding layers. This learning mechanism is accomplished by traversing the network's layers in a single forward pass without backpropagation or gradient computations. Our experiments on four distinct classification benchmarks (MNIST, CIFAR-10, CIFAR-100, and TinyImageNet) show that our deep network outperforms all existing PCANet-like networks and is competitive with several traditional gradient-based models.
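The layer-wise mechanism described above (each layer fitted to what the preceding layers left unexplained, in one forward pass with no backpropagation) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation. It assumes dense PCA projections in place of convolutional PCA filters, a ReLU as the only post-processing step, and a closed-form ridge regressor as each layer's classifier. The names `pca_filters`, `ridge_fit`, `train_residual_stack`, and `predict` are hypothetical, as are the parameters `k` and `lam`.

```python
import numpy as np


def pca_filters(H, k):
    """Return the top-k principal directions of H as a (d, k) matrix."""
    Hc = H - H.mean(axis=0)
    _, _, Vt = np.linalg.svd(Hc, full_matrices=False)
    return Vt[:k].T


def ridge_fit(F, T, lam):
    """Closed-form ridge regression: argmin_B ||T - F B||^2 + lam ||B||^2."""
    A = F.T @ F + lam * np.eye(F.shape[1])
    return np.linalg.solve(A, F.T @ T)


def layer_features(H, mu, W):
    """PCA projection, then ReLU (assumed post-processing), plus a bias column."""
    F = np.maximum((H - mu) @ W, 0.0)
    return F, np.hstack([F, np.ones((F.shape[0], 1))])


def train_residual_stack(X, y, n_classes, n_layers=5, k=8, lam=1e-2):
    """Fit layers one by one; each layer's targets are the current residual."""
    residual = np.eye(n_classes)[y]           # start from the one-hot labels
    norms = [np.linalg.norm(residual)]
    layers, H = [], X
    for _ in range(n_layers):
        mu, W = H.mean(axis=0), pca_filters(H, k)
        F, F1 = layer_features(H, mu, W)
        B = ridge_fit(F1, residual, lam)      # no gradients involved
        residual = residual - F1 @ B          # what is still unexplained
        norms.append(np.linalg.norm(residual))
        layers.append((mu, W, B))
        H = np.hstack([X, F])                 # assumed wiring: input + new features
    return layers, norms


def predict(layers, X):
    """Sum every layer's contribution and take the arg-max class."""
    scores, H = 0.0, X
    for mu, W, B in layers:
        F, F1 = layer_features(H, mu, W)
        scores = scores + F1 @ B
        H = np.hstack([X, F])
    return np.argmax(scores, axis=1)
```

In this sketch the training residual norm cannot increase from one layer to the next, because the ridge solution is never worse than the all-zero solution. That only illustrates why stacking more layers need not hurt the training fit; it says nothing about the accuracy figures reported for the actual network.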
