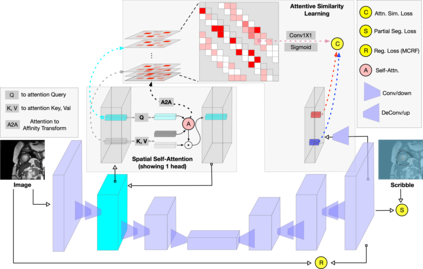

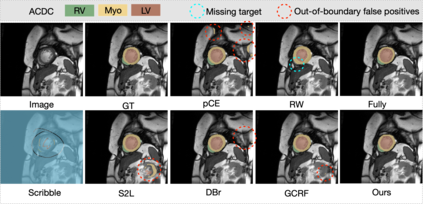

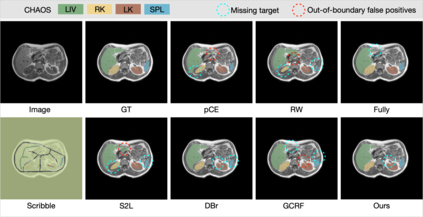

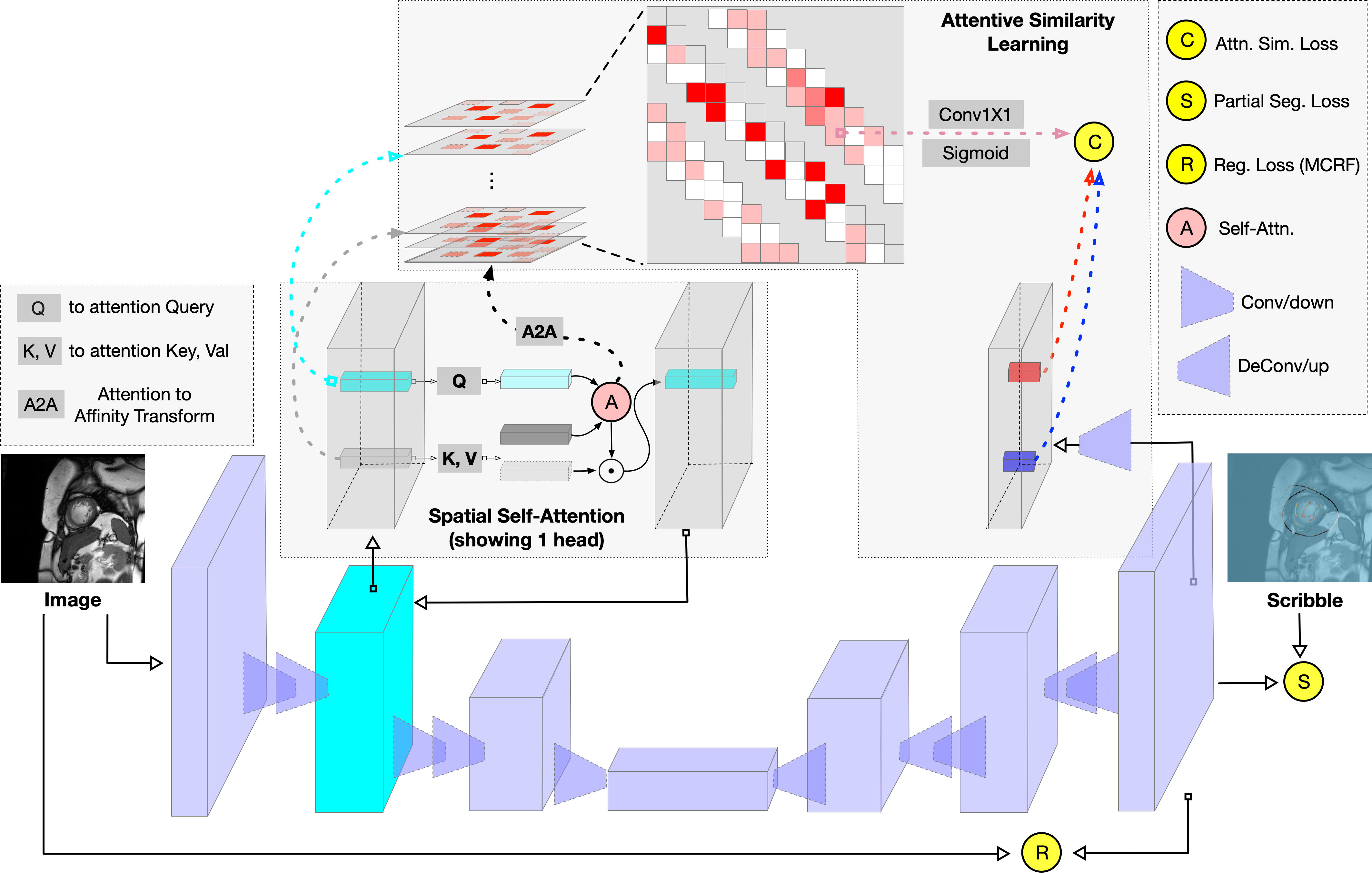

The success of deep networks in medical image segmentation relies heavily on large amounts of labeled training data. However, acquiring dense annotations is time-consuming. Weakly-supervised methods employ less expensive forms of supervision, among which scribbles have recently gained popularity thanks to their flexibility. However, because scribbles carry little shape and boundary information, it is extremely challenging to train a deep network on them that generalizes to unlabeled pixels. In this paper, we present a straightforward yet effective scribble-supervised learning framework. Inspired by recent advances in transformer-based segmentation, we create a pluggable spatial self-attention module that can be attached on top of any internal feature layer of an arbitrary fully convolutional network (FCN) backbone. The module infuses global interaction while keeping the efficiency of convolutions. Building on this module, we construct a similarity metric based on normalized and symmetrized attention. This attentive similarity leads to a novel regularization loss that imposes consistency between segmentation prediction and visual affinity. The attentive similarity loss optimizes the alignment of FCN encoders, attention mapping and model prediction. Ultimately, the proposed FCN+Attention architecture can be trained end-to-end, guided by a combination of three learning objectives: a partial segmentation loss, a customized masked conditional random field and the proposed attentive similarity loss. Extensive experiments on public datasets (ACDC and CHAOS) show that our framework not only outperforms existing state-of-the-art methods but also comes close to the performance of the fully-supervised benchmark. Code will be available upon publication.
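To make the idea of a similarity built from normalized and symmetrized attention more concrete, here is a minimal, dependency-free sketch. The abstract does not give the exact formulation, so everything below is an assumption for illustration: scaled dot-product scores, softmax as the normalization, averaging with the transpose as the symmetrization, and a pairwise squared-distance penalty as the consistency term. The function names (`attention`, `symmetrize`, `attentive_similarity_loss`) and the toy data are hypothetical and are not taken from the paper.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(features):
    """Row-normalized self-attention over N positions (assumed form).

    features: list of N feature vectors of dimension d.
    Returns an N x N matrix whose rows sum to 1.
    """
    d = len(features[0])
    scale = 1.0 / math.sqrt(d)
    scores = [
        [scale * sum(a * b for a, b in zip(fi, fj)) for fj in features]
        for fi in features
    ]
    return [softmax(row) for row in scores]


def symmetrize(attn):
    """Average the attention matrix with its transpose (assumed symmetrization)."""
    n = len(attn)
    return [[0.5 * (attn[i][j] + attn[j][i]) for j in range(n)] for i in range(n)]


def attentive_similarity_loss(similarity, probs):
    """Penalize prediction disagreement between positions that attend to each other.

    Assumed form: mean over all pairs (i, j) of S_ij * ||p_i - p_j||^2.
    """
    n = len(probs)
    total = 0.0
    for i in range(n):
        for j in range(n):
            dist = sum((a - b) ** 2 for a, b in zip(probs[i], probs[j]))
            total += similarity[i][j] * dist
    return total / (n * n)


# Toy data (hypothetical): four positions forming two visually similar pairs.
features = [[2.0, 0.0], [1.8, 0.1], [0.0, 2.0], [0.1, 1.9]]
sim = symmetrize(attention(features))

# Predictions that agree with the visual grouping vs. predictions that do not.
consistent = [[0.9, 0.1], [0.9, 0.1], [0.1, 0.9], [0.1, 0.9]]
inconsistent = [[0.9, 0.1], [0.1, 0.9], [0.9, 0.1], [0.1, 0.9]]

loss_consistent = attentive_similarity_loss(sim, consistent)
loss_inconsistent = attentive_similarity_loss(sim, inconsistent)
print(loss_consistent, loss_inconsistent)
```

In this sketch, predictions that follow the visual grouping incur a lower penalty than predictions that split similar positions across classes, which is the kind of consistency between segmentation prediction and visual affinity the abstract describes. A practical version would operate on feature maps inside a tensor library and be combined with the partial segmentation loss and the masked CRF term.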
