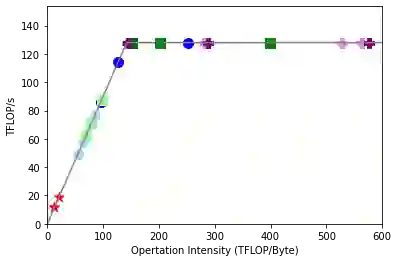

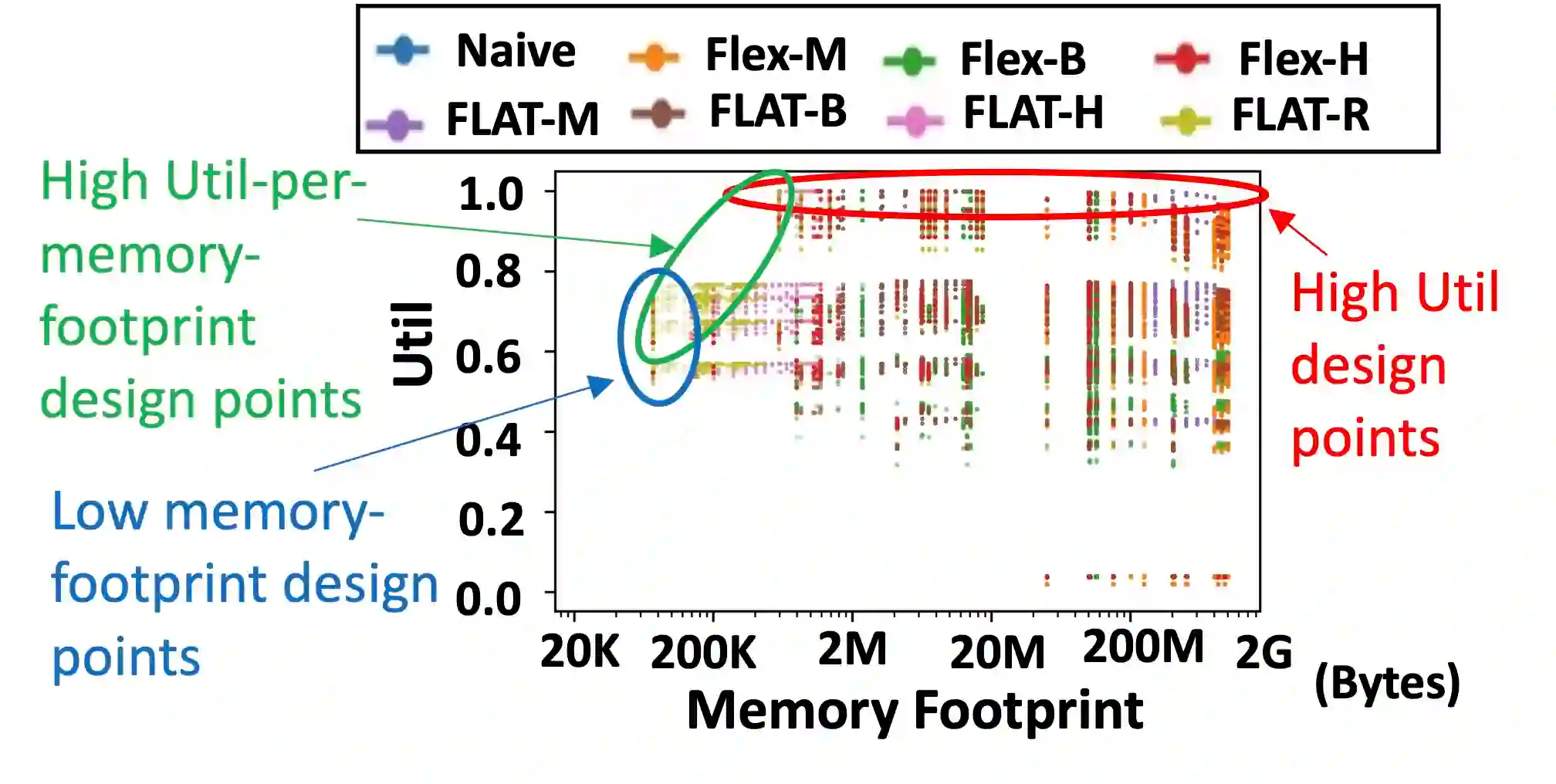

Attention mechanisms, primarily designed to capture pairwise correlations between words, have become the backbone of machine learning, expanding beyond natural language processing into other domains. This growth in adoption comes at the cost of prohibitively large memory requirements and computational complexity, especially for large numbers of input elements. The limitation stems from inherently limited data reuse opportunities and quadratic growth in memory footprint, which lead to severe memory-boundedness and limit how far the number of input elements can scale. This work addresses these challenges by devising a tailored dataflow optimization, called FLAT, for attention mechanisms without altering their functionality. This dataflow processes costly attention operations through a unique fusion mechanism, reducing the growth of the memory footprint from quadratic to linear. To realize the full potential of this bespoke mechanism, we propose a tiling approach to enhance data reuse across attention operations. Our method both mitigates the off-chip bandwidth bottleneck and reduces the on-chip memory requirement. Across a diverse range of models, FLAT delivers 1.94x (1.76x) speedup and 49% (42%) energy savings compared to state-of-the-art edge (cloud) accelerators with no customized dataflow optimization. Our evaluations demonstrate that state-of-the-art DNN dataflows applied to attention operations reach their efficiency limit for inputs above 512 elements. In contrast, FLAT unblocks transformer models for inputs with up to 64K elements on edge and cloud accelerators.
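To make the footprint argument concrete, the following is a minimal sketch of fusing the logit, softmax, and weighted-sum steps over tiles of query rows. It is an illustration in plain Python under stated assumptions, not FLAT's accelerator dataflow: the function names, the `tile_rows` parameter, and the `peak_live` counter are all hypothetical, and row-wise tiling is assumed because softmax needs a complete row of logits.

```python
import math


def softmax(row):
    """Numerically stable softmax over one full row of logits."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_sum(weights, values):
    """Combine the rows of `values` using one row of attention weights."""
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]


def logit_rows(q_rows, k, scale):
    """Scaled dot products between each query row and every key row."""
    return [[scale * sum(a * b for a, b in zip(qi, kj)) for kj in k] for qi in q_rows]


def baseline_attention(q, k, v):
    """Unfused reference: the full N x N logit matrix is materialized."""
    scale = 1.0 / math.sqrt(len(q[0]))
    logits = logit_rows(q, k, scale)  # N * N intermediate values live at once
    return [weighted_sum(softmax(row), v) for row in logits]


def fused_tiled_attention(q, k, v, tile_rows=4):
    """Fused sketch: all three steps run per tile of query rows.

    Only tile_rows * N logits are live at a time, so for a fixed tile size
    the intermediate footprint grows linearly in N. Returns the output and
    the largest number of live logits observed (hypothetical bookkeeping).
    """
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    peak_live = 0
    for start in range(0, len(q), tile_rows):
        logits_tile = logit_rows(q[start:start + tile_rows], k, scale)
        peak_live = max(peak_live, sum(len(row) for row in logits_tile))
        # The tile is consumed immediately and never written back in full.
        out.extend(weighted_sum(softmax(row), v) for row in logits_tile)
    return out, peak_live
```

Both functions compute the same result; the difference is that the fused version holds at most `tile_rows * N` logits at once instead of `N * N`. On real hardware the benefit depends on keeping each tile in on-chip memory, which this sketch does not model.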
