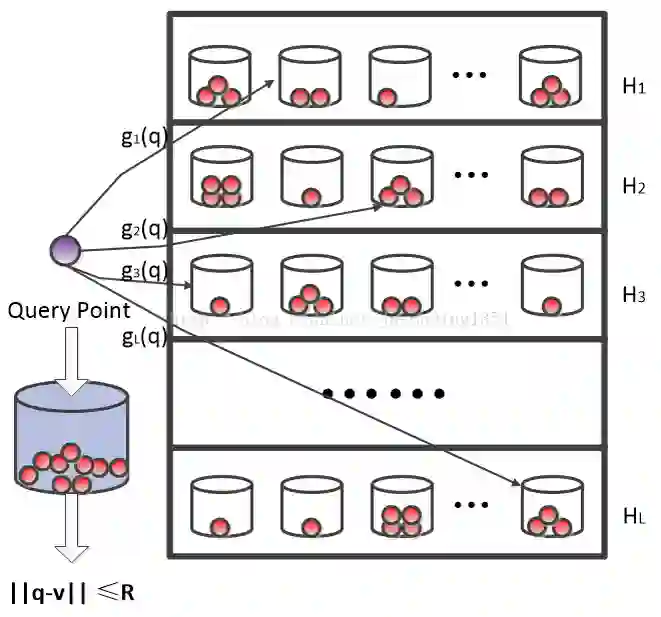

Learning embedding representations with neural networks is at the core of modern similarity-based search. Much effort has gone into algorithms that learn binary Hamming code representations for search efficiency, but these still require a linear scan of the entire dataset for each query and sacrifice search accuracy through binarization. To address this, we consider the problem of directly learning a quantizable embedding representation and a sparse binary hash code end-to-end. The learned code can be used to construct an efficient hash table that significantly reduces the number of data points searched per query while achieving state-of-the-art search accuracy, outperforming previous deep metric learning methods. We also show that the optimal sparse binary hash code for a mini-batch can be computed exactly in polynomial time by solving a minimum cost flow problem. Our results on the CIFAR-100 and ImageNet datasets show state-of-the-art search accuracy in precision@k and NMI metrics while providing up to 98× and 478× search speedup, respectively, over exhaustive linear search.
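To illustrate why a sparse binary hash code avoids a linear scan, here is a minimal sketch of a hash table built from such codes. It assumes, purely for illustration, that an item's code marks the `k` largest dimensions of its embedding and that each dimension acts as one bucket; the abstract does not specify how codes are derived at query time. The names `sparse_code`, `build_table`, and `query` are hypothetical and are not taken from the paper.

```python
import numpy as np


def sparse_code(embedding, k):
    """Return the indices of the k largest activations (a k-hot code).

    Assumption of this sketch: the code is read off the embedding itself.
    """
    return np.argsort(-embedding, kind="stable")[:k]


def build_table(embeddings, k):
    """Map each bucket index to the ids of the items whose code activates it."""
    table = {}
    for item_id, emb in enumerate(embeddings):
        for bucket in sparse_code(emb, k):
            table.setdefault(int(bucket), []).append(item_id)
    return table


def query(table, embeddings, q, k, top=5):
    """Rank only the items that share at least one bucket with the query."""
    candidates = sorted(
        {i for b in sparse_code(q, k) for i in table.get(int(b), [])}
    )
    if not candidates:
        return []
    cand = np.array(candidates)
    # Exact distances are computed on the candidate set only, not the dataset.
    dists = np.linalg.norm(embeddings[cand] - q, axis=1)
    order = np.argsort(dists, kind="stable")[:top]
    return [int(c) for c in cand[order]]
```

With `d` buckets and `k` active bits per item, a query inspects only the buckets its own code selects, so the number of distance computations depends on bucket occupancy rather than on the dataset size. That is the kind of reduction the reported speedups refer to, although this sketch makes no claim to reproduce them.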
