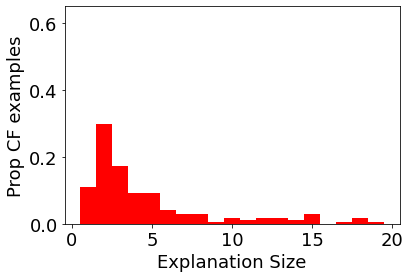

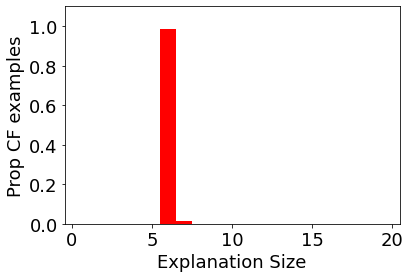

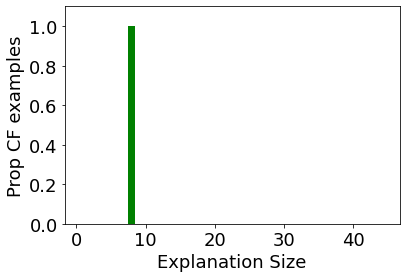

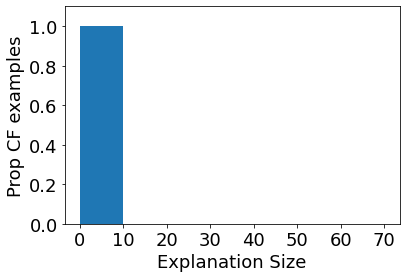

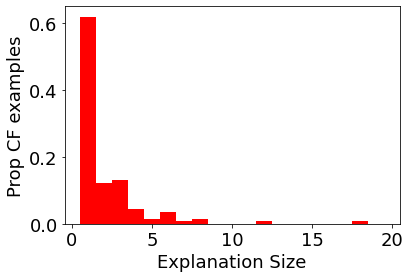

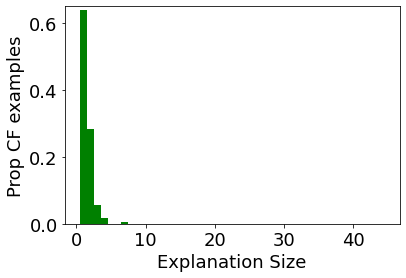

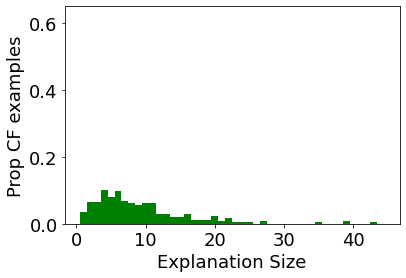

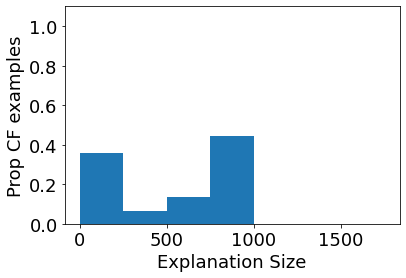

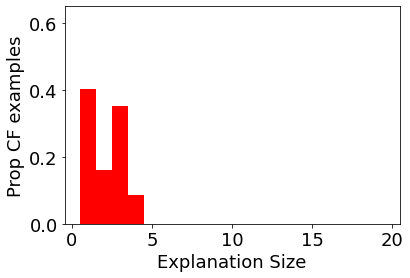

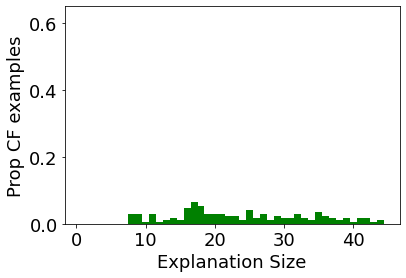

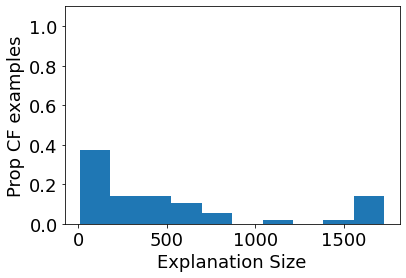

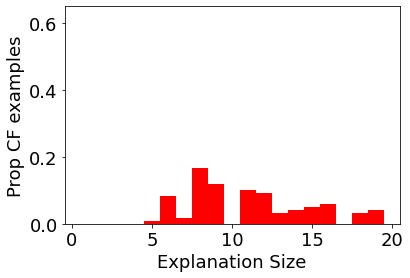

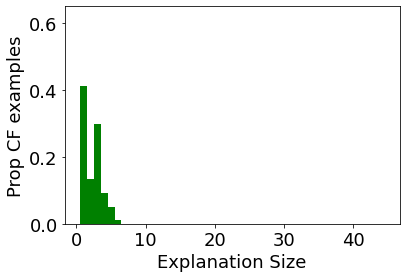

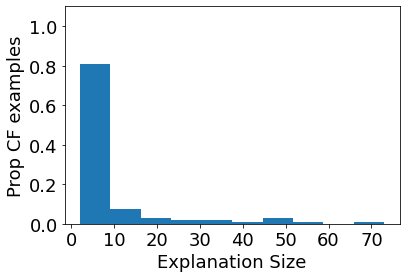

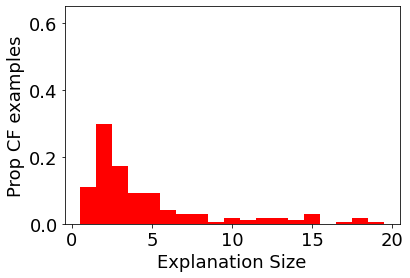

Given the increasing promise of Graph Neural Networks (GNNs) in real-world applications, several methods have been developed for explaining their predictions. So far, these methods have primarily focused on generating subgraphs that are especially relevant for a particular prediction. However, such methods do not provide a clear opportunity for recourse: given a prediction, we want to understand how it can be changed to achieve a more desirable outcome. In this work, we propose a method for generating counterfactual (CF) explanations for GNNs: the minimal perturbation to the input (graph) data such that the prediction changes. Using only edge deletions, we find that our method, CF-GNNExplainer, can generate CF explanations for the majority of instances across three widely used datasets for GNN explanations, while removing fewer than 3 edges on average, with at least 94% accuracy. This indicates that CF-GNNExplainer primarily removes edges that are crucial for the original predictions, resulting in minimal CF explanations.
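To make the notion of a counterfactual explanation by edge deletion concrete, the following is a minimal sketch in plain Python. It is not the CF-GNNExplainer procedure itself, whose details are not given in this abstract: the `predict` function is a hypothetical stand-in for a trained GNN (one round of sum aggregation followed by a threshold), and the search is a brute-force enumeration over edge subsets that is only feasible for very small graphs. The function names, the threshold, and the toy graph are all assumptions made for illustration.

```python
from itertools import combinations


def predict(edges, features, node, threshold=1.5):
    """Hypothetical stand-in for a GNN: sum the node's own feature and its
    neighbours' features, then threshold the result into class 0 or 1."""
    total = features[node]
    for u, v in edges:
        if u == node:
            total += features[v]
        elif v == node:
            total += features[u]
    return int(total > threshold)


def minimal_edge_deletion_cf(edges, features, node, max_deletions=3):
    """Brute-force search (illustrative only): try deletion sets of
    increasing size and return the first one that flips the prediction.
    Returns None if no set of at most max_deletions edges flips it."""
    original = predict(edges, features, node)
    for k in range(1, max_deletions + 1):
        for removed in combinations(edges, k):
            kept = [e for e in edges if e not in removed]
            if predict(kept, features, node) != original:
                return list(removed)
    return None


# Toy undirected graph: node 0 is connected to nodes 1, 2 and 3.
edges = [(0, 1), (0, 2), (0, 3), (1, 2)]
features = {0: 0.5, 1: 1.0, 2: 0.2, 3: 0.1}

cf = minimal_edge_deletion_cf(edges, features, node=0)
print(cf)  # [(0, 1)]
```

In this toy graph, node 0 is classified as 1 because its aggregated feature sum is about 1.8. Removing the single edge to node 1 drops the sum to about 0.8 and flips the prediction, so that one edge is the counterfactual explanation. Because deletion sets are tried in order of increasing size, the first set found is also a smallest one, which mirrors the "minimal perturbation" criterion described above.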
