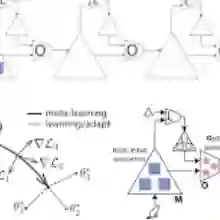

Meta-learning usually refers to a learning algorithm that learns from other learning algorithms. Uncertainty in the predictions of neural networks shows that the world is only partially predictable and that a trained neural network cannot generalize to its ever-changing environment. The question, therefore, is how a predictive model can represent multiple predictions simultaneously. We aim to provide a fundamental understanding of learning to learn in the context of Decentralized Neural Networks (Decentralized NNs), which we believe is one of the most important questions and prerequisites for building an autonomous intelligent machine. To this end, we present several pieces of evidence for tackling the problems above with meta-learning in Decentralized NNs. In particular, we present three different approaches to building such a decentralized learning system: (1) learning from many replica neural networks, (2) building a hierarchy of neural networks for different functions, and (3) leveraging experts in different modalities to learn cross-modal representations.
