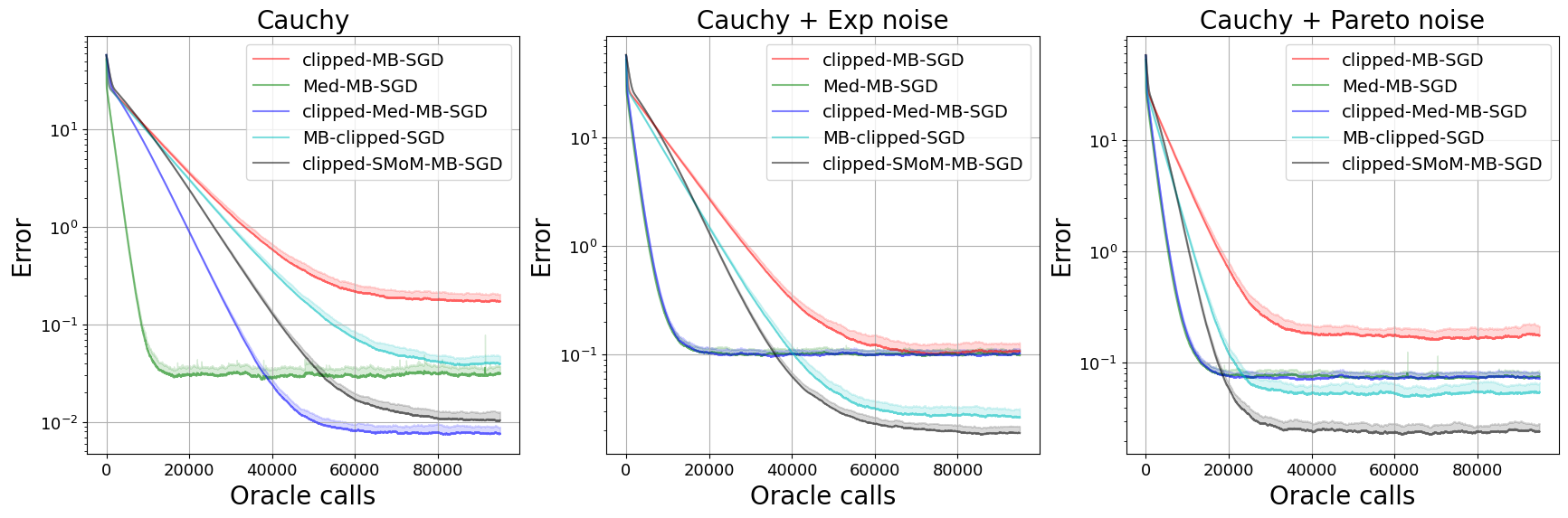

We consider stochastic optimization problems with heavy-tailed noise that has a structured density. For such problems, we show that it is possible to obtain convergence rates faster than $\mathcal{O}(K^{-2(\alpha - 1)/\alpha})$ when the stochastic gradients have finite moments of order $\alpha \in (1, 2]$. In particular, our analysis allows the noise norm to have an unbounded expectation. To achieve these results, we stabilize the stochastic gradients using smoothed medians of means. We prove that the resulting estimates have negligible bias and controllable variance. This allows us to incorporate them into clipped-SGD and clipped-SSTM and to derive new high-probability complexity bounds in the considered setup.
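To make the idea of stabilizing gradients before clipping more concrete, here is a minimal sketch of one clipped-SGD step driven by a median-of-means gradient estimate. It is an illustration under stated assumptions, not the construction analyzed in the paper: the function names, the contiguous block split, the coordinate-wise median, and the smoothing step (adding small Gaussian noise to each block mean, controlled by the hypothetical `smoothing_scale` parameter) are all choices made for this sketch.

```python
import math
import random
import statistics


def block_means(samples, n_blocks):
    """Split a list of equal-length vectors into contiguous blocks and average each block."""
    n = len(samples)
    if not 1 <= n_blocks <= n:
        raise ValueError("n_blocks must be between 1 and len(samples)")
    dim = len(samples[0])
    means = []
    for b in range(n_blocks):
        lo, hi = b * n // n_blocks, (b + 1) * n // n_blocks
        block = samples[lo:hi]
        means.append([sum(v[j] for v in block) / len(block) for j in range(dim)])
    return means


def smoothed_median_of_means(samples, n_blocks, smoothing_scale=0.0, rng=None):
    """Coordinate-wise median of block means.

    Assumption of this sketch: "smoothing" is modeled as Gaussian noise of
    standard deviation `smoothing_scale` added to every block mean before the
    median is taken. With smoothing_scale=0 this is a plain median of means.
    """
    rng = rng or random.Random(0)
    means = block_means(samples, n_blocks)
    dim = len(means[0])
    noisy = [[m[j] + smoothing_scale * rng.gauss(0.0, 1.0) for j in range(dim)]
             for m in means]
    return [statistics.median(row[j] for row in noisy) for j in range(dim)]


def clip(vec, threshold):
    """Rescale vec so that its Euclidean norm does not exceed threshold."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm <= threshold:
        return list(vec)
    return [x * threshold / norm for x in vec]


def clipped_sgd_step(x, grad_samples, step_size, threshold, n_blocks,
                     smoothing_scale=0.0, rng=None):
    """One SGD step using a clipped (smoothed) median-of-means gradient estimate."""
    estimate = smoothed_median_of_means(grad_samples, n_blocks, smoothing_scale, rng)
    g = clip(estimate, threshold)
    return [xi - step_size * gi for xi, gi in zip(x, g)]


# Usage: a single extreme sample corrupts only one block mean,
# so the median across blocks is unaffected by it.
grads = [[1.0], [1.0], [1.0], [1.0], [1.0], [1000.0]]
robust_estimate = smoothed_median_of_means(grads, n_blocks=3)
new_x = clipped_sgd_step([0.0], grads, step_size=0.1, threshold=0.5, n_blocks=3)
```

The design point the sketch tries to convey is that the median across block means discards the influence of a few extreme samples, which a plain average would not, and the clipping step then bounds the size of whatever estimate remains.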
