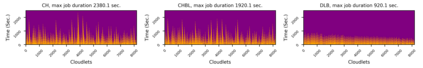

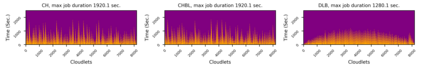

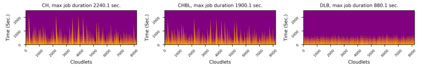

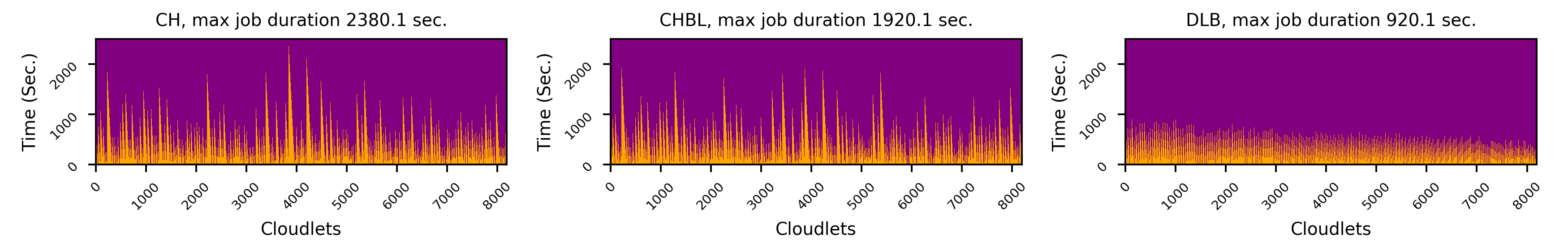

In this paper, we introduce DLB, a Deep Learning based load Balancing mechanism, to effectively address the data skew problem. The key idea of DLB is to replace the hash functions in load balancing mechanisms with deep learning models, which are trained to map different distributions of workloads and data to servers in a uniform manner. We implemented DLB and deployed it in a practical cloud environment using CloudSim. Experimental results on both synthetic and real-world data sets show that, compared with traditional hash-function-based load balancing methods, DLB achieves more balanced mappings, especially when the workload is highly skewed.
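
To make the key idea concrete, the following minimal sketch contrasts a fixed hash mapping with a mapping fitted to the observed key distribution. It is an illustration under stated assumptions, not the DLB implementation: the fitted mapping here is a simple empirical CDF standing in for the deep learning model, and the names `modulo_hash`, `CdfMapper`, and `imbalance`, as well as the synthetic keys, are hypothetical.

```python
import bisect
from collections import Counter


def modulo_hash(key: int, num_servers: int) -> int:
    """Traditional baseline: a fixed hash that ignores the key distribution."""
    return key % num_servers


class CdfMapper:
    """Stand-in for a learned model (assumption: NOT the DLB network).

    It fits an empirical CDF on sample keys and maps each key to the
    server that owns the key's quantile range.
    """

    def __init__(self, sample_keys, num_servers: int):
        self.sorted_keys = sorted(sample_keys)
        self.num_servers = num_servers

    def __call__(self, key: int) -> int:
        # Rank of the key among the training keys approximates its CDF value.
        rank = bisect.bisect_left(self.sorted_keys, key)
        server = (rank * self.num_servers) // len(self.sorted_keys)
        # Clamp keys larger than anything seen during fitting.
        return min(server, self.num_servers - 1)


def imbalance(assignments, num_servers: int) -> float:
    """Ratio of the heaviest server load to the mean load (1.0 is perfect)."""
    counts = Counter(assignments)
    loads = [counts.get(s, 0) for s in range(num_servers)]
    return max(loads) / (sum(loads) / num_servers)


# Synthetic skewed workload (assumption): every key is a multiple of 8,
# so the modulo hash sends all of them to the same server.
num_servers = 8
keys = [8 * i for i in range(1000)]

hash_imbalance = imbalance([modulo_hash(k, num_servers) for k in keys], num_servers)

mapper = CdfMapper(keys, num_servers)
learned_imbalance = imbalance([mapper(k) for k in keys], num_servers)

print(f"modulo hash imbalance:  {hash_imbalance}")
print(f"fitted mapper imbalance: {learned_imbalance}")
```

On this deliberately adversarial workload the fixed hash places every key on one server, while the fitted mapping spreads keys evenly because it adapts to the distribution it was fitted on. A trained neural model would play the role of `CdfMapper` in the approach described above.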
