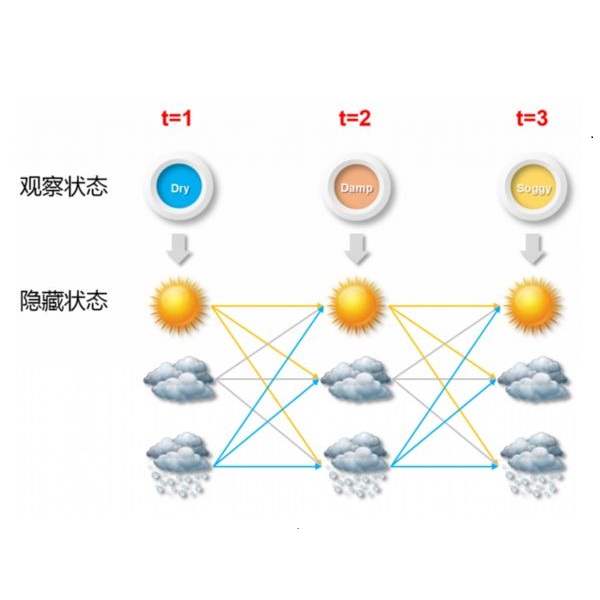

In pursuit of explainability, we develop generative models for sequential data. The proposed models provide state-of-the-art classification results and robust performance for speech phone classification. We combine modern neural networks (normalizing flows) with traditional generative models (hidden Markov models, HMMs). Normalizing flow-based mixture models (NMMs) are used to model the conditional probability distribution of the observations given the hidden state in the HMMs. Model parameters are learned through judicious combinations of time-tested Bayesian learning methods and contemporary neural network learning methods; we mainly combine expectation-maximization (EM) and mini-batch gradient descent. The proposed generative models can compute the likelihood of data and are hence directly suitable for a maximum-likelihood (ML) classification approach. Owing to the structural flexibility of HMMs, we can use different normalizing flow models. This leads to different types of HMMs, providing diversity in data modeling capacity, and that diversity provides an opportunity for easy decision fusion across models. For a standard speech phone classification setup involving 39 phones (classes) and the TIMIT dataset, we show that standard features called mel-frequency cepstral coefficients (MFCCs), the proposed generative models, and decision fusion together can achieve $86.6\%$ accuracy by generative training only. This result is close to state-of-the-art results, for example, the $86.2\%$ accuracy of the PyTorch-Kaldi toolkit [1] and the $85.1\%$ accuracy obtained using light gated recurrent units [2]. We do not use any discriminative learning approach or related sophisticated features in this article.
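To make the ML classification and decision fusion steps concrete, here is a minimal sketch. It assumes each class-specific model has already produced a log-likelihood for one input sequence. The function names, the example numbers, and the fusion rule (averaging per-class log-likelihoods across model types) are illustrative assumptions; the abstract does not state which fusion rule is used.

```python
def ml_classify(log_likelihoods):
    """Pick the class whose generative model assigns the highest log-likelihood."""
    return max(range(len(log_likelihoods)), key=lambda c: log_likelihoods[c])


def fuse_and_classify(per_model_log_likelihoods):
    """Hypothetical fusion rule: average the per-class log-likelihoods
    over all model types, then take the highest-scoring class."""
    n_models = len(per_model_log_likelihoods)
    n_classes = len(per_model_log_likelihoods[0])
    fused = [
        sum(scores[c] for scores in per_model_log_likelihoods) / n_models
        for c in range(n_classes)
    ]
    return ml_classify(fused)


# Made-up log-likelihoods for 3 classes from two hypothetical HMM variants.
model_a = [-120.0, -100.0, -130.0]
model_b = [-110.0, -118.0, -90.0]

pred_a = ml_classify(model_a)
pred_b = ml_classify(model_b)
pred_fused = fuse_and_classify([model_a, model_b])
```

In this toy example the two models disagree, and the fused score decides between them. In the setup described above there would be 39 classes, with one trained model per class and per model type.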
