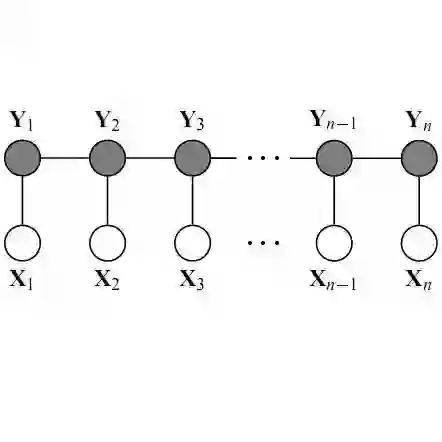

In this paper, we address the hyperspectral image (HSI) classification task with a framework based on generative adversarial networks and conditional random fields (GAN-CRF), which integrates semi-supervised deep learning with a probabilistic graphical model, and we make three contributions. First, we design four types of convolutional and transposed convolutional layers that take the characteristics of HSIs into account and help extract discriminative features from limited numbers of labeled HSI samples. Second, we construct semi-supervised GANs to alleviate the shortage of training samples by adding labels to them and implicitly reconstructing the real HSI data distribution through adversarial training. Third, we build dense conditional random fields (CRFs) on top of random variables that are initialized to the softmax predictions of the trained GANs and conditioned on the HSIs, in order to refine the classification maps. This semi-supervised framework leverages the merits of discriminative and generative models through a game-theoretical approach. Moreover, even though we used very small numbers of labeled training HSI samples from the two most challenging and extensively studied datasets, the experimental results demonstrate that spectral-spatial GAN-CRF (SS-GAN-CRF) models achieve top-ranking accuracy for semi-supervised HSI classification.
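
The refinement step in the third contribution can be illustrated with a small sketch. It is not the implementation used in this work: it assumes a Potts label compatibility, a single Gaussian kernel over pixel position and spectral features, and a naive O(N²) mean-field update rather than the efficient filtering normally used for dense CRFs. The function names, the kernel bandwidths `theta_pos` and `theta_feat`, and the pairwise `weight` are all hypothetical choices made for illustration.

```python
import math


def gaussian_kernel(p, q, f, g, theta_pos=1.0, theta_feat=0.5):
    """Similarity of two pixels from their positions (p, q) and spectra (f, g).

    The bandwidths are illustrative assumptions, not values from the paper.
    """
    d_pos = sum((a - b) ** 2 for a, b in zip(p, q))
    d_feat = sum((a - b) ** 2 for a, b in zip(f, g))
    return math.exp(-d_pos / (2 * theta_pos ** 2) - d_feat / (2 * theta_feat ** 2))


def mean_field_refine(probs, positions, features, weight=1.0, n_iters=5):
    """Naive mean-field inference for a fully connected CRF with Potts compatibility.

    probs[i][l] is the classifier's softmax probability of class l at pixel i.
    It serves both as the unary term and as the initial marginal Q_i(l).
    """
    n, k = len(probs), len(probs[0])
    # Pairwise kernel between every pair of distinct pixels.
    kern = [
        [
            0.0 if i == j else gaussian_kernel(
                positions[i], positions[j], features[i], features[j]
            )
            for j in range(n)
        ]
        for i in range(n)
    ]
    q = [row[:] for row in probs]
    for _ in range(n_iters):
        new_q = []
        for i in range(n):
            scores = []
            for l in range(k):
                # Support for label l gathered from all other pixels.
                msg = sum(kern[i][j] * q[j][l] for j in range(n))
                scores.append(math.log(probs[i][l] + 1e-12) + weight * msg)
            # Numerically stable softmax over the label scores.
            m = max(scores)
            exps = [math.exp(s - m) for s in scores]
            z = sum(exps)
            new_q.append([e / z for e in exps])
        q = new_q
    return q


# Toy example: a row of five pixels with identical spectra. The middle pixel
# is weakly predicted as class 1 while its neighbours are confidently class 0.
positions = [(0, c) for c in range(5)]
features = [(0.5,)] * 5
probs = [[0.9, 0.1], [0.9, 0.1], [0.4, 0.6], [0.9, 0.1], [0.9, 0.1]]
refined = mean_field_refine(probs, positions, features)
labels = [max(range(2), key=lambda l: row[l]) for row in refined]
```

In this toy case the isolated class-1 prediction in the middle is pulled toward the label of its spectrally identical neighbours. This is the kind of smoothing of the classification map that CRF refinement is meant to provide.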
