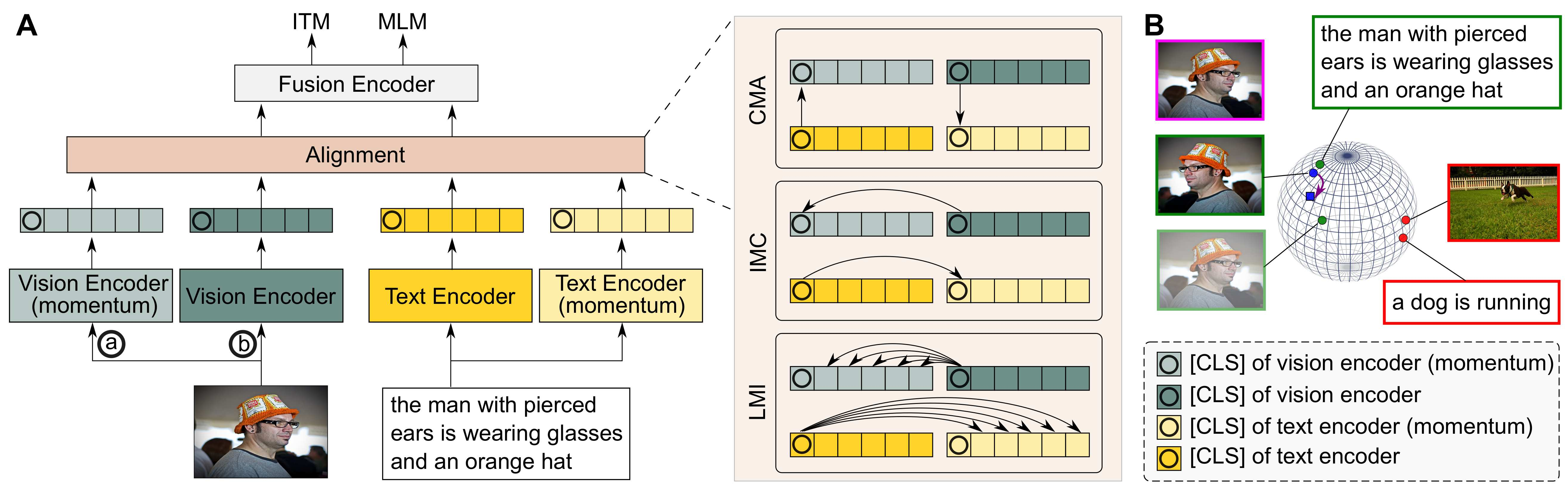

Vision-language representation learning largely benefits from image-text alignment through contrastive losses (e.g., InfoNCE loss). The success of this alignment strategy is attributed to its ability to maximize the mutual information (MI) between an image and its matched text. However, performing cross-modal alignment (CMA) alone ignores the potential of the data within each modality, which may result in degraded representations. For instance, although CMA-based models are able to map image-text pairs close together in the embedding space, they fail to ensure that similar inputs from the same modality stay close to each other. This problem can get even worse when the pre-training data is noisy. In this paper, we propose triple contrastive learning (TCL) for vision-language pre-training, which leverages both cross-modal and intra-modal self-supervision. Besides CMA, TCL introduces an intra-modal contrastive objective to provide complementary benefits in representation learning. To take advantage of localized and structural information in the image and text inputs, TCL further maximizes the average MI between local regions of the image/text and their global summary. To the best of our knowledge, ours is the first work that takes local structure information into account for multi-modality representation learning. Experimental evaluations show that our approach is competitive and achieves a new state of the art on various common downstream vision-language tasks such as image-text retrieval and visual question answering.
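
To make the combination of cross-modal and intra-modal objectives concrete, the following is a minimal sketch and not the authors' implementation. It assumes each image and text has already been encoded into a fixed-size vector, that an augmented view of each input is available for the intra-modal term, and that the two terms are simply summed with equal weight. The function names (`info_nce`, `combined_loss`), the temperature of 0.07, and the equal weighting are all assumptions made for illustration; the local-to-global MI term described in the abstract is omitted.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def info_nce(queries, keys, temperature=0.07):
    """Mean InfoNCE loss over a batch.

    queries[i] is treated as the positive match of keys[i]; every other
    key in the batch acts as a negative. The temperature value is an
    assumed default, not one taken from the paper.
    """
    q = [l2_normalize(v) for v in queries]
    k = [l2_normalize(v) for v in keys]
    total = 0.0
    for i, qi in enumerate(q):
        logits = [sum(a * b for a, b in zip(qi, kj)) / temperature for kj in k]
        # Log-sum-exp with the max subtracted for numerical stability.
        m = max(logits)
        log_denominator = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_denominator - logits[i]
    return total / len(q)


def combined_loss(img, txt, img_aug, txt_aug, temperature=0.07):
    """Cross-modal alignment plus intra-modal contrast (hypothetical equal weighting)."""
    # Cross-modal: image-to-text and text-to-text directions are averaged.
    cross = 0.5 * (info_nce(img, txt, temperature) + info_nce(txt, img, temperature))
    # Intra-modal: each input should stay close to its own augmented view.
    intra = 0.5 * (info_nce(img, img_aug, temperature) + info_nce(txt, txt_aug, temperature))
    return cross + intra
```

In this sketch the cross-modal term alone places no constraint on how two images (or two texts) relate to each other, which is the gap the intra-modal term is meant to fill. A real system would compute these losses on batched tensors from learned encoders rather than on Python lists.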
