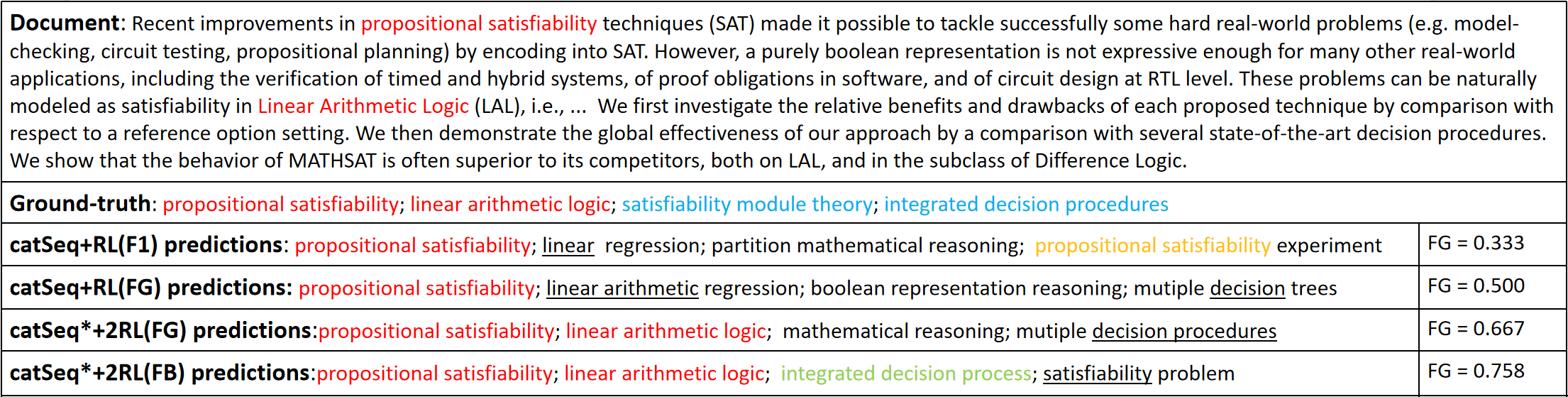

Aiming to generate a set of keyphrases, Keyphrase Generation (KG) is a classical task for capturing the central idea from a given document. Based on Seq2Seq models, the previous reinforcement learning framework on KG tasks utilizes the evaluation metrics to further improve the well-trained neural models. However, these KG evaluation metrics such as $F_1@5$ and $F_1@M$ are only aware of the exact correctness of predictions on phrase-level and ignore the semantic similarities between similar predictions and targets, which inhibits the model from learning deep linguistic patterns. In response to this problem, we propose a new fine-grained evaluation metric to improve the RL framework, which considers different granularities: token-level $F_1$ score, edit distance, duplication, and prediction quantities. On the whole, the new framework includes two reward functions: the fine-grained evaluation score and the vanilla $F_1$ score. This framework helps the model identifying some partial match phrases which can be further optimized as the exact match ones. Experiments on KG benchmarks show that our proposed training framework outperforms the previous RL training frameworks among all evaluation scores. In addition, our method can effectively ease the synonym problem and generate a higher quality prediction. The source code is available at \url{https://github.com/xuyige/FGRL4KG}.

翻译:想要生成一套关键词句, Keyshand Page (KG) 是一个经典任务, 用来从给定文件获取中心思想。 基于 Seq2Sequeq 模型, 先前的KG任务强化学习框架利用评估指标来进一步改进训练有素的神经模型。 然而, 这些KG评价指标, 如$F_ 1@ 5$和$F_ 1@M$, 仅知道对语句水平的预测准确无误, 忽略了类似预测和目标之间的语义相似性, 从而阻碍了模型学习深层语言模式。 针对这一问题, 我们提出了一个新的精细精细化评价指标, 以改进RL 框架, 考虑不同的颗粒值: 象征性值$1$, 编辑距离、 重复和预测数量。 总的来说, 新框架包含两个奖励功能: 精细度评价分和香力 $F_ 1$。 这个框架帮助模型确定一些部分匹配的词句, 可以进一步优化模型与精确的语言模式匹配。 在 KG+ 质量框架上, 实验所有 基准 显示我们提议的G_ 测试方法 能够生成我们先前的评估方法 。 。 在 RG_ 测试中, 格式中, 测试中, 测试框架可以使我们之前的系统 测试框架 能够 生成系统 改进我们之前的系统 格式 。