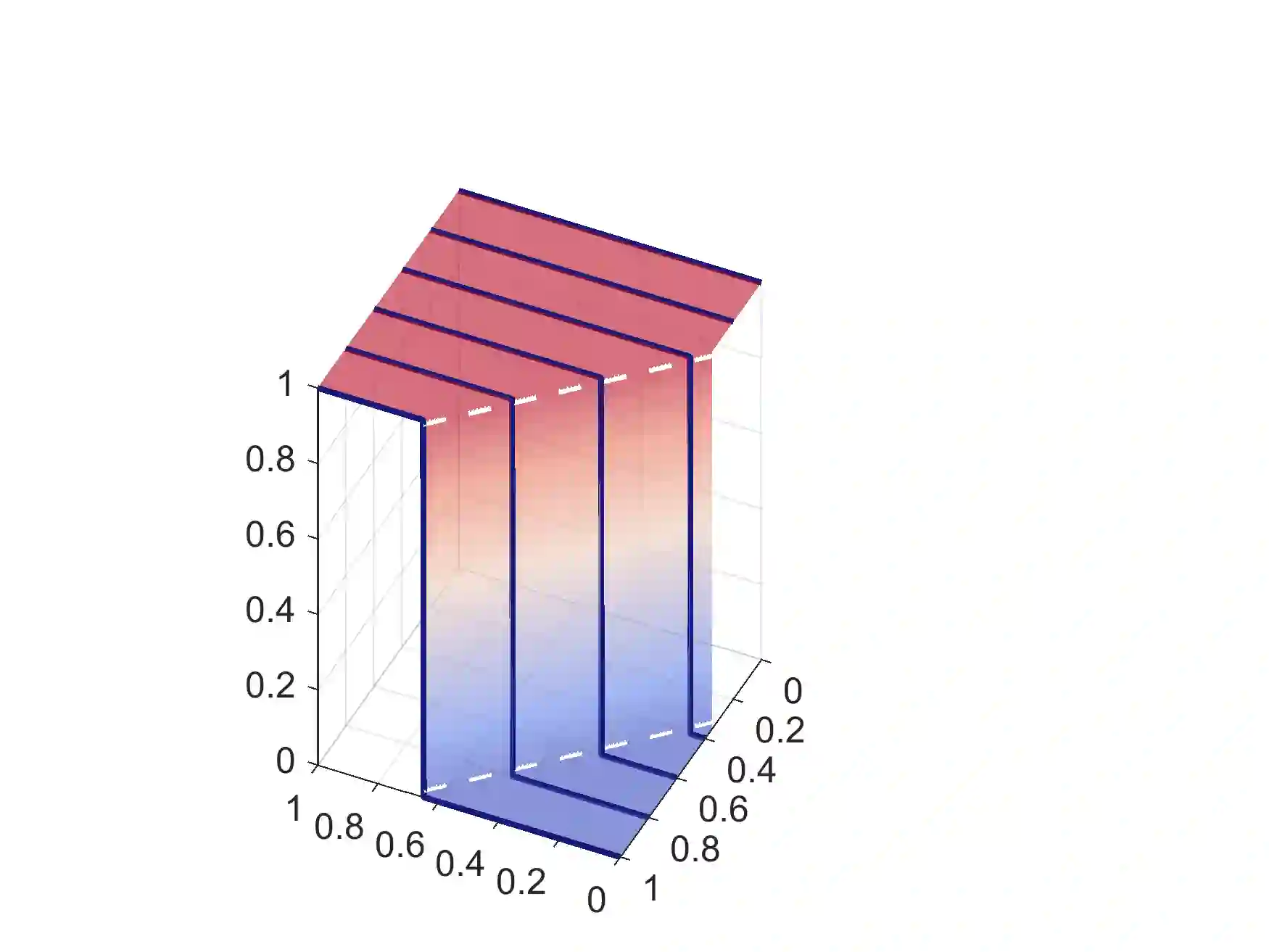

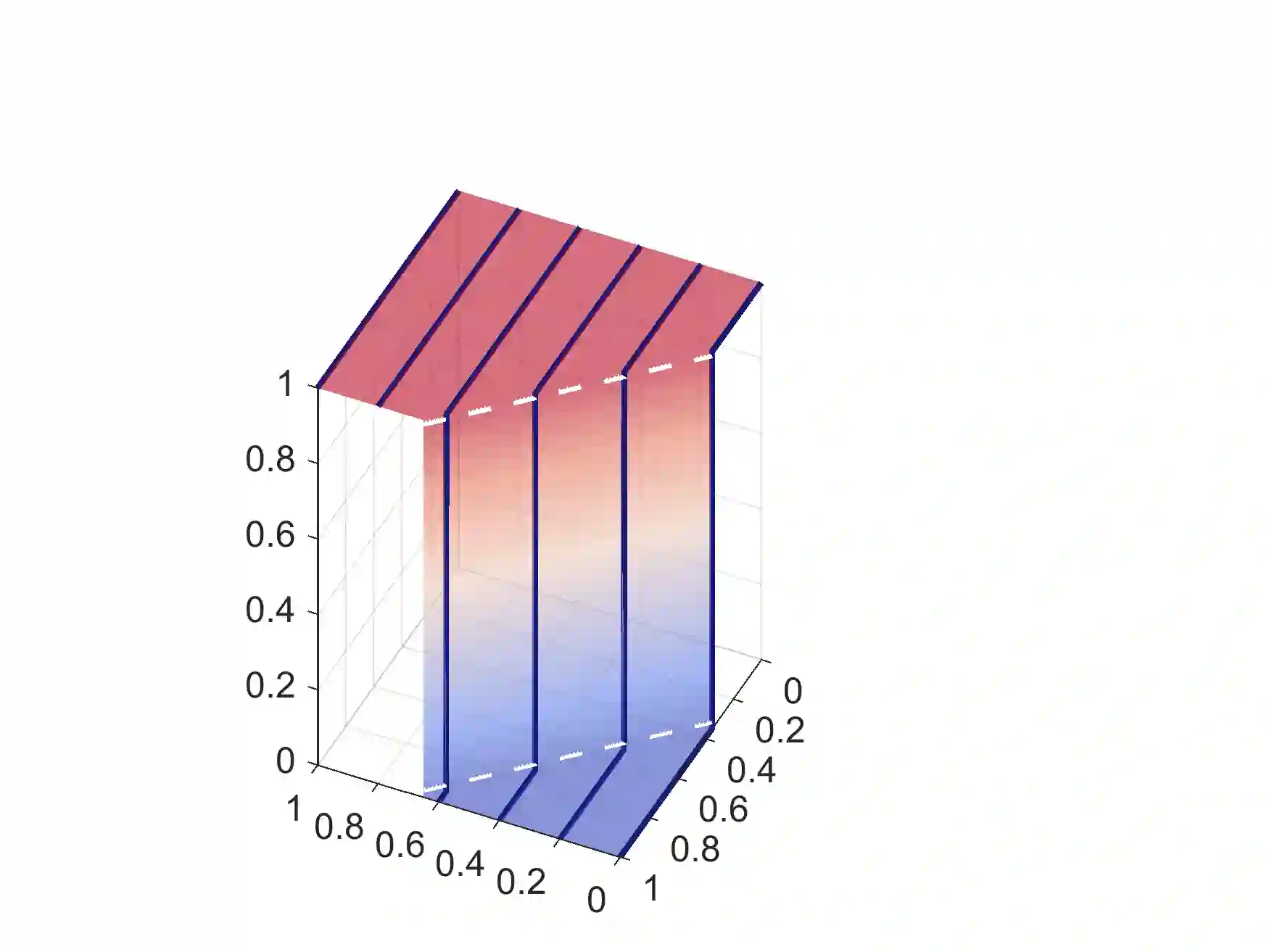

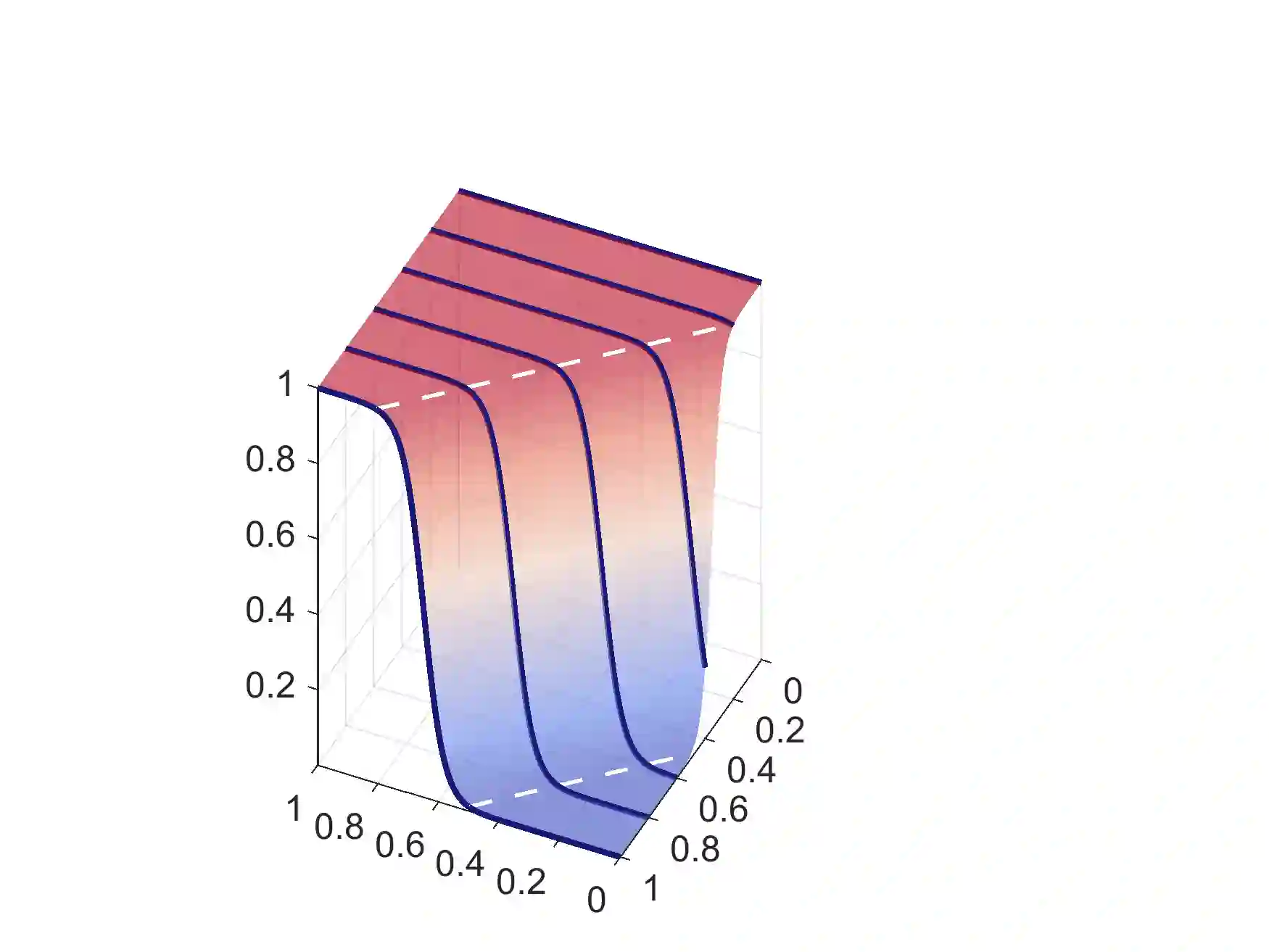

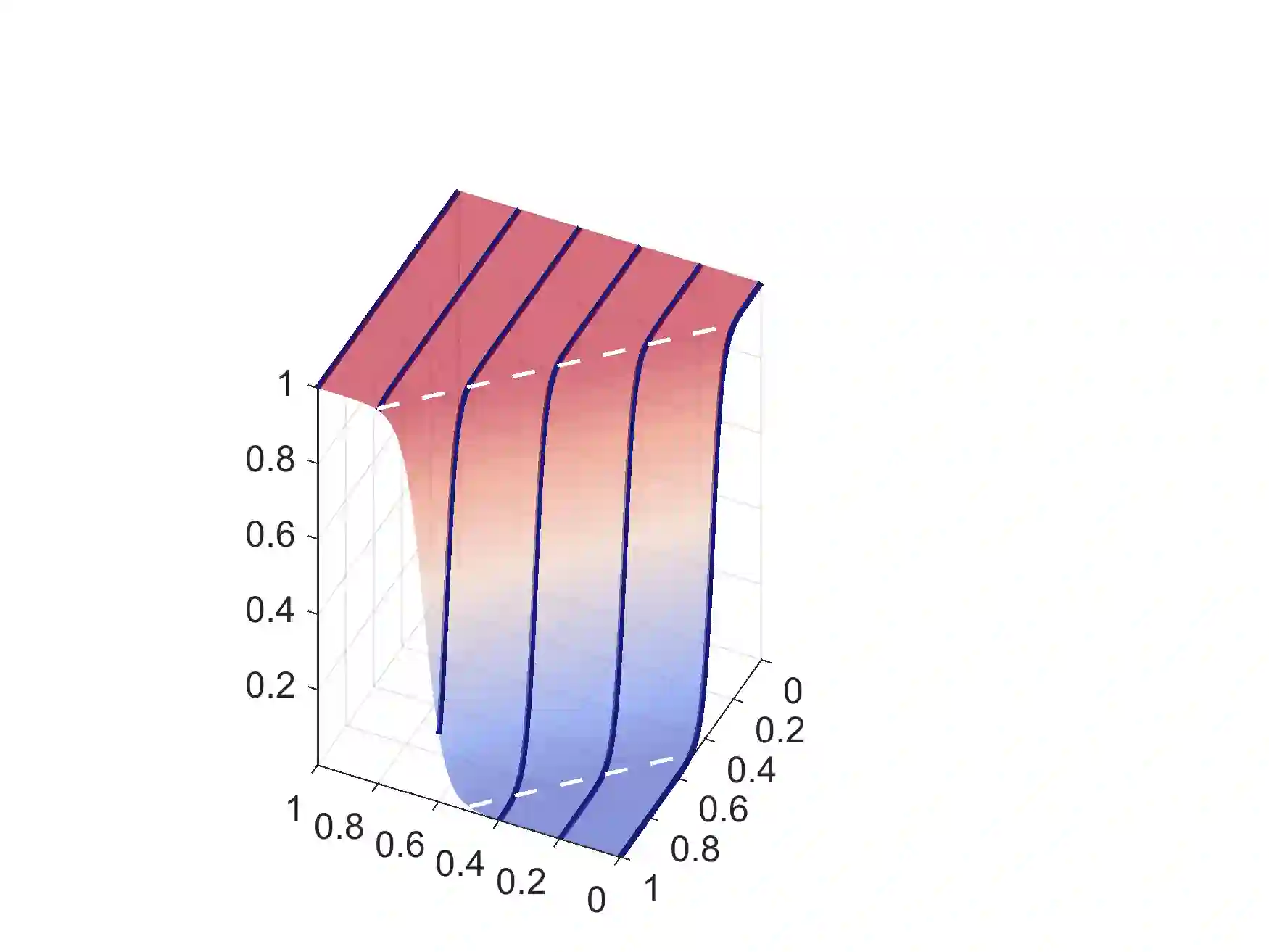

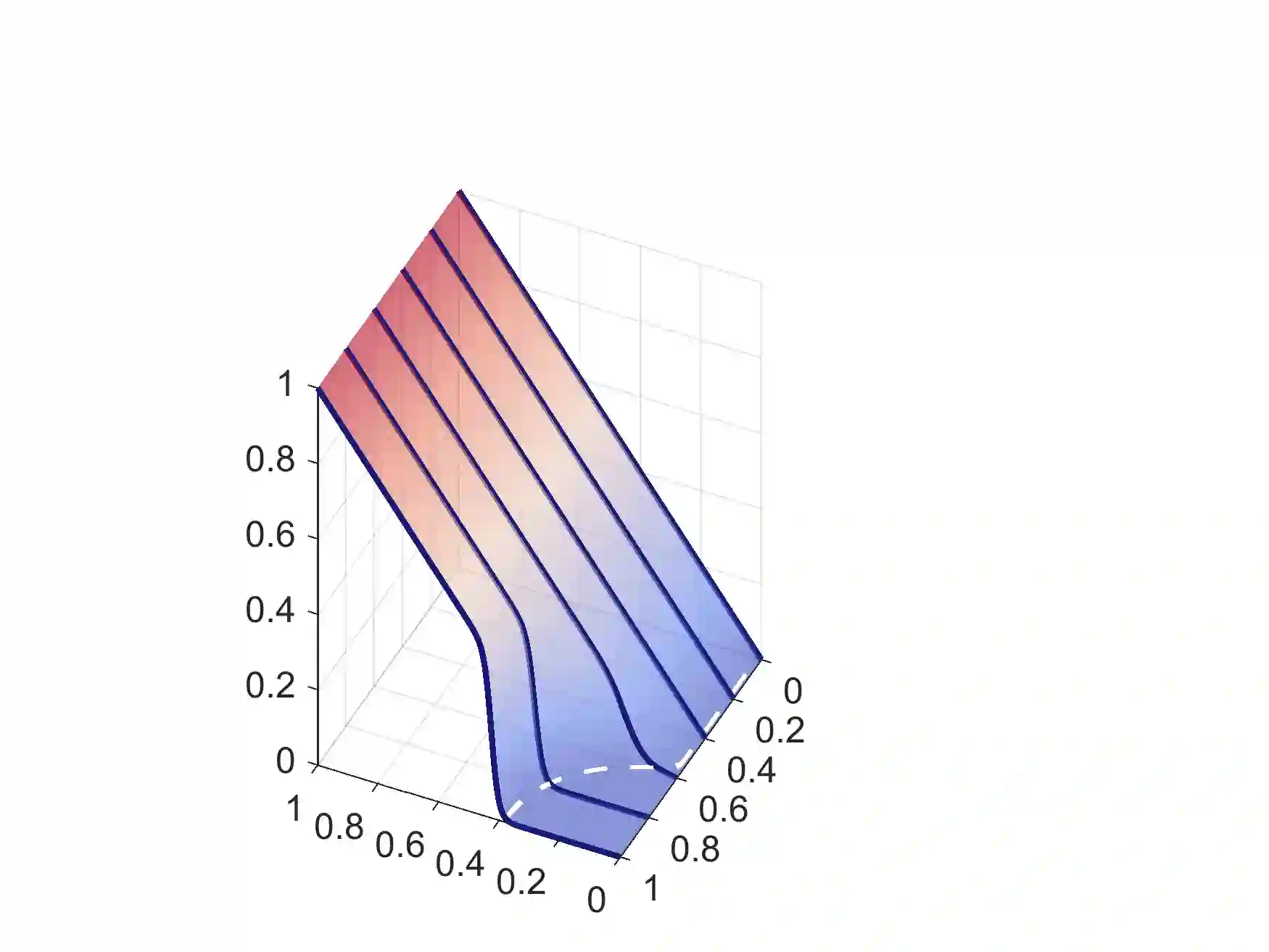

This paper provides a pair similarity optimization viewpoint on deep feature learning, aiming to maximize the within-class similarity $s_p$ and minimize the between-class similarity $s_n$. We find that a majority of loss functions, including the triplet loss and the softmax plus cross-entropy loss, embed $s_n$ and $s_p$ into similarity pairs and seek to reduce $(s_n-s_p)$. Such an optimization manner is inflexible, because the penalty strength on every single similarity score is restricted to be equal. Our intuition is that if a similarity score deviates far from the optimum, it should be emphasized. To this end, we simply re-weight each similarity score to highlight the less-optimized ones. The result is the Circle loss, named for its circular decision boundary. The Circle loss has a unified formula for two elemental deep feature learning approaches, i.e., learning with class-level labels and learning with pair-wise labels. Analytically, we show that, compared with loss functions that optimize $(s_n-s_p)$, the Circle loss offers a more flexible optimization approach towards a more definite convergence target. Experimentally, we demonstrate the superiority of the Circle loss on a variety of deep feature learning tasks. On face recognition, person re-identification, and several fine-grained image retrieval datasets, the achieved performance is on par with the state of the art.
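The abstract describes both losses only in words. For reference, here is the formulation as we read it in the full paper, for one sample with $K$ within-class scores $s_p^i$ and $L$ between-class scores $s_n^j$, a scale factor $\gamma$ and a margin $m$. Treat the specific settings of the optima $O_p, O_n$ and margins $\Delta_p, \Delta_n$ as assumptions to check against the paper. Setting $K = L = 1$ and equating the circle-loss exponent to zero gives the decision boundary:

$$
\begin{aligned}
\mathcal{L}_{uni} &= \log\Big[1 + \sum_{i=1}^{K}\sum_{j=1}^{L}\exp\big(\gamma(s_n^j - s_p^i + m)\big)\Big] \\
\mathcal{L}_{circle} &= \log\Big[1 + \sum_{j=1}^{L}\exp\big(\gamma\,\alpha_n^j(s_n^j - \Delta_n)\big)\sum_{i=1}^{K}\exp\big(-\gamma\,\alpha_p^i(s_p^i - \Delta_p)\big)\Big] \\
\alpha_p^i &= [O_p - s_p^i]_+, \quad \alpha_n^j = [s_n^j - O_n]_+, \quad O_p = 1+m,\ O_n = -m,\ \Delta_p = 1-m,\ \Delta_n = m
\end{aligned}
$$

$$
\begin{aligned}
0 &= \alpha_n(s_n - \Delta_n) - \alpha_p(s_p - \Delta_p) \\
  &= (s_n + m)(s_n - m) - \big(m + (1 - s_p)\big)\big(m - (1 - s_p)\big) \\
  &= s_n^2 - m^2 - m^2 + (1 - s_p)^2 \\
\Longrightarrow\quad s_n^2 + (s_p - 1)^2 &= 2m^2
\end{aligned}
$$

This is a circle centred at $(s_n, s_p) = (0, 1)$ with radius $\sqrt{2}\,m$ (in the region where both $\alpha$ weights are positive), whereas the single-pair boundary of $\mathcal{L}_{uni}$ is the straight line $s_p - s_n = m$.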
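To make the equal-penalty versus re-weighted contrast concrete, here is a minimal pure-Python sketch that computes forward loss values for one sample's within-class scores `sp` and between-class scores `sn`. The function names (`unified_loss`, `circle_loss`, `circle_weights`, `log_sum_exp`, `softplus`), the settings $O_p = 1+m$, $O_n = -m$, $\Delta_p = 1-m$, $\Delta_n = m$, and the illustrative defaults `gamma=32.0`, `m=0.25` are our own assumptions, not the authors' code or tuned hyperparameters. No gradients are computed, so the weights $\alpha$ are simply plain numbers.

```python
import math
from typing import List, Tuple


def log_sum_exp(values: List[float]) -> float:
    """Numerically stable log(sum(exp(v))) over a non-empty list."""
    top = max(values)
    return top + math.log(sum(math.exp(v - top) for v in values))


def softplus(x: float) -> float:
    """Stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def unified_loss(sp: List[float], sn: List[float],
                 gamma: float = 32.0, m: float = 0.25) -> float:
    """log[1 + sum_i sum_j exp(gamma * (s_n^j - s_p^i + m))].

    The double sum factorizes into (sum_j ...) * (sum_i ...), so we add the
    two log-sum-exps. Every score is scaled by the same gamma (equal penalty).
    """
    logit_n = log_sum_exp([gamma * (s + m) for s in sn])
    logit_p = log_sum_exp([-gamma * s for s in sp])
    return softplus(logit_n + logit_p)


def circle_weights(sp: List[float], sn: List[float],
                   m: float = 0.25) -> Tuple[List[float], List[float]]:
    """Weights alpha_p = [O_p - s_p]_+ and alpha_n = [s_n - O_n]_+."""
    o_p, o_n = 1.0 + m, -m  # assumed optima for s_p and s_n
    alpha_p = [max(o_p - s, 0.0) for s in sp]
    alpha_n = [max(s - o_n, 0.0) for s in sn]
    return alpha_p, alpha_n


def circle_loss(sp: List[float], sn: List[float],
                gamma: float = 32.0, m: float = 0.25) -> float:
    """log[1 + sum_j exp(g*a_n*(s_n - D_n)) * sum_i exp(-g*a_p*(s_p - D_p))]."""
    delta_p, delta_n = 1.0 - m, m  # assumed margins
    alpha_p, alpha_n = circle_weights(sp, sn, m)
    logit_n = log_sum_exp([gamma * a * (s - delta_n) for a, s in zip(alpha_n, sn)])
    logit_p = log_sum_exp([-gamma * a * (s - delta_p) for a, s in zip(alpha_p, sp)])
    return softplus(logit_n + logit_p)


if __name__ == "__main__":
    m = 0.25
    # A single pair on s_n^2 + (s_p - 1)^2 = 2 m^2 lies on the decision boundary,
    # so its exponent is zero and the loss equals log 2.
    sp_edge = 1.0 - math.sqrt(2.0) * m
    print(circle_loss([sp_edge], [0.0], m=m), math.log(2.0))
    # Weights grow as a score moves away from its optimum.
    print(circle_weights([0.3, 0.9], [0.6, 0.0], m=m))
```

In `circle_weights`, a poorly optimized score (e.g. $s_p = 0.3$ or $s_n = 0.6$) receives a larger weight than a nearly optimized one, while `unified_loss` scales every score by the same $\gamma$. For a single pair, `unified_loss` returns $\log 2$ exactly on its line $s_p - s_n = m$, and `circle_loss` returns $\log 2$ on the circle.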
