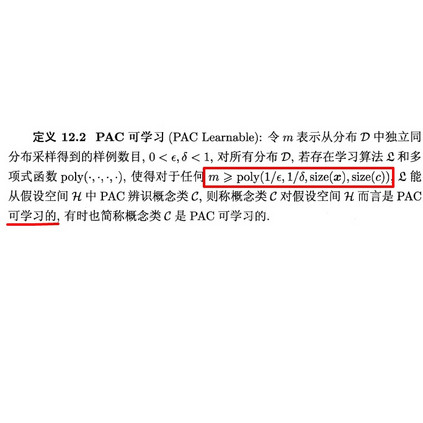

Recently, regular decision processes have been proposed as a well-behaved form of non-Markov decision process. Regular decision processes are characterised by a transition function and a reward function that depend on the whole history, but only in a regular way (in the sense of regular languages). In practice, both the transition and the reward functions can be seen as finite transducers. We study reinforcement learning in regular decision processes. Our main contribution is to show that a near-optimal policy can be PAC-learned in time polynomial in a set of parameters that describe the underlying decision process. We argue that the identified set of parameters is minimal and that it reasonably captures the difficulty of a regular decision process.
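To illustrate what it means for a reward function to depend on the whole history "regularly", the following is a minimal sketch of a reward function written as a deterministic finite transducer (a Mealy machine). The `Transducer` class, the state names, and the key-and-door task are hypothetical and chosen only for illustration. They are not taken from the paper, and the sketch does not implement the learning algorithm.

```python
from dataclasses import dataclass
from typing import Dict, Hashable, Iterable, List, Tuple

State = Hashable
Symbol = Hashable


@dataclass(frozen=True)
class Transducer:
    """Deterministic finite transducer (Mealy machine), illustrative only."""

    initial: State
    # Maps (current state, input symbol) -> (next state, emitted reward).
    delta: Dict[Tuple[State, Symbol], Tuple[State, float]]

    def run(self, history: Iterable[Symbol]) -> List[float]:
        """Return the reward emitted at each step of the history."""
        state = self.initial
        rewards = []
        for symbol in history:
            state, reward = self.delta[(state, symbol)]
            rewards.append(reward)
        return rewards


# Hypothetical toy task: observing "door" pays 1.0 only if "key" was
# observed at some earlier step. The reward is not a function of the
# current observation alone, but two states suffice to track what matters.
reward_fn = Transducer(
    initial="no_key",
    delta={
        ("no_key", "empty"): ("no_key", 0.0),
        ("no_key", "key"): ("has_key", 0.0),
        ("no_key", "door"): ("no_key", 0.0),
        ("has_key", "empty"): ("has_key", 0.0),
        ("has_key", "key"): ("has_key", 0.0),
        ("has_key", "door"): ("has_key", 1.0),
    },
)

rewards_a = reward_fn.run(["empty", "door", "key", "door"])
rewards_b = reward_fn.run(["door", "empty", "door"])
print(rewards_a)  # [0.0, 0.0, 0.0, 1.0]
print(rewards_b)  # [0.0, 0.0, 0.0]
```

The same observation, "door", yields different rewards depending on the preceding history, yet the dependence is captured by a finite number of states. In this toy example the number of transducer states is the kind of quantity one would expect to influence how hard the process is to learn.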
