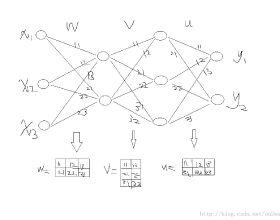

A neural network model of a differential equation, known as a neural ODE, has enabled highly accurate learning of continuous-time dynamical systems and probability distributions. A neural ODE applies the same network repeatedly during numerical integration. The memory consumption of the backpropagation algorithm is therefore proportional to the number of network uses times the network size, and this remains true even if a checkpointing scheme divides the computation graph into sub-graphs. Alternatively, the adjoint method obtains the gradient by numerical integration backward in time. Although this method consumes memory only for a single network use, it incurs a high computational cost to suppress numerical errors. This study proposes the symplectic adjoint method, an adjoint method solved by a symplectic integrator. The symplectic adjoint method obtains the exact gradient (up to rounding error) with memory proportional to the number of uses plus the network size. The experimental results demonstrate that the symplectic adjoint method consumes much less memory than the naive backpropagation algorithm and checkpointing schemes, runs faster than the adjoint method, and is more robust to rounding errors.
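The memory scaling stated in the abstract can be made concrete with a small cost model. The sketch below is illustrative only: the function name `memory_cost`, the arbitrary units, and the example values are assumptions, and all constant factors are ignored. It encodes just the three proportionalities given above and is not the paper's implementation.

```python
def memory_cost(num_uses: int, network_size: int) -> dict:
    """Rough memory estimates in arbitrary units (hypothetical cost model).

    num_uses:     how many times the network is evaluated during integration
    network_size: memory needed to retain one network evaluation
    """
    return {
        # Backpropagation retains every network use: uses x size.
        "backprop": num_uses * network_size,
        # The adjoint method retains only a single network use.
        "adjoint": network_size,
        # The symplectic adjoint method: uses + size.
        "symplectic_adjoint": num_uses + network_size,
    }


costs = memory_cost(num_uses=100, network_size=50)
for method, units in costs.items():
    print(f"{method}: {units}")
```

Under these assumed values, the product term for backpropagation dominates as either factor grows, while the symplectic adjoint method's cost grows only additively. The model says nothing about computation time or numerical error, which is where the abstract distinguishes the adjoint method from the symplectic adjoint method.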
