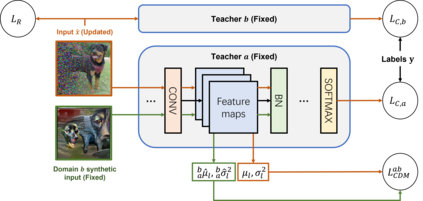

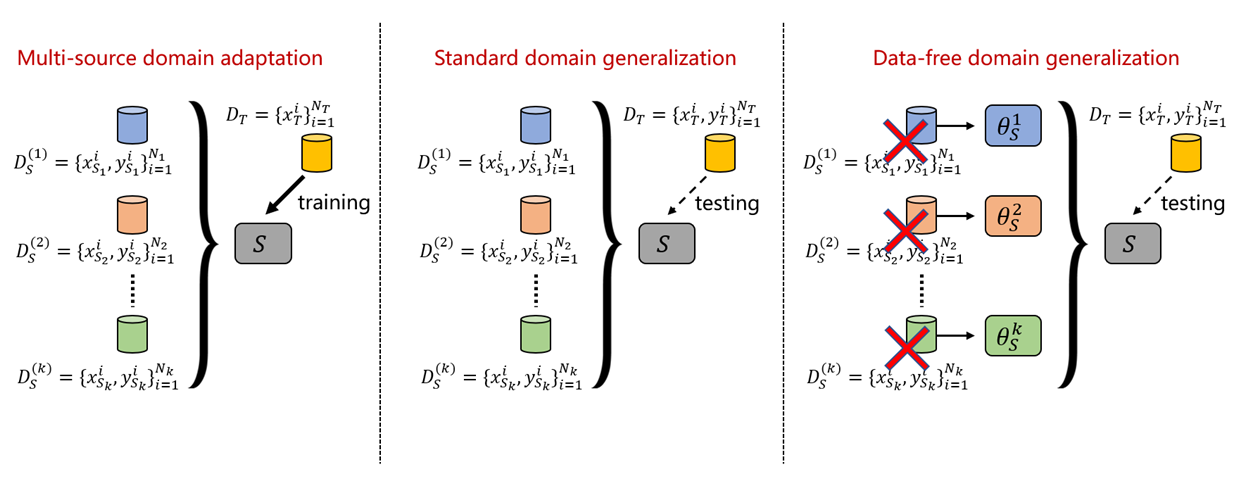

In this work, we investigate the unexplored intersection of domain generalization (DG) and data-free learning. In particular, we address the question: how can knowledge contained in models trained on different source domains be merged into a single model that generalizes well to unseen target domains, in the absence of source and target domain data? Machine learning models that can cope with domain shift are essential for real-world scenarios in which data distributions change frequently. Prior DG methods typically rely on source domain data, making them unsuitable for private, decentralized data. We define the novel problem of Data-Free Domain Generalization (DFDG), a practical setting in which models trained separately on the source domains are available instead of the original datasets, and investigate how to solve the domain generalization problem effectively in that case. We propose DEKAN, an approach that extracts and fuses domain-specific knowledge from the available teacher models into a student model robust to domain shift. Our empirical evaluation demonstrates the effectiveness of our method, which achieves the first state-of-the-art results in DFDG by significantly outperforming data-free knowledge distillation and ensemble baselines.
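To make the teacher-to-student setting concrete, the following is a minimal sketch of an ensemble target of the kind the abstract lists as a baseline: the class probabilities of several domain-specific teachers are averaged, and a student prediction is scored against that average with a KL-divergence distillation loss. This is not DEKAN itself, whose details the abstract does not give. The function names, the logits, and the choice of plain averaging are all assumptions made for illustration, and how inputs are obtained without any data is outside this sketch.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def ensemble_probs(teacher_logits):
    """Average the class probabilities predicted by each teacher.

    Plain averaging is an assumption; other fusion rules are possible.
    """
    probs = [softmax(logits) for logits in teacher_logits]
    n_teachers = len(probs)
    n_classes = len(probs[0])
    return [sum(p[c] for p in probs) / n_teachers for c in range(n_classes)]


def kl_divergence(target, predicted, eps=1e-12):
    """KL(target || predicted) for one sample, a common distillation loss."""
    return sum(
        t * math.log((t + eps) / (p + eps))
        for t, p in zip(target, predicted)
        if t > 0
    )


# Hypothetical logits from three source-domain teachers for one input.
teacher_logits = [
    [2.0, 0.5, -1.0],
    [1.5, 1.0, -0.5],
    [0.2, 2.2, 0.0],
]
target = ensemble_probs(teacher_logits)

# Hypothetical student logits for the same input.
student = softmax([1.0, 1.0, 0.0])
loss = kl_divergence(target, student)
```

Minimizing `loss` over many inputs would pull the student toward the averaged teacher prediction. In the data-free setting those inputs cannot come from the source datasets, which is the gap the proposed method is meant to address.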
