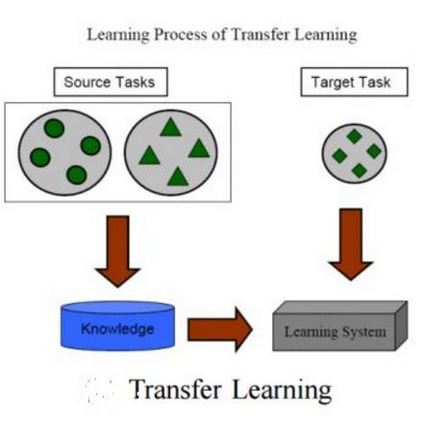

This paper presents a hands-on comparison of state-of-the-art music source separation deep neural networks (DNNs), before and after task-specific fine-tuning, for separating speech from non-speech content in broadcast audio (i.e., dialog separation). The music separation models are selected because they share the number of channels (2) and the sampling rate (44.1 kHz or higher) with the considered broadcast content, and because vocal separation in music is considered a parallel to dialog separation in the target application domain. These similarities are assumed to enable transfer learning between the tasks. Three models pre-trained on music (Open-Unmix, Spleeter, and Conv-TasNet) are considered in the experiments and fine-tuned with real broadcast data. The performance of the models is evaluated before and after fine-tuning with computational evaluation metrics (SI-SIRi, SI-SDRi, 2f-model), as well as with a listening test simulating an application in which the non-speech signal is partially attenuated, e.g., for better speech intelligibility. The evaluations include two reference systems specifically developed for dialog separation. The results indicate that pre-trained music source separation models can be used for dialog separation to some degree, and that they benefit from fine-tuning, reaching a performance close to that of task-specific solutions.
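As an illustration of one of the metrics named above, the following minimal sketch computes SI-SDR and its improvement over the unprocessed mixture (SI-SDRi) using the standard scale-invariant definition, and remixes separated dialog with a partially attenuated background. It is not the paper's evaluation code: the function names, the mono list-of-samples representation, and the attenuation value are assumptions made for this example.

```python
import math


def si_sdr(estimate, reference):
    """Scale-invariant SDR in dB for two equal-length 1-D sample sequences.

    Assumes a non-silent reference and an estimate that is not a perfect
    scaled copy of it (otherwise the ratio below is undefined).
    """
    dot = sum(e * r for e, r in zip(estimate, reference))
    ref_energy = sum(r * r for r in reference)
    scale = dot / ref_energy  # projection of the estimate onto the reference
    target = [scale * r for r in reference]
    residual = [e - t for e, t in zip(estimate, target)]
    target_energy = sum(t * t for t in target)
    residual_energy = sum(x * x for x in residual)
    return 10.0 * math.log10(target_energy / residual_energy)


def si_sdr_improvement(estimate, mixture, reference):
    """SI-SDRi: SI-SDR of the estimate minus SI-SDR of the input mixture."""
    return si_sdr(estimate, reference) - si_sdr(mixture, reference)


def remix(dialog, background, attenuation_db):
    """Add the background back to the dialog, attenuated by attenuation_db.

    Hypothetical helper: the attenuation level is chosen by the caller.
    """
    gain = 10.0 ** (-attenuation_db / 20.0)
    return [d + gain * b for d, b in zip(dialog, background)]


# Toy signals: two orthogonal sinusoids stand in for speech and background.
n = 1000
speech = [math.sin(2 * math.pi * 5 * i / n) for i in range(n)]
background = [math.sin(2 * math.pi * 50 * i / n) for i in range(n)]
mixture = [s + b for s, b in zip(speech, background)]

# A hypothetical separator output that leaves 10% of the background amplitude.
estimate = [s + 0.1 * b for s, b in zip(speech, background)]

improvement_db = si_sdr_improvement(estimate, mixture, speech)
enhanced_mix = remix(speech, background, attenuation_db=20.0)
```

Because the estimate is projected onto the reference before the ratio is formed, rescaling the estimate leaves the score unchanged, which is what makes the metric scale-invariant.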
