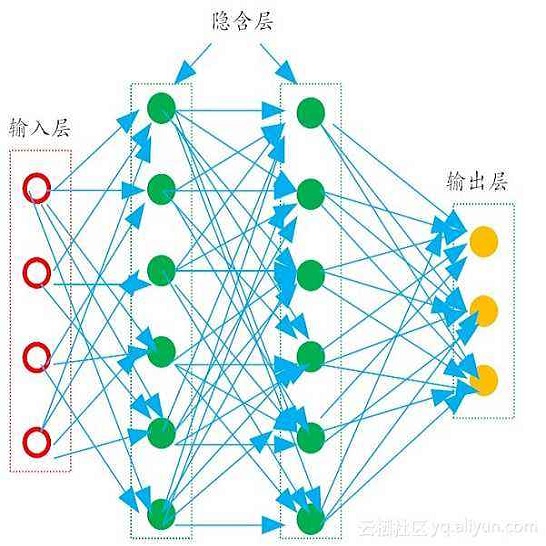

Reliable robotic grasping, especially of deformable objects such as fruit, remains challenging due to underactuated contact interactions with the gripper, unknown object dynamics, and variable object geometries. In this study, we propose a Transformer-based robotic grasping framework for rigid grippers that leverages tactile and visual information for safe object grasping. Specifically, the Transformer models learn physical feature embeddings from sensor feedback collected while performing two predefined exploratory actions (pinching and sliding), and a multilayer perceptron (MLP) predicts the final grasping outcome for a given grasping strength. Using these predictions at inference time, the gripper is commanded with a safe grasping strength for the grasping task. Compared with convolutional recurrent networks, the Transformer models can capture long-term dependencies across image sequences and process spatial and temporal features simultaneously. We first benchmark the proposed Transformer models on a public slip-detection dataset. We then show that the Transformer models outperform a CNN+LSTM model in terms of grasping accuracy and computational efficiency. We also collect our own fruit grasping dataset and conduct online grasping experiments with the proposed framework on both seen and unseen fruits. Our code and dataset are publicly available on GitHub.
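
The inference step described above can be sketched in plain Python: attend over a sequence of per-frame features, pool them into one embedding, score candidate grasping strengths with a predictor, and command a safe strength. This is a minimal, hypothetical illustration under stated assumptions, not the authors' implementation. `self_attention` is a parameter-free, single-head scaled dot-product attention standing in for the Transformer (no learned projections, positional encodings, or tactile/visual fusion). `mean_pool`, `select_safe_strength`, and `toy_predictor` are hypothetical names. The grasping outcome is assumed to be a binary success probability, and "safe" is assumed to mean the weakest candidate strength whose predicted success clears a threshold.

```python
import math
from typing import Callable, List, Optional, Sequence

Vector = List[float]
# Hypothetical predictor interface: (embedding, strength) -> P(grasp succeeds).
# In the framework above this role is played by an MLP; here it is any callable.
Predictor = Callable[[Sequence[float], float], float]


def self_attention(frames: Sequence[Sequence[float]]) -> List[Vector]:
    """Parameter-free scaled dot-product self-attention (Q = K = V = frames).

    Each output row is a softmax-weighted mix of all frames, so the first and
    last frames interact directly instead of through a recurrent state.
    """
    d = len(frames[0])
    out: List[Vector] = []
    for q in frames:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in frames]
        peak = max(scores)  # subtract the max before exp for numerical stability
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        out.append([sum(w * v[j] for w, v in zip(weights, frames)) / total
                    for j in range(d)])
    return out


def mean_pool(rows: Sequence[Sequence[float]]) -> Vector:
    """Average per-frame features into a single sequence-level embedding."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def select_safe_strength(
    embedding: Sequence[float],
    candidate_strengths: Sequence[float],
    predict_success: Predictor,
    threshold: float = 0.5,
) -> Optional[float]:
    """Return the weakest candidate strength predicted to succeed, else None.

    Assumption: the weakest passing strength is the "safe" choice, because a
    lower force is less likely to bruise a deformable object such as fruit.
    """
    for strength in sorted(candidate_strengths):
        if predict_success(embedding, strength) >= threshold:
            return strength
    return None


def toy_predictor(embedding: Sequence[float], strength: float) -> float:
    """Hypothetical stand-in for a trained MLP head: success becomes likely once
    the strength exceeds a 'required' value read from the embedding's first entry."""
    return 1.0 / (1.0 + math.exp(-10.0 * (strength - embedding[0])))


if __name__ == "__main__":
    # Three identical toy frame features pool to the embedding [0.5, 0.0].
    frames = [[0.5, 0.0], [0.5, 0.0], [0.5, 0.0]]
    embedding = mean_pool(self_attention(frames))
    print(select_safe_strength(embedding, [0.2, 0.4, 0.6, 0.8, 1.0], toy_predictor))
```

Sweeping the candidates in ascending order means the first strength that passes the threshold is also the gentlest one; returning `None` leaves the caller to decide what to do when no candidate is predicted to succeed (for example, re-explore or abort).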

翻译:可靠的机器人捕捉,特别是变形物体(例如水果)的捕捉,仍然是一项艰巨的任务,因为与抓取器、不明物体动态和可变天体的几何的接触互动不足。在本研究中,我们提议为僵硬的抓抓器建立一个以变压器为基础的机器人捕捉框架,以利用触动和视觉信息来安全捕捉物体。具体地说,变压器模型通过执行两个预先定义的探索动作(悬浮和滑动),学习与传感器反馈相嵌的物理特征,并预测通过一个具有一定捕捉力的多层透视器(MLP)获得最后的捕捉捉摸结果。使用这些预测,控制器被以安全掌握掌握能力来通过猜测掌握任务。与变压器经常网络相比,变压器模型可以同时捕捉图像序列之间的长期依赖性关系,并处理空间时空特征。我们首先将拟议的变压器模型基准放在一个公共数据集上,以便侦测。随后,我们显示变压器模型在掌握我们公共的精确度和掌握结果的模型上,同时收集我们所看到的数据。