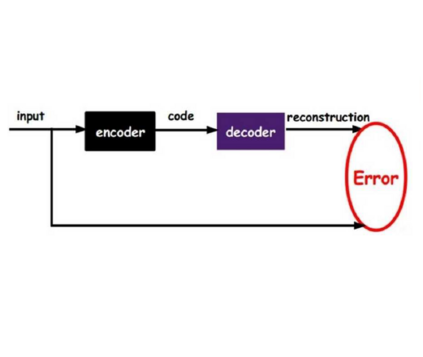

Face anti-spoofing (FAS) methods perform well in intra-domain setups, but their cross-domain performance is unsatisfactory. Domain generalization methods have been used to align features from different domains extracted by a convolutional neural network (CNN) backbone, but the improvement is limited. Recently, the Vision Transformer (ViT) model has performed well on various visual tasks. However, ViT relies heavily on pre-training on large-scale datasets, a requirement that existing FAS datasets cannot meet. In this paper, taking the FAS task as an example, we propose the Masked Contrastive Autoencoder (MCAE) method to solve this problem using only limited data. To enable the feature extractor to extract common features of live samples from different domains, we combine Masked Image Model (MIM) with supervised contrastive learning to train our model. We summarize some intriguing design principles for performing MIM pre-training for downstream tasks, and we analyze our method from an information theory perspective. Experimental results show that our approach performs well on extensive public datasets and outperforms state-of-the-art methods.
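
The abstract does not spell out the training objective, so the following is only a minimal sketch of how a masked reconstruction loss and a supervised contrastive loss might be combined. It assumes MAE-style random patch masking, a mean squared error computed on masked patches only, and the standard supervised contrastive (SupCon) loss over L2-normalized features. All function names, the mask ratio, the temperature, the loss weight, and the label convention (1 = live, 0 = spoof) are hypothetical and are not taken from the paper.

```python
import math
import random


def random_mask(num_patches, mask_ratio, rng):
    """Split patch indices into (visible, masked) lists by random masking."""
    idx = list(range(num_patches))
    rng.shuffle(idx)
    n_mask = int(num_patches * mask_ratio)
    return sorted(idx[n_mask:]), sorted(idx[:n_mask])


def masked_mse(pred, target, masked):
    """Mean squared error over the masked patches only."""
    total, count = 0.0, 0
    for i in masked:
        for p, t in zip(pred[i], target[i]):
            total += (p - t) ** 2
            count += 1
    return total / count if count else 0.0


def _normalize(v):
    """Scale a vector to unit L2 norm (zero vectors are left unchanged)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def supcon_loss(features, labels, temperature=0.1):
    """Supervised contrastive loss, averaged over anchors that have positives."""
    z = [_normalize(f) for f in features]
    n = len(z)
    losses = []
    for i in range(n):
        positives = [j for j in range(n) if j != i and labels[j] == labels[i]]
        if not positives:
            continue
        # Scaled cosine similarity between anchor i and every other sample.
        logits = {
            j: sum(a * b for a, b in zip(z[i], z[j])) / temperature
            for j in range(n)
            if j != i
        }
        # Numerically stable log-sum-exp over all non-anchor samples.
        m = max(logits.values())
        log_denom = m + math.log(sum(math.exp(v - m) for v in logits.values()))
        losses.append(
            -sum(logits[j] - log_denom for j in positives) / len(positives)
        )
    return sum(losses) / len(losses) if losses else 0.0


def combined_loss(pred, target, masked, features, labels, weight=1.0):
    """Reconstruction loss plus a weighted contrastive term (assumed form)."""
    return masked_mse(pred, target, masked) + weight * supcon_loss(features, labels)
```

In this sketch the contrastive term pulls together features that share a label regardless of which dataset they come from, which is one plausible reading of "common features in live samples from different domains"; the paper's actual formulation may differ.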
