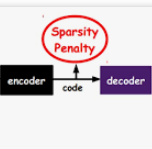

Sparse document representations have been widely used to retrieve relevant documents via exact lexical matching. Owing to their pre-computed inverted index, they support fast ad-hoc search but suffer from the vocabulary mismatch problem. Although recent neural ranking models using pre-trained language models can address this problem, they usually incur expensive query inference costs, implying a trade-off between effectiveness and efficiency. To tackle this trade-off, we propose a novel uni-encoder ranking model, Sparse retriever using a Dual document Encoder (SpaDE), which learns document representations via a dual encoder. The two encoders respectively (i) adjust the importance of terms to improve lexical matching and (ii) expand additional terms to support semantic matching. Furthermore, our co-training strategy trains the dual encoder effectively and avoids unnecessary interference between the two encoders during training. Experimental results on several benchmarks show that SpaDE outperforms existing uni-encoder ranking models.
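To make the two roles concrete, here is a minimal sketch of how term weighting and term expansion could feed a pre-computed inverted index, so that a query needs only tokenization and lookups at search time. It is an illustration, not the SpaDE implementation: the weights are hand-written toy numbers rather than model outputs, and the function names, the `top_k` cutoff, and the max-based merge rule are all assumptions introduced for this example.

```python
from collections import defaultdict


def build_sparse_doc(term_weights, expansion_weights, top_k=2):
    """Merge re-weighted document terms with expanded terms (hypothetical rule).

    Assumption: keep only the top_k expanded terms and, when a term appears
    in both inputs, keep the larger of its two weights.
    """
    top_expansion = sorted(
        expansion_weights.items(), key=lambda kv: kv[1], reverse=True
    )[:top_k]
    doc = dict(term_weights)
    for term, weight in top_expansion:
        doc[term] = max(doc.get(term, 0.0), weight)
    # Drop zero-weight terms so the representation stays sparse.
    return {t: w for t, w in doc.items() if w > 0.0}


def build_inverted_index(docs):
    """Map each term to a posting list of (doc_id, weight) pairs."""
    index = defaultdict(list)
    for doc_id, vec in docs.items():
        for term, weight in vec.items():
            index[term].append((doc_id, weight))
    return index


def search(index, query):
    """Score documents by summing the weights of matching query terms.

    The query is only lowercased and split on whitespace; no neural
    inference happens at query time.
    """
    scores = defaultdict(float)
    for term in query.lower().split():
        for doc_id, weight in index.get(term, []):
            scores[doc_id] += weight
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Toy weights, written by hand for illustration only.
docs = {
    "d1": build_sparse_doc(
        {"car": 1.5, "engine": 0.75, "the": 0.0},  # (i) term weighting
        {"vehicle": 0.5, "automobile": 0.25},      # (ii) term expansion
    ),
    "d2": build_sparse_doc(
        {"bank": 1.0, "river": 1.0},
        {"water": 0.5},
    ),
}
index = build_inverted_index(docs)
results = search(index, "vehicle engine")
```

In this toy setup the query "vehicle engine" matches `d1` even though "vehicle" never occurs in the original document text, which is the vocabulary mismatch case that expansion is meant to cover. The zero weight on "the" shows how weighting can also prune uninformative terms from the index.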
