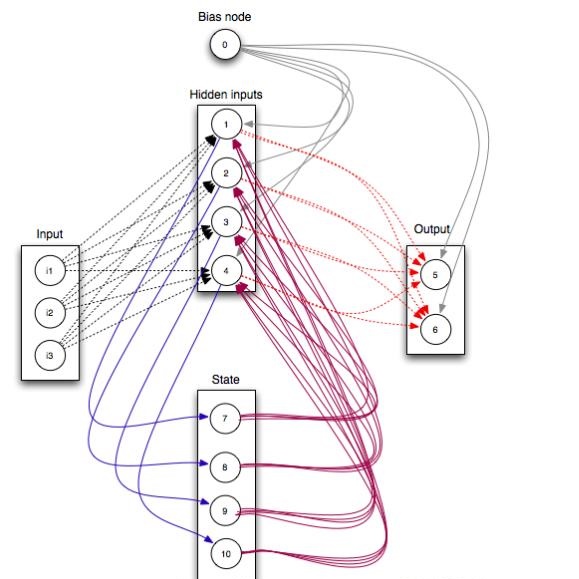

Restricted Boltzmann machines (RBMs) and deep Boltzmann machines (DBMs) are important models in machine learning and have recently found numerous applications in quantum many-body physics. We show that there are fundamental connections between these models and tensor networks. In particular, we demonstrate that any RBM or DBM can be exactly represented as a two-dimensional tensor network. This representation gives an understanding of the expressive power of RBMs and DBMs in terms of the entanglement structure of the tensor networks, and it also provides an efficient tensor network contraction algorithm for computing the partition function of RBMs and DBMs. Using numerical experiments, we demonstrate that the proposed algorithm is much more accurate than state-of-the-art machine learning methods in estimating the partition function of RBMs and DBMs, and that it has potential applications in training DBMs for general machine learning tasks.
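To make the quantity being estimated concrete, the following is a minimal sketch of the partition function of a tiny RBM. It is not the tensor network contraction algorithm described above. It assumes binary units in {0, 1} and the standard energy E(v, h) = -a·v - b·h - vᵀWh; the function names, parameter names, and model sizes are illustrative choices, not taken from the paper. The second function uses the well-known fact that the hidden units can be summed out analytically, which gives the same value as full enumeration.

```python
import itertools
import numpy as np


def rbm_partition_brute_force(a, b, W):
    """Z = sum over all binary (v, h) of exp(a.v + b.h + v.W.h).

    Cost grows as 2**(n_visible + n_hidden), so this only works for tiny models.
    """
    n_v, n_h = W.shape
    total = 0.0
    for v_bits in itertools.product([0, 1], repeat=n_v):
        v = np.array(v_bits, dtype=float)
        for h_bits in itertools.product([0, 1], repeat=n_h):
            h = np.array(h_bits, dtype=float)
            total += np.exp(a @ v + b @ h + v @ W @ h)
    return total


def rbm_partition_hidden_traced(a, b, W):
    """Same Z, with the hidden units summed out analytically.

    For fixed v, each hidden unit contributes a factor 1 + exp(b_j + (v.W)_j),
    so only the 2**n_visible visible configurations are enumerated.
    """
    n_v, _ = W.shape
    total = 0.0
    for v_bits in itertools.product([0, 1], repeat=n_v):
        v = np.array(v_bits, dtype=float)
        total += np.exp(a @ v) * np.prod(1.0 + np.exp(b + v @ W))
    return total


# Hypothetical small model: 4 visible units, 3 hidden units, random parameters.
rng = np.random.default_rng(0)
a = rng.normal(size=4)
b = rng.normal(size=3)
W = rng.normal(size=(4, 3))

z_brute = rbm_partition_brute_force(a, b, W)
z_traced = rbm_partition_hidden_traced(a, b, W)
print(z_brute, z_traced)
```

Both routines scale exponentially in the number of units, which is why approximate estimators or structured contraction schemes are needed for models of realistic size.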
