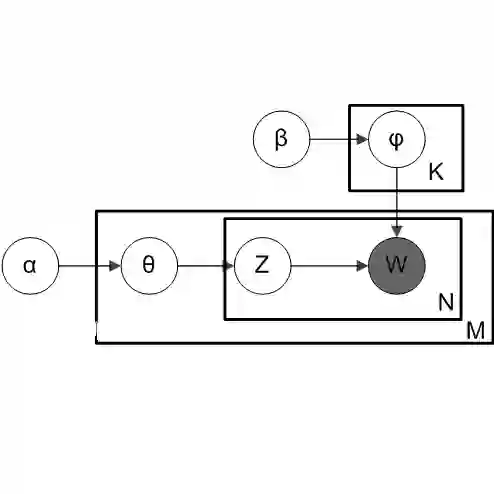

Discriminant analysis, including linear discriminant analysis (LDA) and quadratic discriminant analysis (QDA), is a popular approach to classification problems. It is well known that LDA is suboptimal for heteroscedastic data, for which QDA would be an ideal tool. However, QDA is less helpful when the number of features in a data set is moderate or high, where LDA and its variants often perform better because they are more robust to dimensionality. In this work, we introduce a new dimension reduction and classification method based on QDA. In particular, we define and estimate the optimal one-dimensional (1D) subspace for QDA, which yields a novel hybrid approach to discriminant analysis. The new method can handle data heteroscedasticity with a number of parameters equal to that of LDA. Therefore, it is more stable than standard QDA and works well for data in moderate dimensions. We show an estimation consistency property of our method, and compare it with LDA, QDA, regularized discriminant analysis (RDA), and a few other competitors on simulated and real data examples.
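To make the idea of "QDA in a 1D subspace" concrete, the following is a minimal sketch and not the authors' estimator. It assumes the projection direction `w` is already given (here a hypothetical, hand-picked unit vector), whereas the paper estimates the optimal direction, a step the abstract does not describe. The helper names `project`, `fit_1d_qda`, and `predict_1d_qda` and the toy data are illustrative assumptions. After projection, each class is modeled by a univariate Gaussian with its own mean and variance, and a point is assigned to the class with the larger Gaussian log-posterior score.

```python
import math
import random
import statistics


def project(x, w):
    """Project a feature vector x onto direction w (a plain dot product)."""
    return sum(xi * wi for xi, wi in zip(x, w))


def fit_1d_qda(X, y, w):
    """Fit one univariate Gaussian per class to the projected data."""
    params = {}
    for label in sorted(set(y)):
        z = [project(x, w) for x, t in zip(X, y) if t == label]
        params[label] = {
            "prior": len(z) / len(y),
            "mean": statistics.fmean(z),
            # Class-specific variance: this is what makes the rule quadratic.
            "var": statistics.variance(z),
        }
    return params


def predict_1d_qda(x, w, params):
    """Return the class with the largest Gaussian log-posterior score."""
    z = project(x, w)

    def score(p):
        return (math.log(p["prior"])
                - 0.5 * math.log(p["var"])
                - (z - p["mean"]) ** 2 / (2.0 * p["var"]))

    return max(params, key=lambda label: score(params[label]))


# Toy heteroscedastic data (an assumption for illustration only):
# class 0 has unit spread, class 1 has a shifted mean and a larger spread.
rng = random.Random(0)
p = 5
X0 = [[rng.gauss(0.0, 1.0) for _ in range(p)] for _ in range(200)]
X1 = [[rng.gauss(1.0, 3.0) for _ in range(p)] for _ in range(200)]
X = X0 + X1
y = [0] * 200 + [1] * 200

# Hypothetical direction: NOT the optimal subspace estimated in the paper.
w = [1.0 / math.sqrt(p)] * p

params = fit_1d_qda(X, y, w)
accuracy = sum(predict_1d_qda(x, w, params) == t for x, t in zip(X, y)) / len(y)
print(f"training accuracy along w: {accuracy:.2f}")
```

Because the two projected classes have different variances, the resulting decision rule is not a single threshold: points far from both means on either side go to the wider class, which a pooled-variance (LDA-style) rule along the same direction cannot express.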
