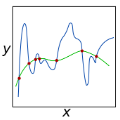

Knowledge distillation is the process of transferring knowledge from a large model to a small model. In this process, the small model learns the generalization ability of the large model and retains performance close to that of the large model. Knowledge distillation thus provides a training method for migrating knowledge between models, which facilitates model deployment and speeds up inference. However, previous distillation methods require pre-trained teacher models, which still incur computational and storage overhead. In this paper, we propose a novel general training framework called Self-Feature Regularization (SFR), which uses features in the deep layers to supervise feature learning in the shallow layers, thereby retaining more semantic information. Specifically, we first use an EMD-l2 loss to match local features, together with a many-to-one approach that distills features more intensively in the channel dimension. Dynamic label smoothing is then applied at the output layer to achieve better performance. Experiments further show the effectiveness of the proposed framework.
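The general idea of letting deep features supervise shallow features, with a smoothed target at the output layer, can be illustrated with a minimal NumPy sketch. It is not the SFR implementation, and everything in it is an assumption made for illustration: a plain L2 (mean squared error) loss stands in for the EMD-l2 loss, averaging consecutive groups of deep channels is only one possible reading of "many-to-one", the two feature maps are assumed to already share the same spatial size, and the smoothing factor `eps` is passed in by the caller because the abstract does not say how it is made dynamic. The function names are hypothetical.

```python
import numpy as np

def align_deep_to_shallow(deep, shallow_channels):
    """Reduce a deep feature map (C_d, H, W) to `shallow_channels` channels by
    averaging consecutive channel groups.
    Assumption: a hypothetical many-to-one mapping, not the paper's definition."""
    c_d, h, w = deep.shape
    assert c_d % shallow_channels == 0, "deep channels must divide evenly"
    grouped = deep.reshape(shallow_channels, c_d // shallow_channels, h, w)
    return grouped.mean(axis=1)

def feature_l2_loss(shallow, deep):
    """Mean squared error between shallow features (C_s, H, W) and the
    channel-aligned deep features, which act as the supervision target.
    Assumption: plain L2 is a stand-in for the EMD-l2 loss."""
    target = align_deep_to_shallow(deep, shallow.shape[0])
    return float(np.mean((shallow - target) ** 2))

def smoothed_cross_entropy(logits, label, eps):
    """Cross-entropy against a label-smoothed target.
    `eps` is the smoothing factor; its schedule is left to the caller."""
    k = logits.shape[0]
    target = np.full(k, eps / k)          # spread eps uniformly over all classes
    target[label] += 1.0 - eps            # keep the remaining mass on the true class
    shifted = logits - logits.max()       # numerically stable log-softmax
    log_probs = shifted - np.log(np.exp(shifted).sum())
    return float(-(target * log_probs).sum())

# Toy usage with random features: 8 deep channels supervise 4 shallow channels.
rng = np.random.default_rng(0)
shallow = rng.normal(size=(4, 8, 8))
deep = rng.normal(size=(8, 8, 8))
logits = rng.normal(size=10)
lam = 0.5  # hypothetical weight balancing the two terms
total = smoothed_cross_entropy(logits, label=3, eps=0.1) + lam * feature_l2_loss(shallow, deep)
```

In a real training loop the deep features would normally be treated as a fixed target (no gradient flowing back through them) so that only the shallow layers are pulled toward the deep representation; that choice is likewise an assumption of this sketch.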
