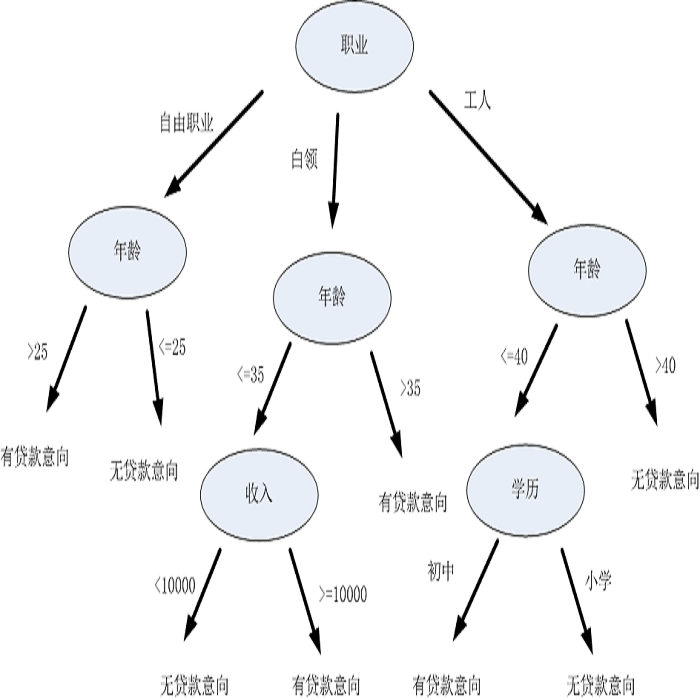

Recent advances and applications of language technology and artificial intelligence have enabled much success across multiple domains such as law, medicine, and mental health. AI-based language models have recently been proposed for legal tasks such as judgment prediction. However, these models are rife with social biases encoded from their training data. While bias and fairness have been studied across NLP, most of these studies are situated primarily within a Western context. In this work, we present an initial investigation of fairness in the legal domain from an Indian perspective. We highlight the propagation of learnt algorithmic biases in the bail prediction task for models trained on Hindi legal documents. We evaluate the fairness gap using demographic parity and show that a decision tree model trained for bail prediction has an overall fairness disparity of 0.237 between input features associated with Hindus and Muslims. Additionally, we highlight the need for further research on fairness and bias in applying AI in the legal sector, with a specific focus on the Indian context.
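Demographic parity compares how often a model produces the favourable outcome (here, granting bail) for each demographic group. The sketch below is a minimal illustration, assuming the gap is the absolute difference in predicted bail-grant rates between two groups; the paper's exact aggregation behind its "overall" disparity may differ. The function names and the toy data are hypothetical and are not taken from the paper.

```python
from typing import Sequence


def positive_rate(predictions: Sequence[int], groups: Sequence[str], group: str) -> float:
    """Fraction of cases in `group` for which the model predicts 1 (bail granted)."""
    selected = [p for p, g in zip(predictions, groups) if g == group]
    if not selected:
        raise ValueError(f"no cases for group {group!r}")
    return sum(selected) / len(selected)


def demographic_parity_gap(
    predictions: Sequence[int], groups: Sequence[str], group_a: str, group_b: str
) -> float:
    """Absolute difference in positive-prediction rates between two groups.

    Assumption: 0 means parity; larger values mean a larger fairness gap.
    """
    rate_a = positive_rate(predictions, groups, group_a)
    rate_b = positive_rate(predictions, groups, group_b)
    return abs(rate_a - rate_b)


if __name__ == "__main__":
    # Hypothetical toy predictions (1 = bail granted, 0 = bail denied); not data from the paper.
    preds = [1, 1, 0, 1, 0, 1, 0, 0]
    groups = ["hindu"] * 4 + ["muslim"] * 4
    gap = demographic_parity_gap(preds, groups, "hindu", "muslim")
    print(f"demographic parity gap: {gap:.3f}")  # 0.75 - 0.25 -> 0.500
```

In this toy example, bail is predicted for 3 of 4 cases in one group and 1 of 4 in the other, giving a gap of 0.5. A perfectly parity-satisfying model would score 0, so the reported 0.237 indicates a substantial difference in predicted outcomes between the two groups.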

翻译:最近语言技术和人工智能的进步和应用在法律、医疗和精神健康等多个领域取得了很大成功。最近为法律部门提出了基于AI的语文模型,如判决书预测,但是,这些模型与培训数据中摘取的编码社会偏见有冲突。虽然对全国劳工局的偏向和公平性进行了研究,但多数研究主要位于西方范围内。在这项工作中,我们从印度的角度对法律领域的公平性进行了初步调查。我们强调在印地法律文件培训模型的保释预测任务中传播了所学的算法偏见。我们利用人口均等评估了公平差距,并表明为保释预测任务培训的决策树模型在与印度教徒和穆斯林相关的投入特征之间总体上存在0.237的公平性差异。此外,我们强调需要进一步研究在法律部门应用大赦国际的公平/偏见途径,并特别关注印度的情况。</s>