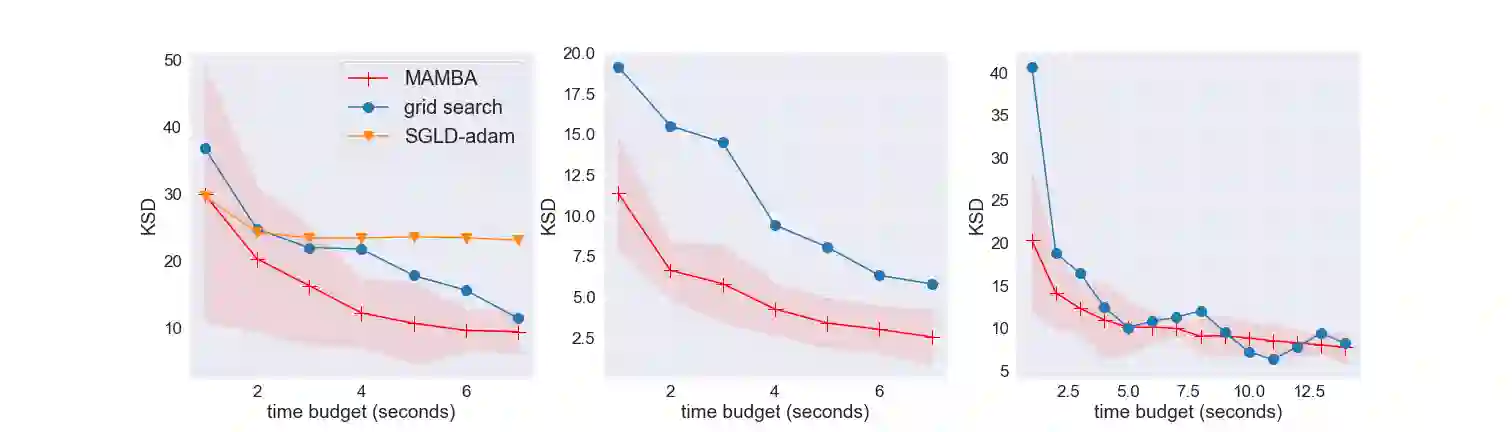

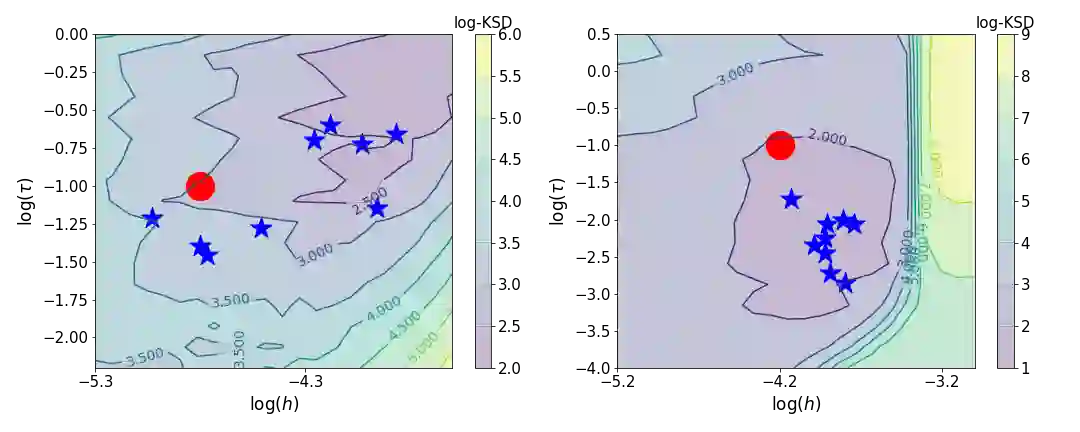

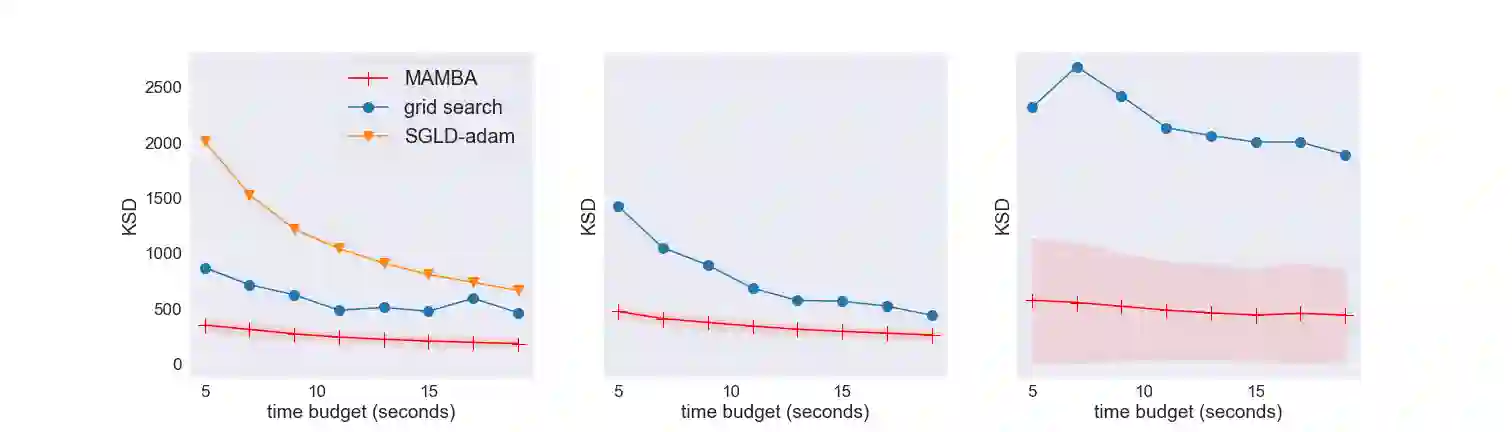

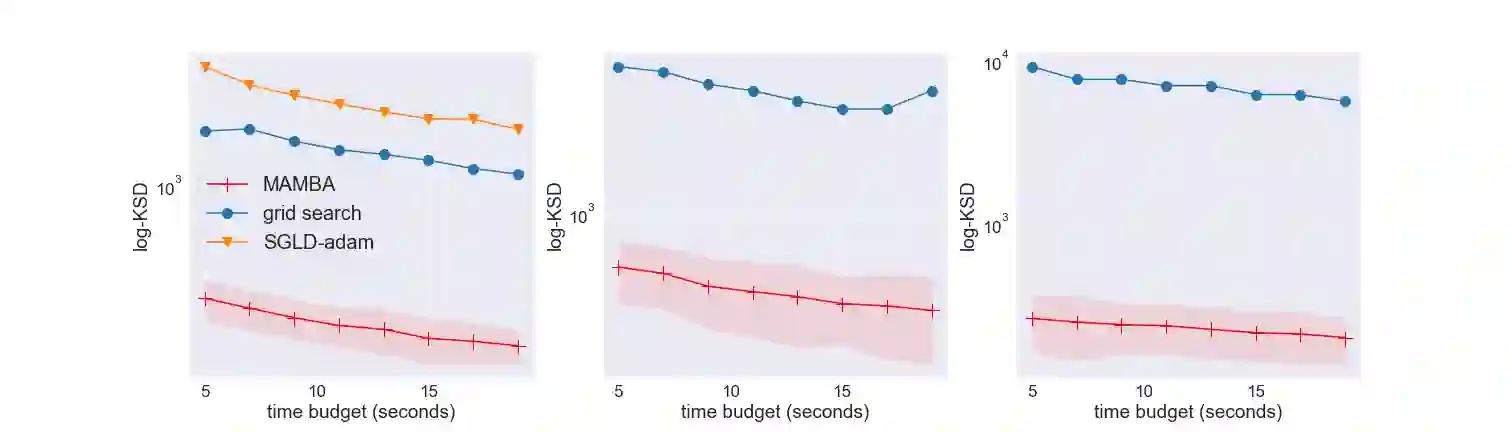

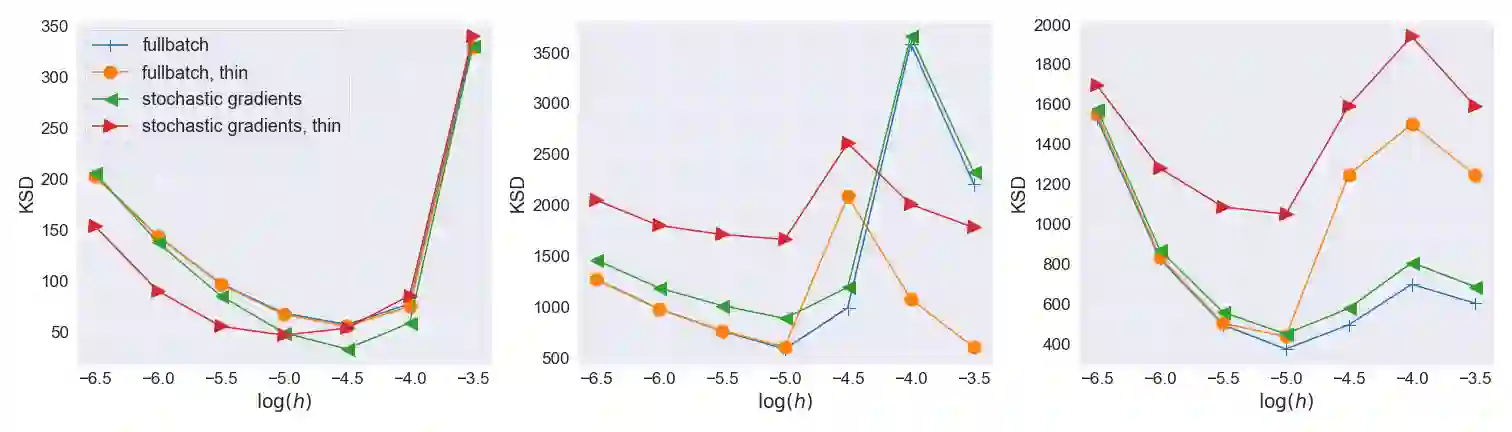

Stochastic gradient Markov chain Monte Carlo (SGMCMC) is a popular class of algorithms for scalable Bayesian inference. However, these algorithms include hyperparameters, such as step size or batch size, that influence the accuracy of estimators based on the obtained samples. As a result, these hyperparameters must be tuned by the practitioner, and no principled, automated way to tune them currently exists. Standard MCMC tuning methods based on acceptance rates cannot be used for SGMCMC, so alternative tools and diagnostics are required. We propose a novel bandit-based algorithm that tunes SGMCMC hyperparameters to maximize the accuracy of the posterior approximation by minimizing the kernel Stein discrepancy (KSD). We provide theoretical results supporting this approach and assess alternative metrics to KSD. We support our results with experiments on both simulated and real datasets, and find that this method is practical for a wide range of application areas.
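To make the idea of KSD-based tuning concrete, the following is a minimal sketch, not the algorithm proposed in the paper. The abstract does not specify the bandit strategy, the kernel, or the sampler, so everything here is an assumption chosen for illustration: a plain grid search stands in for the bandit procedure, the sampler is stochastic gradient Langevin dynamics (SGLD), the model is a one-dimensional Gaussian mean with a Gaussian prior, the KSD uses an inverse multiquadric (IMQ) base kernel with the full-data score, and all function names (`imq_stein_kernel`, `ksd`, `sgld`, `tune_step_size`) are hypothetical.

```python
import math
import random


def imq_stein_kernel(x, y, sx, sy, c=1.0, beta=-0.5):
    """1D Langevin Stein kernel for the IMQ base kernel (c^2 + (x - y)^2)^beta."""
    r = x - y
    u = c * c + r * r
    k = u ** beta
    dk_dx = 2.0 * beta * r * u ** (beta - 1.0)
    dk_dy = -dk_dx
    d2k = (-2.0 * beta * u ** (beta - 1.0)
           - 4.0 * beta * (beta - 1.0) * r * r * u ** (beta - 2.0))
    return d2k + sx * dk_dy + sy * dk_dx + sx * sy * k


def ksd(samples, score):
    """V-statistic estimate of the KSD; cost is quadratic in len(samples)."""
    scores = [score(x) for x in samples]
    n = len(samples)
    total = 0.0
    for i in range(n):
        for j in range(n):
            total += imq_stein_kernel(samples[i], samples[j], scores[i], scores[j])
    return math.sqrt(max(total, 0.0)) / n


def sgld(data, step_size, batch_size, n_iter, rng, prior_var=100.0):
    """SGLD for the mean of N(theta, 1) data under a N(0, prior_var) prior."""
    n_data = len(data)
    theta = 0.0
    chain = []
    for _ in range(n_iter):
        batch = rng.sample(data, batch_size)
        # Unbiased minibatch estimate of the gradient of the log posterior.
        grad = -theta / prior_var + (n_data / batch_size) * sum(y - theta for y in batch)
        theta += 0.5 * step_size * grad + math.sqrt(step_size) * rng.gauss(0.0, 1.0)
        chain.append(theta)
    return chain


def tune_step_size(data, step_sizes, batch_size=10, n_iter=2000, n_keep=200,
                   seed=0, prior_var=100.0):
    """Grid search (assumed stand-in for a bandit): keep the step size with lowest KSD."""
    n_data, sum_y = len(data), sum(data)

    def score(theta):
        # Full-data score of the posterior, used only to evaluate the KSD.
        return -theta / prior_var + sum_y - n_data * theta

    results = {}
    for h in step_sizes:
        rng = random.Random(seed)
        chain = sgld(data, h, batch_size, n_iter, rng, prior_var)
        thinned = chain[:: n_iter // n_keep]  # thin to keep the KSD cost manageable
        results[h] = ksd(thinned, score)
    best = min(results, key=results.get)
    return best, results


if __name__ == "__main__":
    rng = random.Random(42)
    data = [rng.gauss(1.0, 1.0) for _ in range(100)]
    best, results = tune_step_size(data, [1e-4, 1e-3, 1e-2])
    for h, value in results.items():
        print(f"step size {h:g}: KSD = {value:.4f}")
    print("selected step size:", best)
```

Unlike an acceptance rate, the KSD can be computed from the samples and the score function alone, which is why it remains usable for samplers such as SGLD that have no accept/reject step. A bandit procedure would avoid running every configuration for the full budget, which this grid search does not attempt.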
