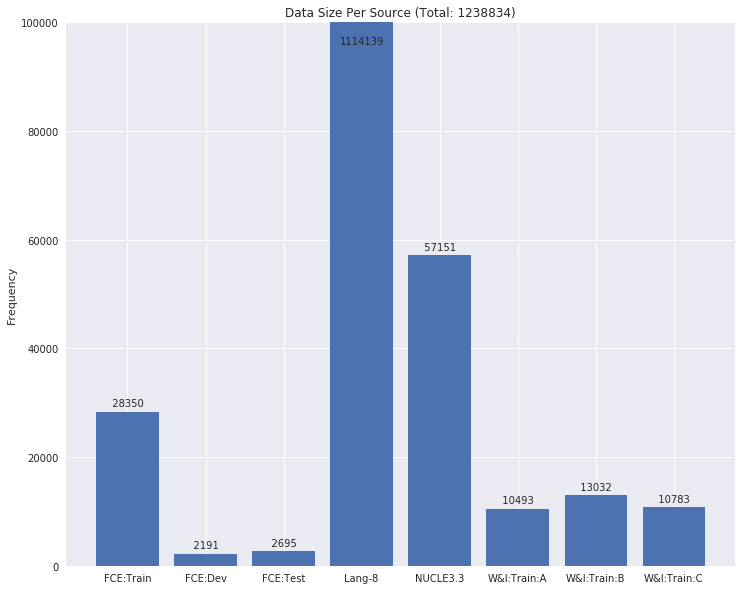

Grammatical error correction can be viewed as a low-resource sequence-to-sequence task, because publicly available parallel corpora are limited. To tackle this challenge, we first generate erroneous versions of large unannotated corpora using a realistic noising function. The resulting parallel corpora are then used to pre-train Transformer models. Next, by sequentially applying transfer learning, we adapt these models to the domain and style of the test set. Combined with a context-aware neural spellchecker, our system achieves competitive results in both the restricted and low-resource tracks of the ACL 2019 BEA Shared Task. We release all of our code and materials for reproducibility.
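To make the idea of generating erroneous text from clean text concrete, here is a minimal sketch of a token-level noising function. It is not the noising function used in this work, whose details the abstract does not give. The confusion sets, the probabilities, and the names `CONFUSIONS`, `add_noise`, and `make_parallel_pair` are all hypothetical and chosen only for illustration.

```python
import random

# Hypothetical confusion sets (assumption): words that learners often mix up.
CONFUSIONS = {
    "a": ["an", "the"],
    "an": ["a", "the"],
    "the": ["a", "an"],
    "in": ["on", "at"],
    "on": ["in", "at"],
    "at": ["in", "on"],
    "is": ["are"],
    "are": ["is"],
}


def add_noise(tokens, rng, p_confuse=0.3, p_drop=0.05, p_swap=0.05):
    """Return a corrupted copy of `tokens` (all probabilities are assumptions)."""
    noisy = []
    for tok in tokens:
        # Deletion error: skip the token entirely.
        if rng.random() < p_drop:
            continue
        # Substitution error: replace the token with a confusable alternative.
        options = CONFUSIONS.get(tok.lower())
        if options and rng.random() < p_confuse:
            tok = rng.choice(options)
        noisy.append(tok)

    # Word-order error: swap adjacent tokens, never reusing a swapped token.
    i = 0
    while i < len(noisy) - 1:
        if rng.random() < p_swap:
            noisy[i], noisy[i + 1] = noisy[i + 1], noisy[i]
            i += 2
        else:
            i += 1
    return noisy


def make_parallel_pair(sentence, rng):
    """Build one (erroneous source, clean target) training pair."""
    noisy_tokens = add_noise(sentence.split(), rng)
    return " ".join(noisy_tokens), sentence


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the corruption is repeatable
    source, target = make_parallel_pair("The cat is sitting on the mat", rng)
    print(source, "->", target)
```

Applying such a function to every sentence of an unannotated corpus yields (noisy, clean) pairs in the same format as a human-annotated parallel corpus, which is what allows them to be used for sequence-to-sequence pre-training.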
