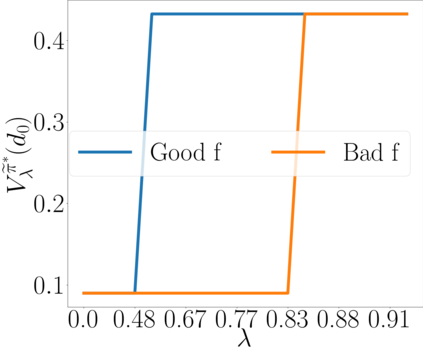

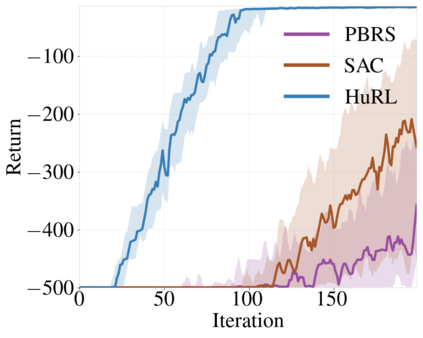

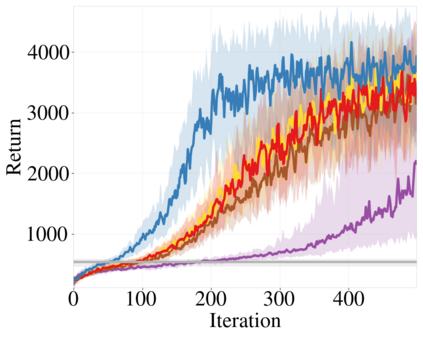

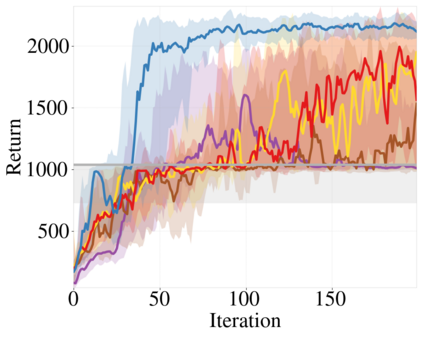

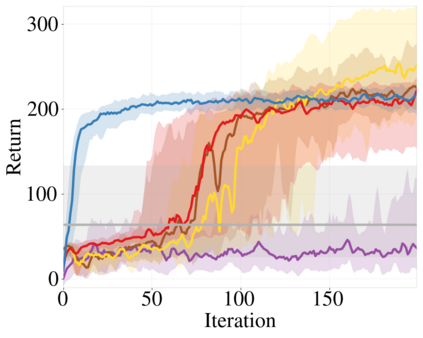

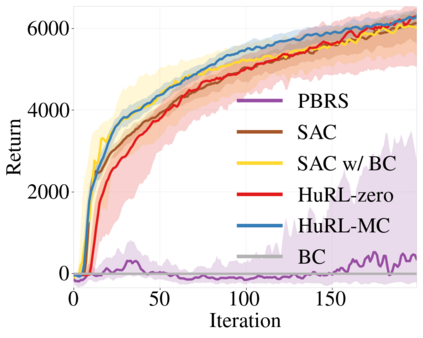

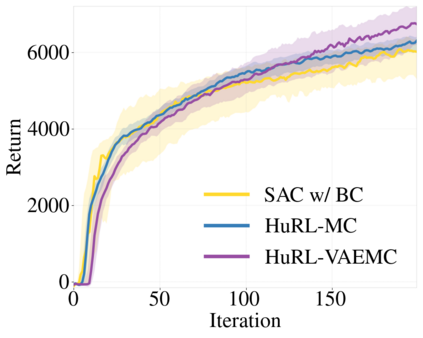

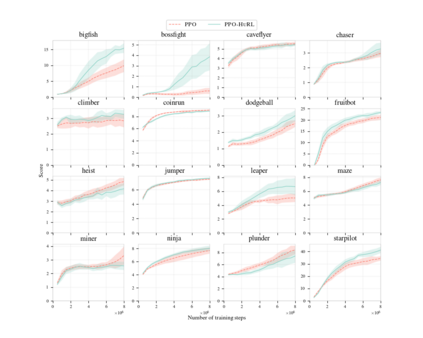

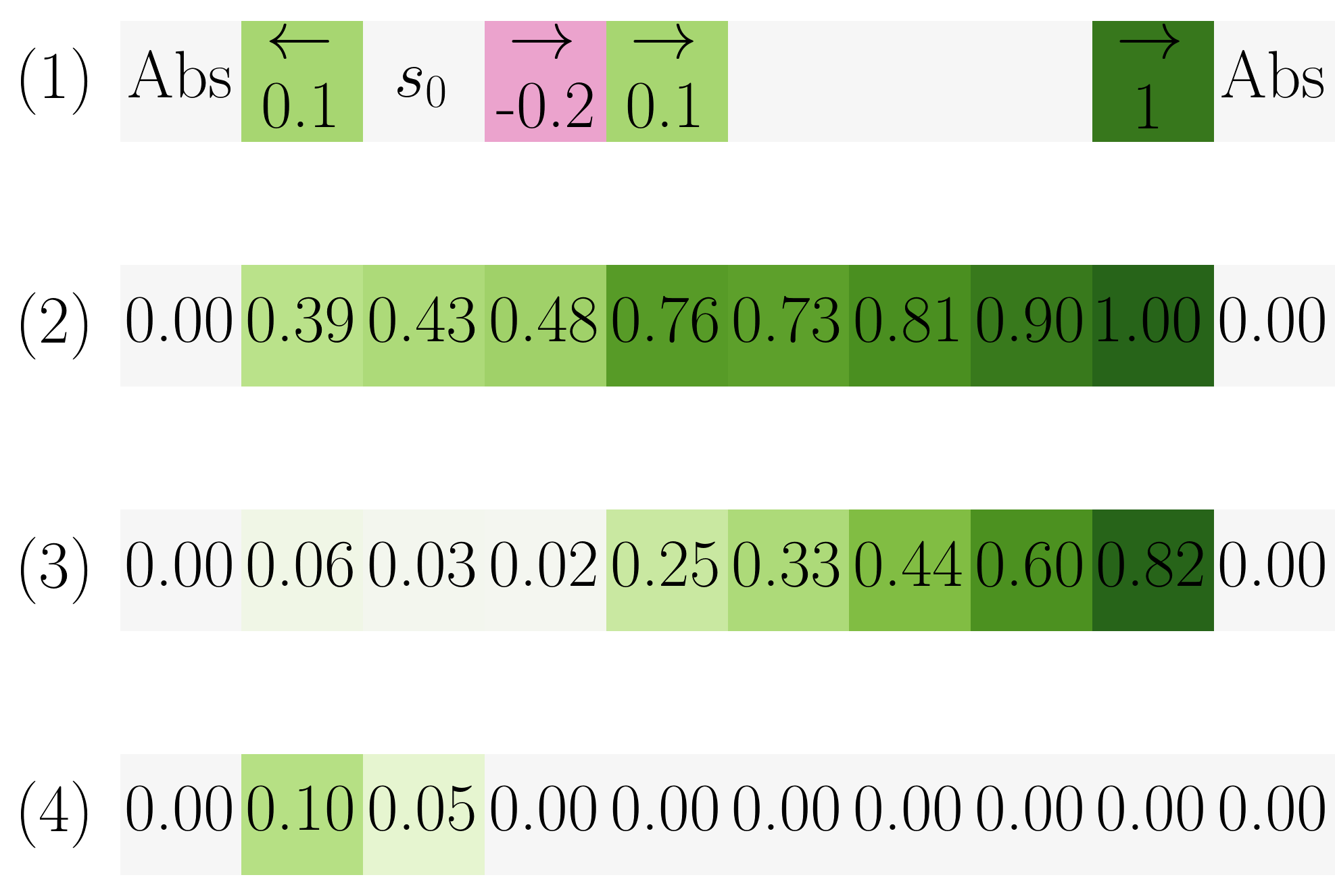

We provide a framework for accelerating reinforcement learning (RL) algorithms by heuristics constructed from domain knowledge or offline data. Tabula rasa RL algorithms require environment interactions or computation that scales with the horizon of the sequential decision-making task. Using our framework, we show how heuristic-guided RL induces a much shorter-horizon subproblem that provably solves the original task. Our framework can be viewed as a horizon-based regularization for controlling bias and variance in RL under a finite interaction budget. On the theoretical side, we characterize properties of a good heuristic and its impact on RL acceleration. In particular, we introduce the novel concept of an improvable heuristic, a heuristic that allows an RL agent to extrapolate beyond its prior knowledge. On the empirical side, we instantiate our framework to accelerate several state-of-the-art algorithms in simulated robotic control tasks and procedurally generated games. Our framework complements the rich literature on warm-starting RL with expert demonstrations or exploratory datasets, and introduces a principled method for injecting prior knowledge into RL.
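
To make the "shorter-horizon subproblem" concrete, here is a minimal tabular sketch. It rests on an assumption that the abstract does not spell out: that a heuristic `h` is mixed into the reward as `r + (1 - lam) * gamma * h(s')` while the discount shrinks to `lam * gamma`. The chain MDP, the function names, and the mixing coefficient `lam` are all hypothetical and chosen only for illustration.

```python
import numpy as np

def value_iteration(reward, next_state, gamma, n_iters):
    """Tabular value iteration for a deterministic MDP.

    reward[s, a] and next_state[s, a] both have shape (S, A).
    Returns the value estimate and its greedy policy.
    """
    values = np.zeros(reward.shape[0])
    for _ in range(n_iters):
        q = reward + gamma * values[next_state]
        values = q.max(axis=1)
    q = reward + gamma * values[next_state]
    return values, q.argmax(axis=1)

def reshape_with_heuristic(reward, next_state, gamma, heuristic, lam):
    """Assumed reshaping: blend the heuristic into the reward and shorten the discount."""
    shaped_reward = reward + (1.0 - lam) * gamma * heuristic[next_state]
    return shaped_reward, lam * gamma

def make_chain(n_states):
    """Hypothetical chain: action 0 moves left, action 1 moves right.

    The last state is an absorbing goal; stepping into it pays reward 1.
    """
    states = np.arange(n_states)
    left = np.maximum(states - 1, 0)
    right = np.minimum(states + 1, n_states - 1)
    next_state = np.stack([left, right], axis=1)
    goal = n_states - 1
    next_state[goal] = goal          # goal is absorbing for both actions
    reward = np.zeros((n_states, 2))
    reward[goal - 1, 1] = 1.0        # reward for entering the goal
    return reward, next_state

reward, next_state = make_chain(20)
gamma = 0.99

# Long-horizon reference solution of the original task.
v_star, pi_star = value_iteration(reward, next_state, gamma, n_iters=200)

# Use the reference values as an idealized heuristic and solve the
# reshaped problem with a much smaller discount factor.
shaped_reward, short_gamma = reshape_with_heuristic(
    reward, next_state, gamma, heuristic=v_star, lam=0.5
)
v_short, pi_short = value_iteration(
    shaped_reward, next_state, short_gamma, n_iters=60
)

print("discount: original", gamma, "reshaped", short_gamma)
print("policies agree on non-goal states:",
      np.array_equal(pi_short[:-1], pi_star[:-1]))
```

In this sketch the heuristic is exact, so the reshaped problem has the same optimal values as the original task even though its effective horizon, `1 / (1 - discount)`, drops from 100 to roughly 2. With an imperfect heuristic, a smaller `lam` would lean harder on the heuristic and introduce bias, which is the bias-variance trade-off the abstract refers to.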
