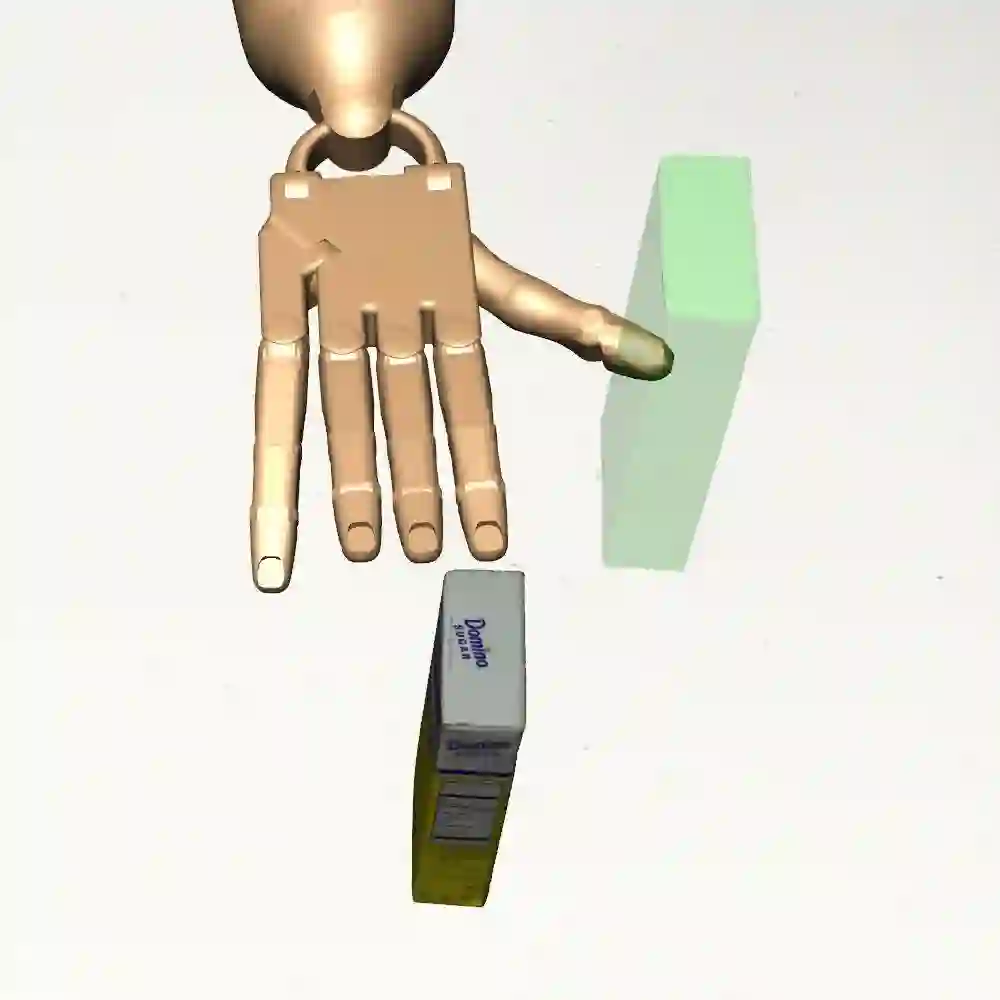

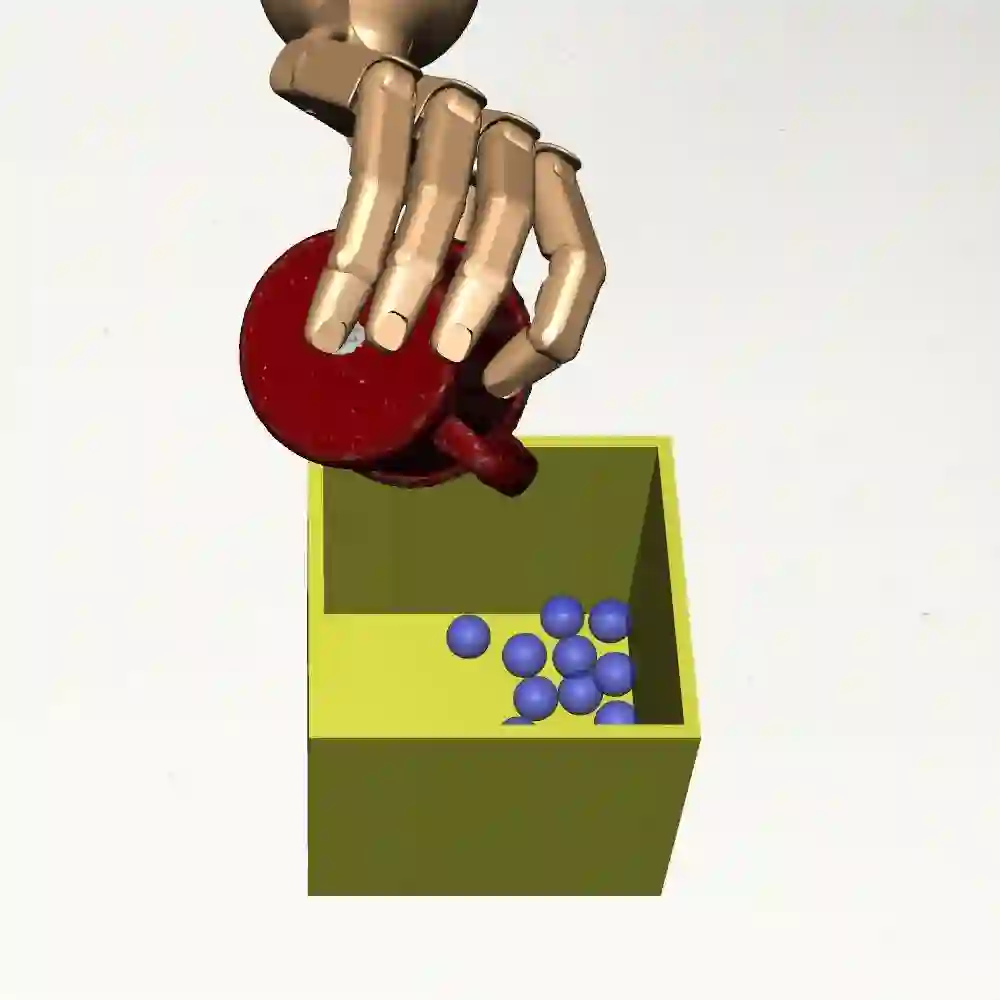

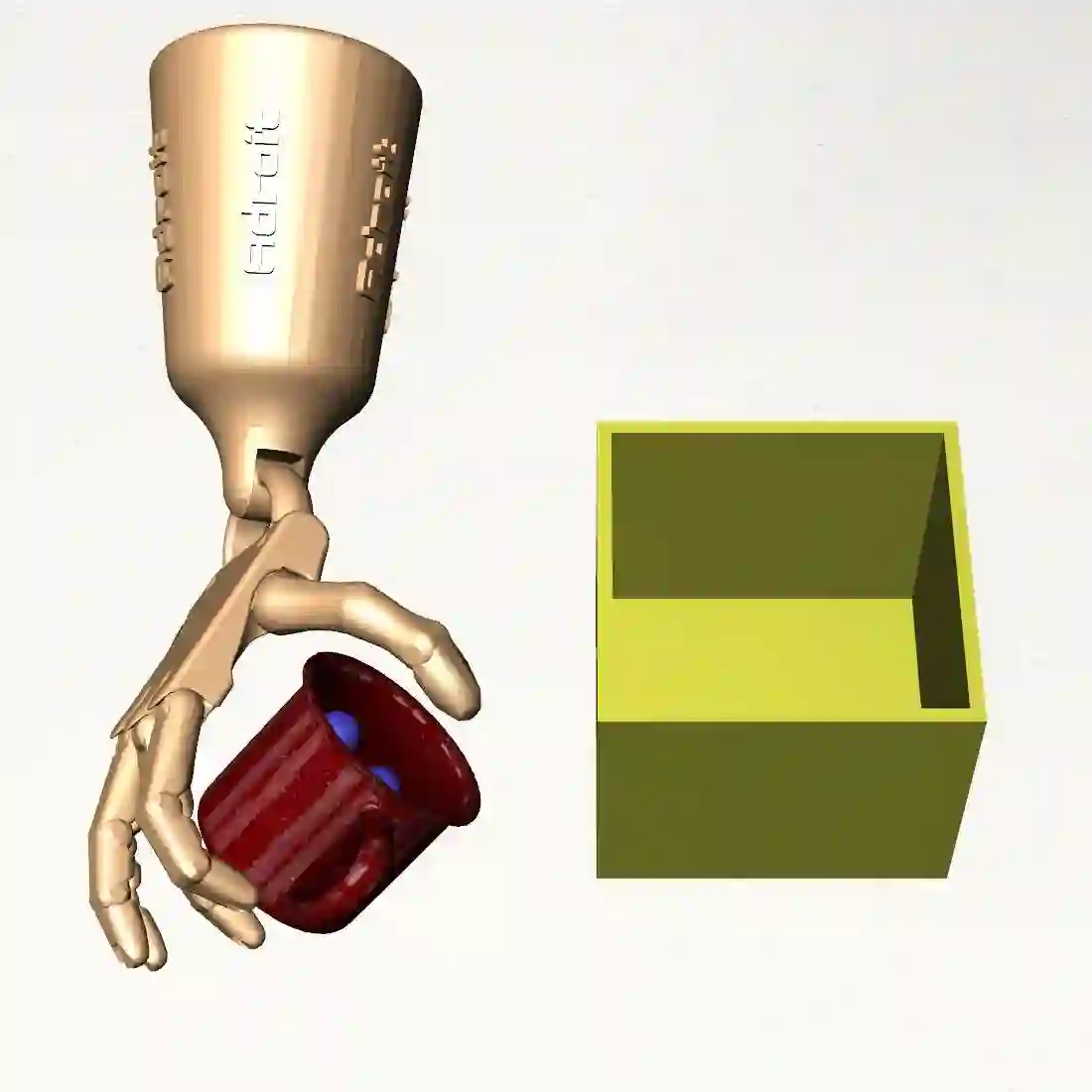

While significant progress has been made on understanding hand-object interactions in computer vision, it is still very challenging for robots to perform complex dexterous manipulation. In this paper, we propose a new platform and pipeline, DexMV (Dexterous Manipulation from Videos), for imitation learning. We design a platform with (i) a simulation system for complex dexterous manipulation tasks with a multi-finger robot hand and (ii) a computer vision system to record large-scale demonstrations of a human hand performing the same tasks. In our novel pipeline, we extract 3D hand and object poses from videos and propose a demonstration translation method that converts human motion into robot demonstrations. We then apply and benchmark multiple imitation learning algorithms with these demonstrations. We show that the demonstrations improve robot learning by a large margin and solve complex tasks that reinforcement learning alone cannot solve. More details can be found on the project page: https://yzqin.github.io/dexmv