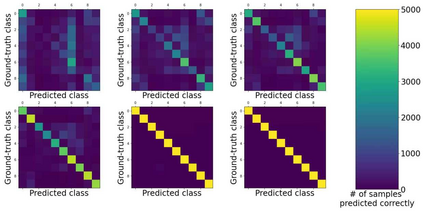

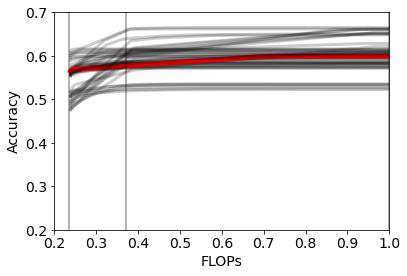

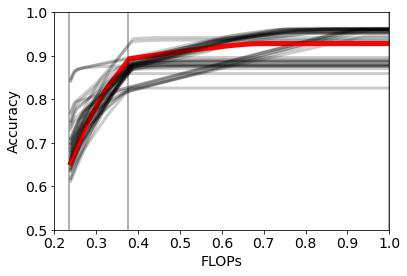

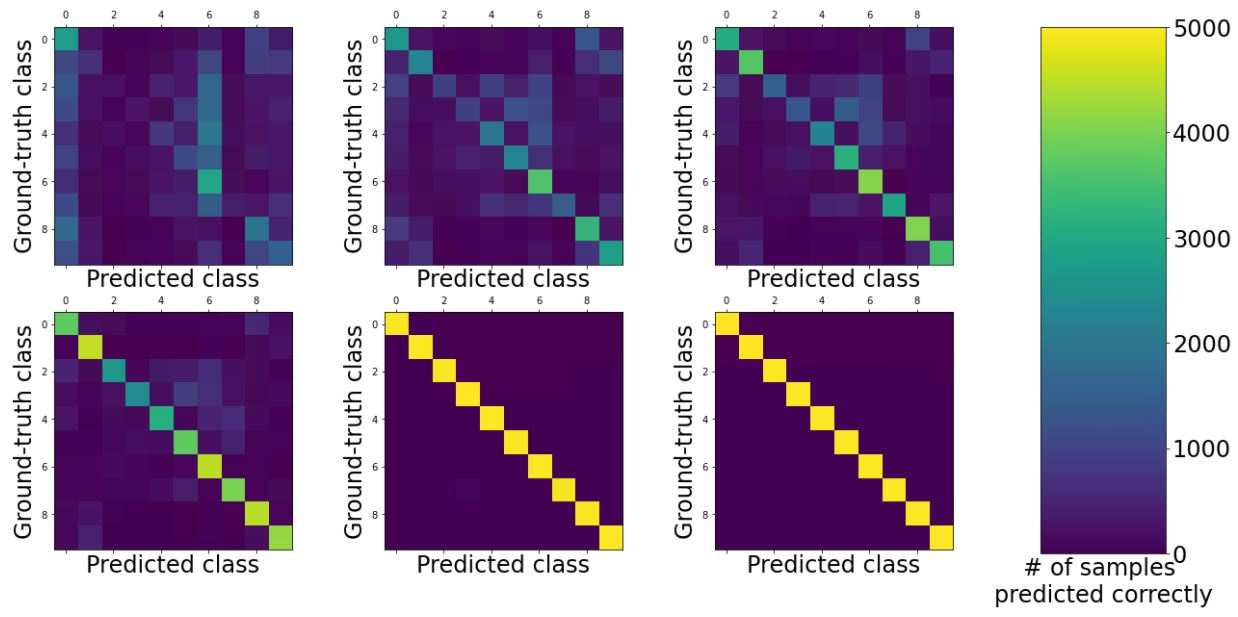

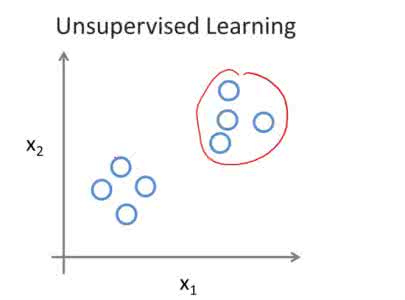

State-of-the-art neural networks with early exit mechanisms often need a considerable amount of training and fine-tuning to achieve good performance at low computational cost. We propose a novel early exit technique, Early Exit Class Means (E$^2$CM), based on class means of samples. Unlike most existing schemes, E$^2$CM does not require gradient-based training of internal classifiers, and it does not modify the base network in any way. This makes it particularly useful for neural network training on low-power devices, as in wireless edge networks. We evaluate the performance and overheads of E$^2$CM on base neural networks such as MobileNetV3, EfficientNet, and ResNet, and on datasets such as CIFAR-100, ImageNet, and KMNIST. Our results show that, given a fixed training time budget, E$^2$CM achieves higher accuracy than existing early exit mechanisms. Moreover, if there is no limit on the training time budget, E$^2$CM can be combined with an existing early exit scheme to boost the latter's performance, achieving a better trade-off between computational cost and network accuracy. We also show that E$^2$CM can be used to decrease the computational cost in unsupervised learning tasks.
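
The abstract states only that the exits are built from class means and need no gradient-based training; it does not spell out the exit rule. The following is therefore a minimal sketch under stated assumptions, not the authors' implementation: features from one intermediate layer are averaged per class, and a sample exits when a softmax over negative Euclidean distances to those means is confident enough. The function names `class_means` and `nearest_mean_exit`, the distance metric, and the `threshold` parameter are all hypothetical.

```python
import math


def class_means(features, labels):
    """Average the feature vectors of each class (no gradient steps involved)."""
    sums, counts = {}, {}
    for vec, label in zip(features, labels):
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, value in enumerate(vec):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {c: [s / counts[c] for s in acc] for c, acc in sums.items()}


def nearest_mean_exit(vec, means, threshold):
    """Return (predicted_class, should_exit) for one intermediate feature vector.

    Assumed rule (not taken from the source): softmax over negative Euclidean
    distances to the class means; exit when the top probability >= threshold.
    """
    dists = {c: math.dist(vec, m) for c, m in means.items()}
    d_min = min(dists.values())
    # Subtract the smallest distance before exponentiating for numerical stability.
    weights = {c: math.exp(-(d - d_min)) for c, d in dists.items()}
    best = min(dists, key=dists.get)
    confidence = weights[best] / sum(weights.values())
    return best, confidence >= threshold


# Toy layer features: two classes, two training samples each.
train_features = [[0.0, 0.0], [0.0, 2.0], [10.0, 10.0], [10.0, 12.0]]
train_labels = [0, 0, 1, 1]
means = class_means(train_features, train_labels)

# A sample close to one mean can exit; one midway between the means cannot.
near_pred, near_exit = nearest_mean_exit([0.5, 1.0], means, threshold=0.9)
_, mid_exit = nearest_mean_exit([5.0, 6.0], means, threshold=0.9)
```

In a full pipeline, a sample that does not exit would simply continue through the unmodified base network to the next candidate layer, which is consistent with the claim that the base network is left untouched.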
