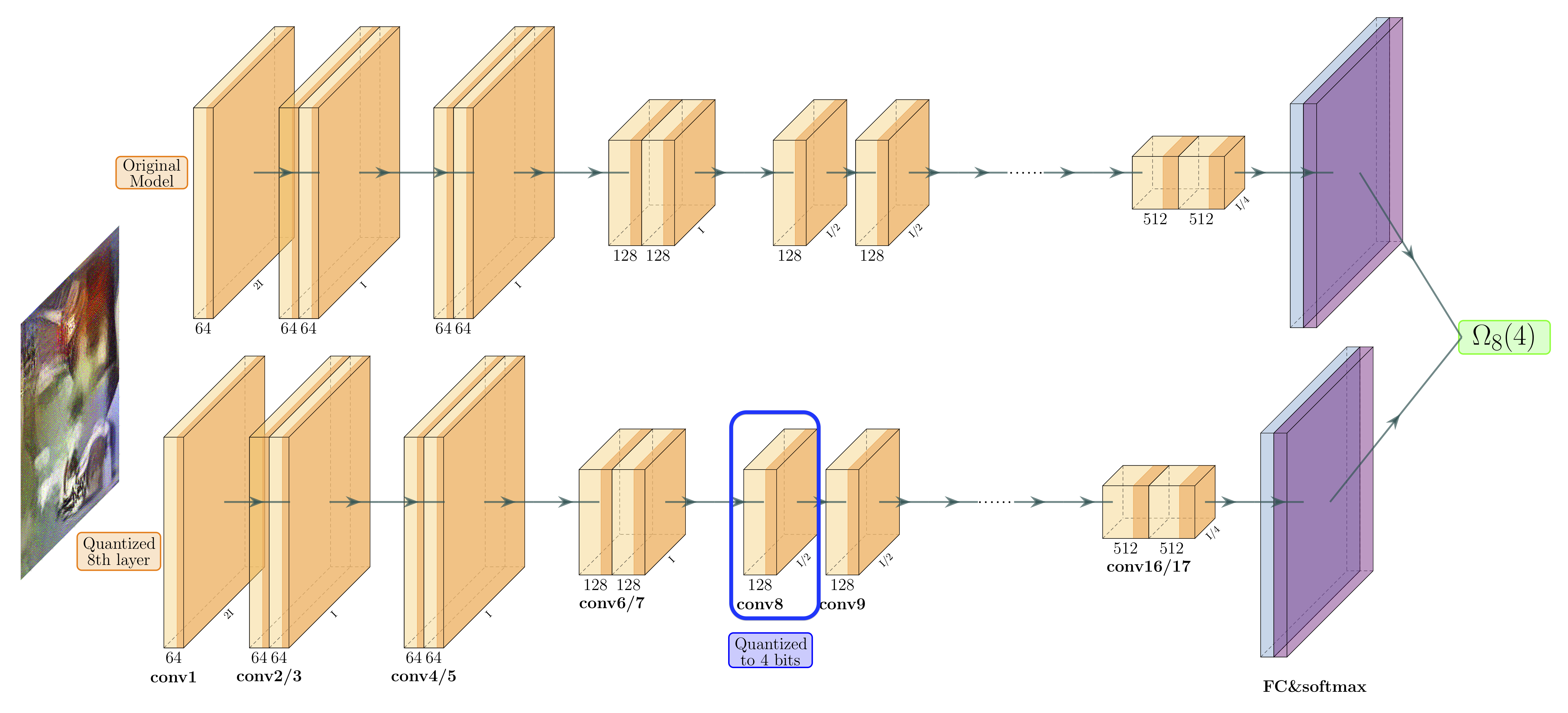

Quantization is a promising approach for reducing the inference time and memory footprint of neural networks. However, most existing quantization methods require access to the original training dataset for retraining during quantization. This is often not possible for applications with sensitive or proprietary data, e.g., due to privacy and security concerns. Existing zero-shot quantization methods use different heuristics to address this, but they result in poor performance, especially when quantizing to ultra-low precision. Here, we propose ZeroQ, a novel zero-shot quantization framework to address this. ZeroQ enables mixed-precision quantization without any access to the training or validation data. This is achieved by optimizing for a Distilled Dataset, which is engineered to match the statistics of batch normalization across different layers of the network. ZeroQ supports both uniform and mixed-precision quantization. For the latter, we introduce a novel Pareto-frontier-based method to automatically determine the mixed-precision bit setting for all layers, with no manual search involved. We extensively test our proposed method on a diverse set of models, including ResNet18/50/152, MobileNetV2, ShuffleNet, SqueezeNext, and InceptionV3 on ImageNet, as well as RetinaNet-ResNet50 on the Microsoft COCO dataset. In particular, we show that ZeroQ can achieve 1.71% higher accuracy on MobileNetV2, as compared to the recently proposed DFQ method. Importantly, ZeroQ has a very low computational overhead, and it can finish the entire quantization process in less than 30s (0.5% of one epoch training time of ResNet50 on ImageNet). We have open-sourced the ZeroQ framework (https://github.com/amirgholami/ZeroQ).
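
The abstract does not spell out how the Pareto frontier is computed, so the following is only a rough illustration of the general idea, not ZeroQ's actual procedure. It is a minimal Python sketch that assumes each layer already has a precomputed sensitivity score per bit width and that these scores simply add across layers. The names `pareto_frontier`, `best_under_budget`, `param_counts`, and `sensitivity`, as well as the toy numbers, are hypothetical, and the brute-force enumeration is chosen for clarity rather than efficiency.

```python
from itertools import product


def pareto_frontier(param_counts, sensitivity, bit_choices=(2, 4, 8)):
    """Return Pareto-optimal (size_in_bits, total_cost, bit_config) tuples.

    param_counts: number of weights in each layer.
    sensitivity:  sensitivity[i][b] is a hypothetical cost of quantizing
                  layer i to b bits (lower is better); assumed additive.
    """
    candidates = []
    # Brute force: enumerate every per-layer bit assignment.
    for config in product(bit_choices, repeat=len(param_counts)):
        size = sum(n * b for n, b in zip(param_counts, config))
        cost = sum(sensitivity[i][b] for i, b in enumerate(config))
        candidates.append((size, cost, config))

    # Walk candidates from smallest to largest model size and keep a
    # configuration only if it is strictly cheaper than every smaller one.
    candidates.sort(key=lambda c: (c[0], c[1]))
    frontier, best_cost = [], float("inf")
    for size, cost, config in candidates:
        if cost < best_cost:
            frontier.append((size, cost, config))
            best_cost = cost
    return frontier


def best_under_budget(frontier, budget_bits):
    """Pick the lowest-cost frontier point whose size fits the budget."""
    feasible = [point for point in frontier if point[0] <= budget_bits]
    return feasible[-1] if feasible else None


# Toy example: a small, sensitive layer and a large, robust layer.
param_counts = [100, 1000]
sensitivity = [
    {2: 8.0, 4: 2.0, 8: 0.5},
    {2: 1.0, 4: 0.4, 8: 0.1},
]

frontier = pareto_frontier(param_counts, sensitivity)
for size, cost, config in frontier:
    print(size, config)
```

In this toy setting the frontier keeps the small, sensitive layer at high precision before spending bits on the large layer, which is the kind of trade-off an automatic mixed-precision search is meant to surface without manual tuning.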

Related content

- Today (iOS and OS X): widgets for the Today view of Notification Center

- Share (iOS and OS X): post content to web services or share content with others

- Actions (iOS and OS X): app extensions to view or manipulate content inside another app

- Photo Editing (iOS): edit a photo or video in Apple's Photos app with extensions from third-party apps

- Finder Sync (OS X): remote file storage in the Finder with support for Finder content annotation

- Storage Provider (iOS): an interface between files inside an app and other apps on a user's device

- Custom Keyboard (iOS): system-wide alternative keyboards

Source: iOS 8 Extensions: Apple’s Plan for a Powerful App Ecosystem