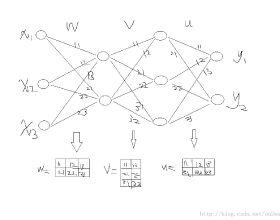

The intersection of neuroscience and deep learning has benefited both fields for several decades, helping both to understand how learning works in the brain and to achieve state-of-the-art performance on different AI benchmarks. Backpropagation (BP) is the most widely adopted method for training artificial neural networks; however, it is often criticized for its biological implausibility (e.g., its lack of local update rules for the parameters). Biologically plausible learning methods that rely on predictive coding (a framework for describing information processing in the brain), such as inference learning (IL), are therefore increasingly studied. Recent work proves that IL can approximate BP up to a certain margin on multilayer perceptrons (MLPs), and asymptotically on any other complex model, and that zero-divergence inference learning (Z-IL), a variant of IL, can exactly implement BP on MLPs. However, the recent literature also shows that no biologically plausible method can yet exactly replicate the weight update of BP on complex models. To fill this gap, in this paper we generalize (IL and) Z-IL by defining them directly on computational graphs. To our knowledge, this is the first biologically plausible algorithm shown to be equivalent to BP in how it updates parameters on any neural network, and it is thus a major breakthrough for interdisciplinary research in neuroscience and deep learning.
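The MLP result mentioned above (Z-IL reproducing the BP weight update) can be illustrated numerically. The following is only a minimal sketch, not the computational-graph algorithm of this paper, and it rests on assumptions that the abstract does not spell out: a squared-error loss, a tanh activation, a predictive coding network whose layer predictions are `W_l f(x_{l-1})`, and the Z-IL conditions as we understand them from the earlier MLP literature (value nodes initialised by a feedforward pass, inference step size 1, and the weights feeding layer `L - t` updated at inference step `t`). All function names are hypothetical.

```python
import math
import random

def f(v):
    # Element-wise activation; tanh is an assumption of this sketch.
    return [math.tanh(a) for a in v]

def df(v):
    # Derivative of tanh, element-wise.
    return [1.0 - math.tanh(a) ** 2 for a in v]

def matvec(W, v):
    return [sum(w * a for w, a in zip(row, v)) for row in W]

def mat_t_vec(W, v):
    # Computes W^T v without building the transpose.
    return [sum(W[i][j] * v[i] for i in range(len(W))) for j in range(len(W[0]))]

def outer(u, v):
    return [[a * b for b in v] for a in u]

def forward(weights, x_in):
    # Layer values y_0 = input, y_l = W_l f(y_{l-1}).
    vals = [list(x_in)]
    for W in weights:
        vals.append(matvec(W, f(vals[-1])))
    return vals

def bp_updates(weights, x_in, target):
    # Negative gradients of 0.5 * ||target - y_L||^2 with respect to each W_l.
    y = forward(weights, x_in)
    L = len(weights)
    delta = [t - o for t, o in zip(target, y[L])]
    updates = [None] * L
    for l in range(L, 0, -1):
        updates[l - 1] = outer(delta, f(y[l - 1]))
        back = mat_t_vec(weights[l - 1], delta)
        delta = [d * b for d, b in zip(df(y[l - 1]), back)]
    return updates

def zil_updates(weights, x_in, target):
    # Assumed Z-IL schedule: feedforward initialisation, step size 1,
    # and the update for W_l taken at inference step t = L - l.
    L = len(weights)
    x = forward(weights, x_in)   # hidden prediction errors start at zero
    x[L] = list(target)          # clamp the output value nodes to the label
    updates = [None] * L
    for t in range(L):
        # Local prediction errors eps_l = x_l - W_l f(x_{l-1}).
        eps = [None]
        for l in range(1, L + 1):
            mu = matvec(weights[l - 1], f(x[l - 1]))
            eps.append([a - m for a, m in zip(x[l], mu)])
        l = L - t
        updates[l - 1] = outer(eps[l], f(x[l - 1]))   # local, Hebbian-like rule
        # One simultaneous inference step on the hidden value nodes.
        new_x = list(x)
        for h in range(1, L):
            back = mat_t_vec(weights[h], eps[h + 1])
            new_x[h] = [a - e + d * b
                        for a, e, d, b in zip(x[h], eps[h], df(x[h]), back)]
        x = new_x
    return updates

def max_abs_diff(A, B):
    return max(abs(a - b)
               for MA, MB in zip(A, B)
               for ra, rb in zip(MA, MB)
               for a, b in zip(ra, rb))

random.seed(0)
sizes = [4, 5, 3, 2]   # input, two hidden layers, output (arbitrary choice)
weights = [[[random.uniform(-1, 1) for _ in range(sizes[i])]
            for _ in range(sizes[i + 1])] for i in range(len(sizes) - 1)]
x_in = [random.uniform(-1, 1) for _ in range(sizes[0])]
target = [random.uniform(-1, 1) for _ in range(sizes[-1])]

diff = max_abs_diff(bp_updates(weights, x_in, target),
                    zil_updates(weights, x_in, target))
print("largest difference between BP and Z-IL updates:", diff)
```

Under these assumptions the two sets of updates should agree up to floating-point rounding. In this sketch the weights are held fixed while the updates are collected, and each update uses only the error and activity local to one layer, which is the property that motivates predictive coding as a biologically plausible alternative.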
