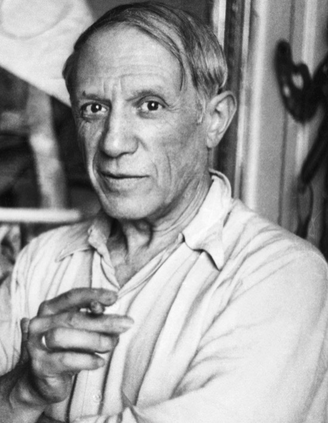

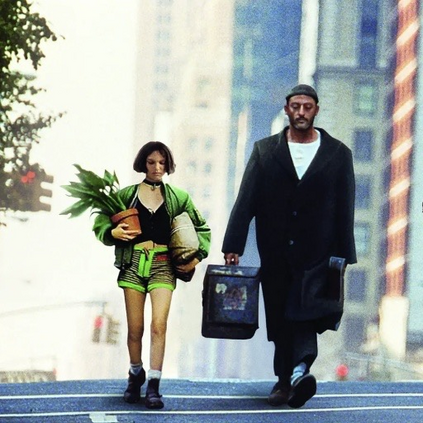

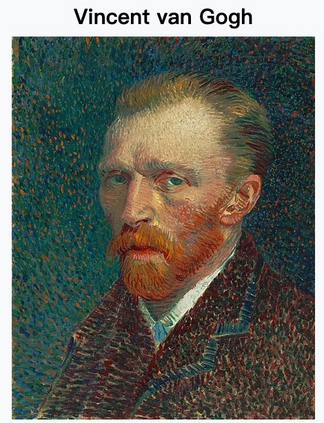

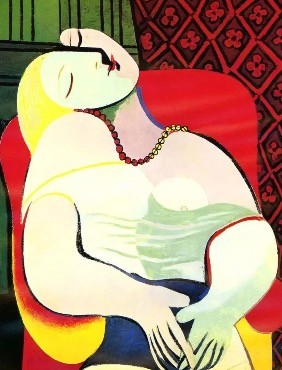

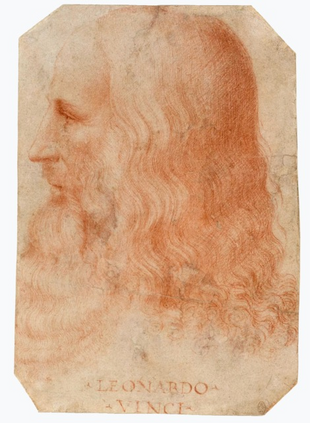

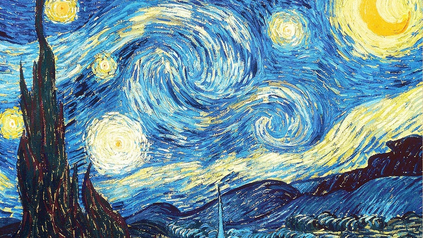

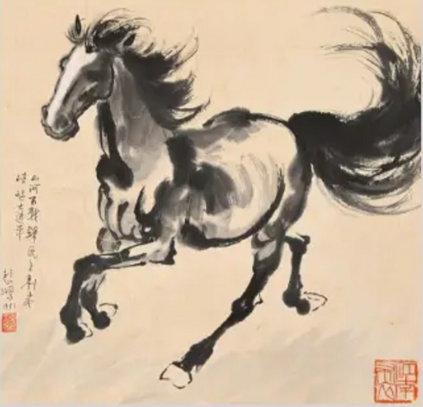

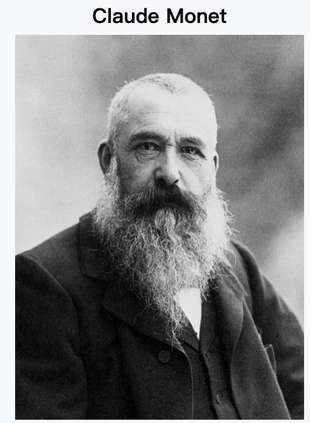

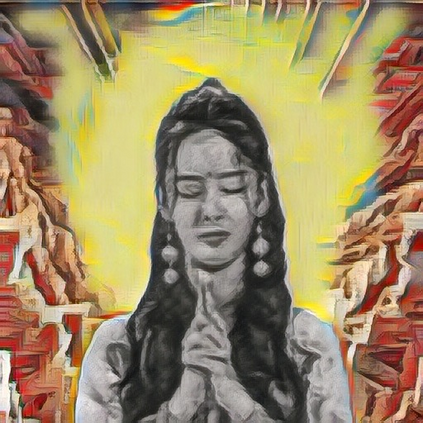

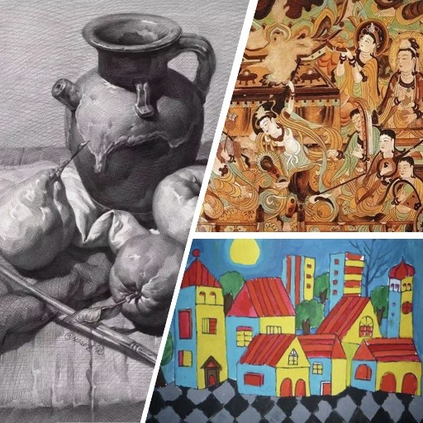

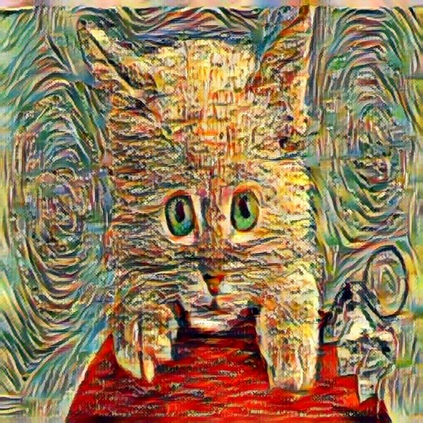

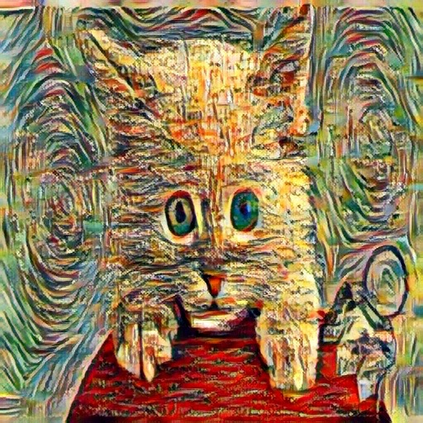

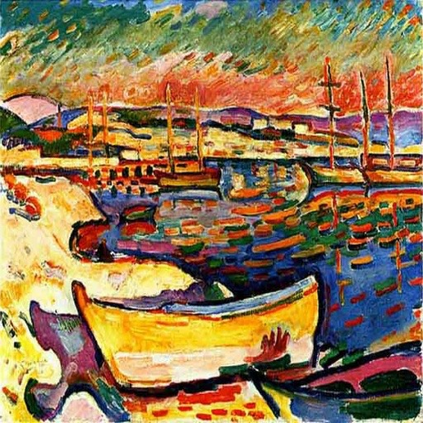

Style transfer aims to render the style of a reference style image onto a content image, and it has been widely adopted in artistic generation and image editing. Existing approaches either apply the holistic style of the style image globally, or migrate local colors and textures of the style image to the corresponding content regions in a pre-defined way. In either case, only one result can be generated for a given pair of content and style images, so these methods lack flexibility and struggle to satisfy users with different preferences. To address this drawback, we propose a novel strategy termed Any-to-Any Style Transfer, which enables users to interactively select the styles of regions in the style image and apply them to prescribed content regions. In this way, personalizable style transfer is achieved through human-computer interaction. At the heart of our approach lie (1) a region segmentation module based on Segment Anything, which supports region selection with only a few clicks or strokes drawn on the images and thus accepts user input conveniently and flexibly; and (2) an attention fusion module, which converts user inputs into control signals for the style transfer model. Experiments demonstrate the effectiveness of these modules for personalizable style transfer. Notably, our approach works in a plug-and-play manner: it can be ported to any style transfer method and enhances its controllability. Our code is available [here](https://github.com/Huage001/Transfer-Any-Style).
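The abstract describes the attention fusion module only at a high level. One plausible way user-selected regions could steer an attention-based stylizer is to mask the cross-attention so that each content token attends only to style tokens inside the style region the user paired with its content region. The following is a minimal pure-Python sketch under stated assumptions, not the authors' implementation: the function names `downsample_labels` and `region_masked_attention`, the integer label maps standing in for merged Segment Anything masks, the majority-vote downsampling to feature resolution, and the use of raw style features as both keys and values are all hypothetical choices made for illustration.

```python
import math
from collections import Counter
from typing import Dict, List, Sequence

Vector = List[float]


def downsample_labels(label_map: List[List[int]], factor: int) -> List[int]:
    """Hypothetical helper: pool a pixel-level region label map (assumed to be
    built by merging user-selected Segment Anything masks) onto a coarser
    feature grid. Each factor x factor block takes its most frequent label;
    the result is flattened row-major to line up with a token sequence."""
    h, w = len(label_map), len(label_map[0])
    tokens = []
    for i in range(0, h, factor):
        for j in range(0, w, factor):
            block = [
                label_map[y][x]
                for y in range(i, min(i + factor, h))
                for x in range(j, min(j + factor, w))
            ]
            tokens.append(Counter(block).most_common(1)[0][0])
    return tokens


def _dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def region_masked_attention(
    content: List[Vector],
    style: List[Vector],
    content_labels: List[int],
    style_labels: List[int],
    region_map: Dict[int, int],
) -> List[Vector]:
    """Hypothetical sketch of region-restricted cross-attention.

    Content tokens are queries; style tokens serve as keys and values.
    region_map[c] = s means content region c takes its style only from
    style region s. Content regions absent from region_map attend to every
    style token, i.e. they fall back to ordinary global attention."""
    scale = 1.0 / math.sqrt(len(content[0]))
    dim = len(style[0])
    out: List[Vector] = []
    for q, c_label in zip(content, content_labels):
        target = region_map.get(c_label)
        # Logits outside the permitted style region are set to -inf.
        logits = [
            _dot(q, k) * scale if target is None or s_label == target else -math.inf
            for k, s_label in zip(style, style_labels)
        ]
        peak = max(logits)
        if peak == -math.inf:
            raise ValueError(f"style region {target} contains no style tokens")
        # Softmax with max-subtraction; masked entries become exp(-inf) == 0.0.
        weights = [math.exp(v - peak) for v in logits]
        total = sum(weights)
        out.append(
            [sum(wt * v[d] for wt, v in zip(weights, style)) / total for d in range(dim)]
        )
    return out


if __name__ == "__main__":
    # Toy 4x4 content label map: region 0 top-left, 1 top-right, 2 bottom half.
    content_labels = downsample_labels(
        [[0, 0, 1, 1], [0, 0, 1, 1], [2, 2, 2, 2], [2, 2, 2, 2]], factor=2
    )
    print(content_labels)  # [0, 1, 2, 2]
    content = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
    style = [[1.0, 0.0], [0.0, 1.0], [5.0, 5.0]]
    style_labels = [0, 0, 1]
    # The user pairs content region 0 with style region 1; regions 1 and 2 stay global.
    fused = region_masked_attention(content, style, content_labels, style_labels, {0: 1})
    print(fused[0])  # [5.0, 5.0]
```

Masking the logits before the softmax keeps each row a proper probability distribution over only the permitted style tokens. Because only the attention weights change, this kind of masking could in principle be applied to an attention-based stylization backbone without retraining it, which is in the spirit of the plug-and-play claim in the abstract.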
