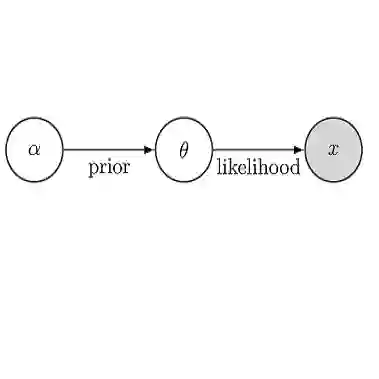

Deep learning is usually described as an experiment-driven field that faces continual criticism for lacking theoretical foundations. This problem has been partially addressed by a large body of literature, which has so far not been well organized. This paper reviews and organizes recent advances in deep learning theory. The literature is categorized into six groups: (1) complexity and capacity-based approaches for analyzing the generalizability of deep learning; (2) stochastic differential equations and their dynamic systems for modelling stochastic gradient descent and its variants, which characterize the optimization and generalization of deep learning and are partially inspired by Bayesian inference; (3) the geometrical structures of the loss landscape that drive the trajectories of the dynamic systems; (4) the roles of over-parameterization of deep neural networks, from both positive and negative perspectives; (5) theoretical foundations of several special structures in network architectures; and (6) the increasingly intensive concerns about ethics and security and their relationships with generalizability.
