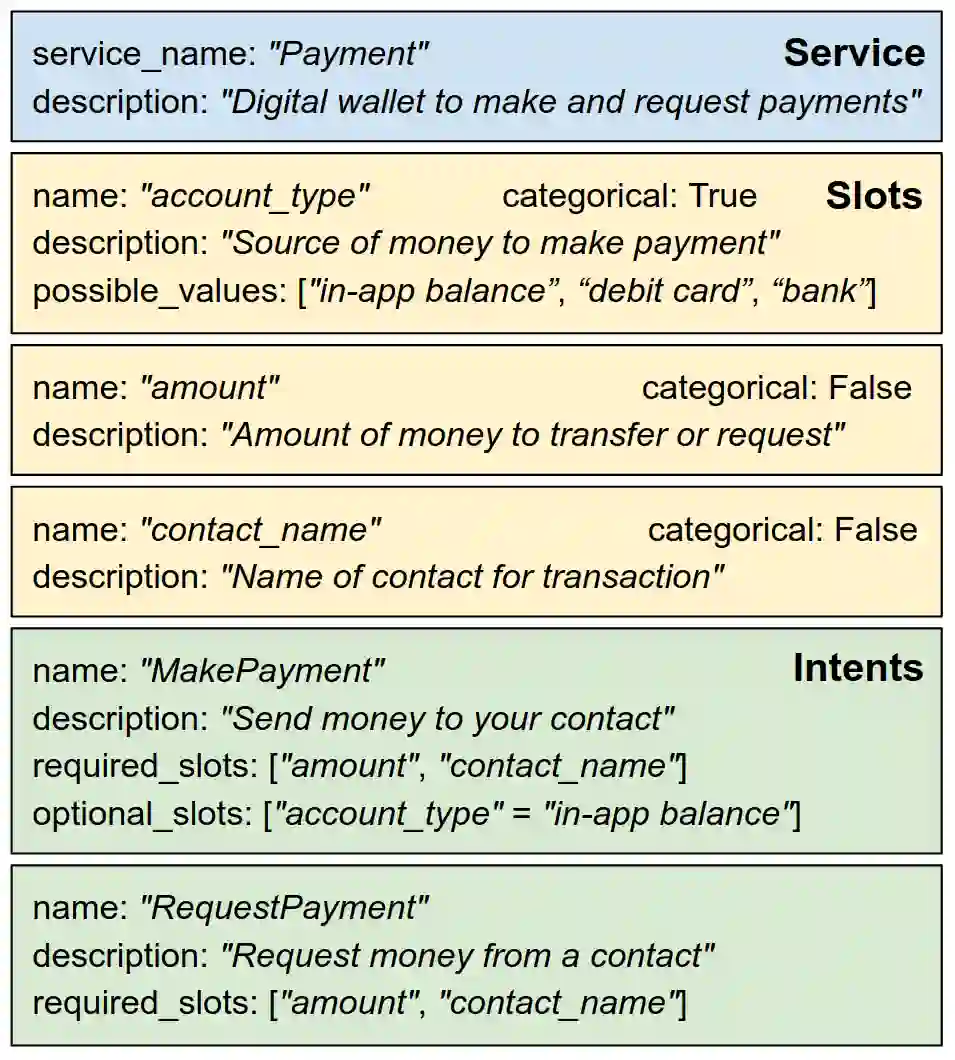

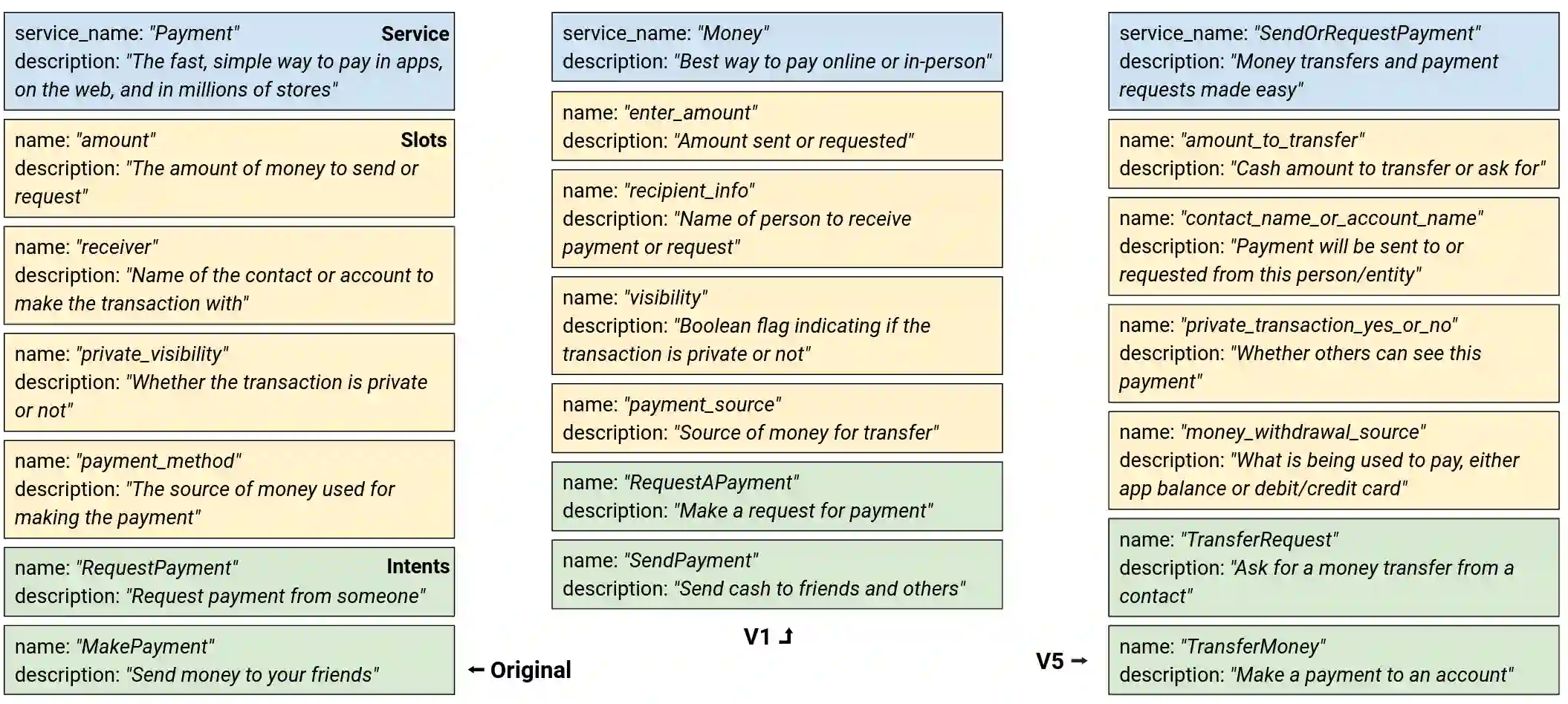

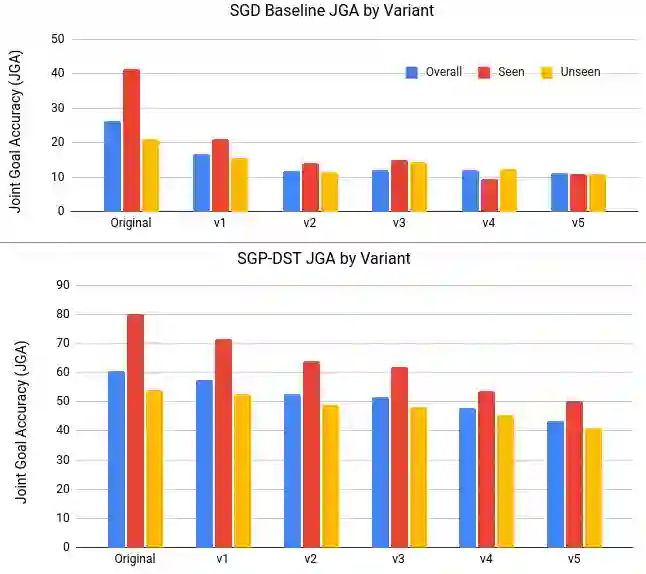

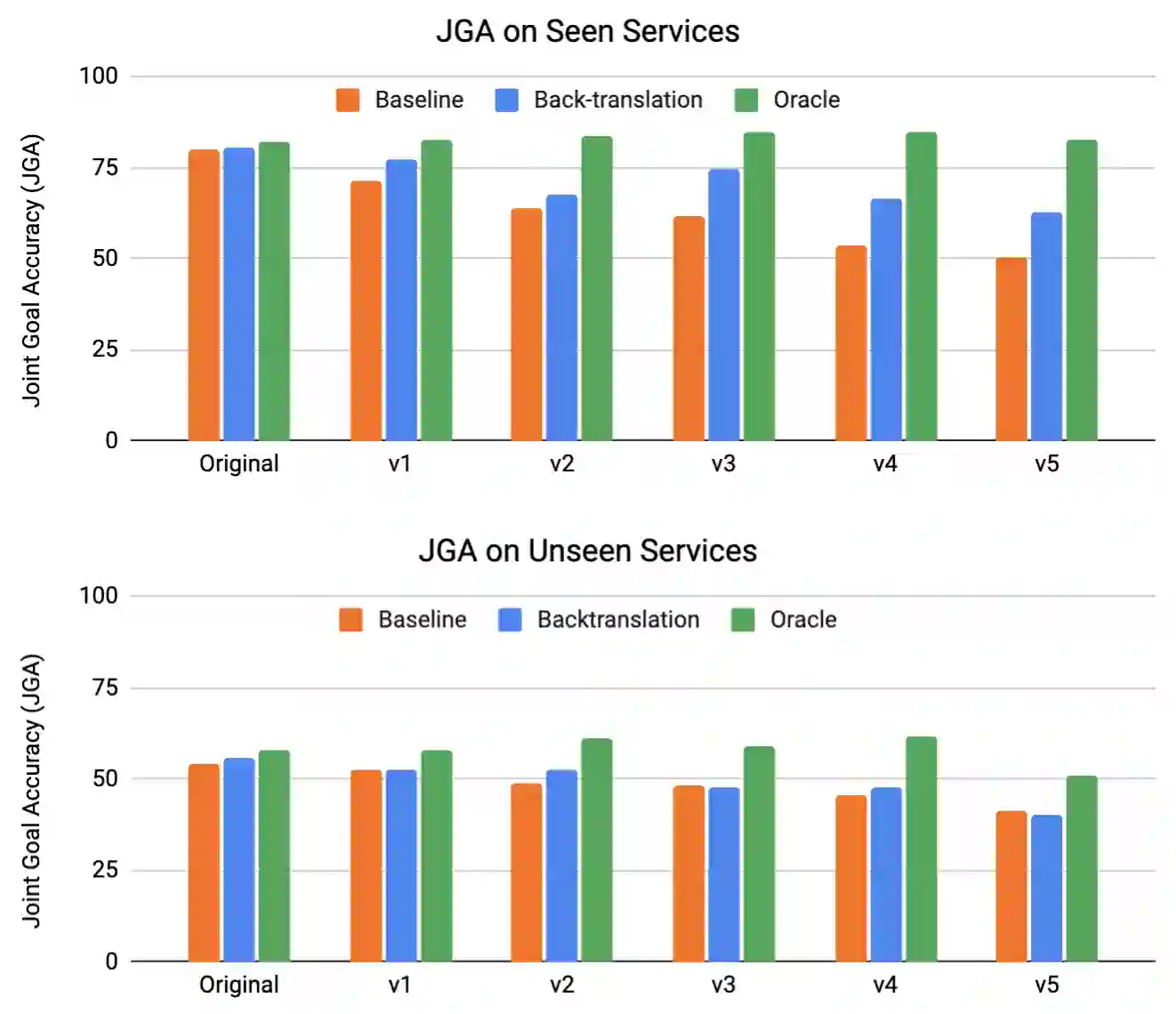

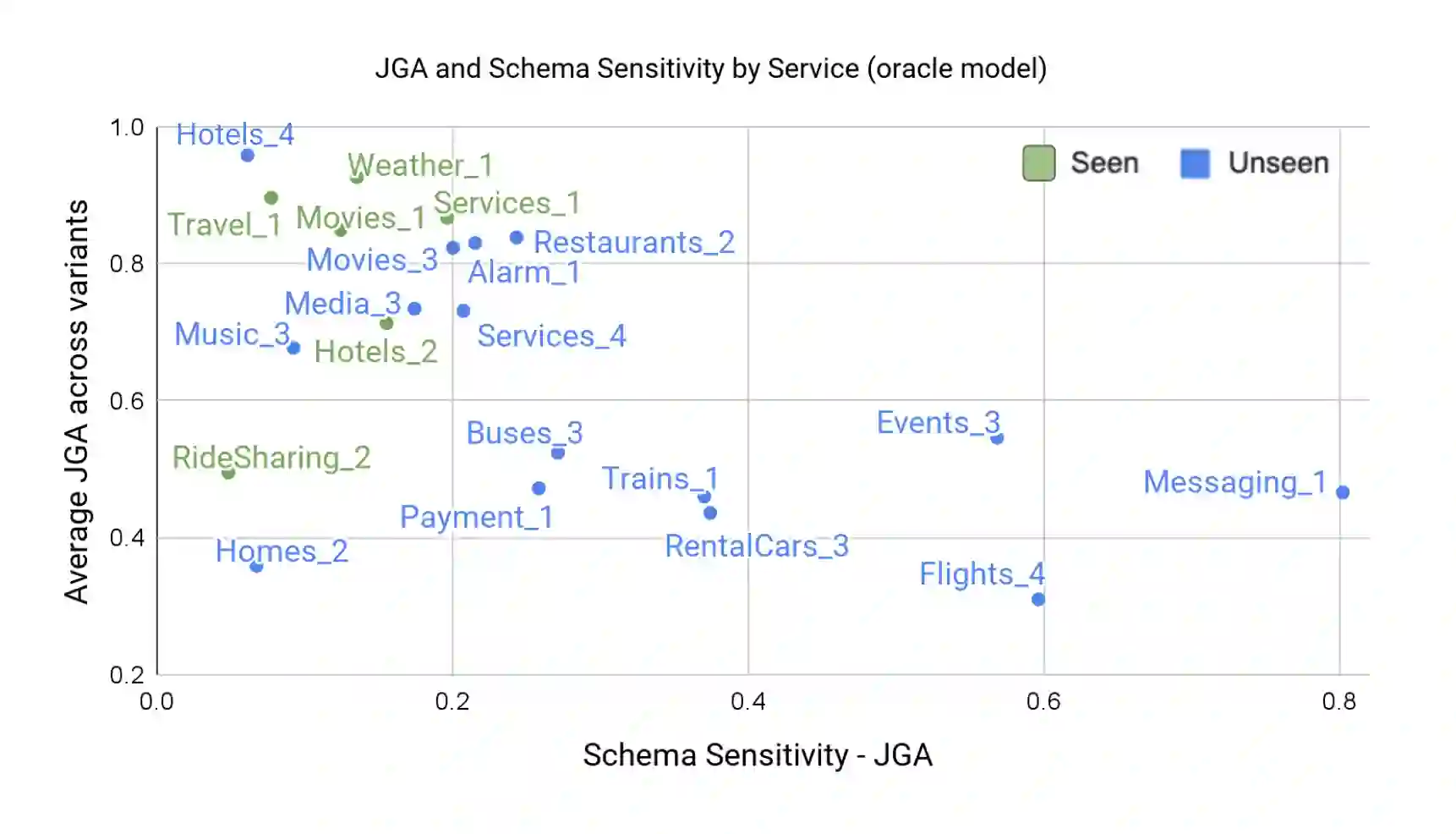

Zero/few-shot transfer to unseen services is a critical challenge in task-oriented dialogue research. The Schema-Guided Dialogue (SGD) dataset introduced a paradigm for enabling models to support an unlimited number of services without additional data collection or re-training through the use of schemas. Schemas describe service APIs in natural language, which models consume to understand the services they need to support. However, the impact of the choice of language in these schemas on model performance remains unexplored. We address this by releasing SGD-X, a benchmark for measuring the robustness of dialogue systems to linguistic variations in schemas. SGD-X extends the SGD dataset with crowdsourced variants for every schema, where variants are semantically similar yet stylistically diverse. We evaluate two dialogue state tracking models on SGD-X and observe that neither generalizes well across schema variations, measured by joint goal accuracy and a novel metric for measuring schema sensitivity. Furthermore, we present a simple model-agnostic data augmentation method to improve schema robustness and zero-shot generalization to unseen services.
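To make the evaluation idea concrete, the sketch below scores one model's predictions under several schema variants and measures how much the score moves between them. It is a minimal illustration, not the benchmark's implementation: joint goal accuracy is computed in its usual form (a turn counts as correct only if the whole predicted state matches the gold state), while the spread measure is an assumption, since the abstract does not define the schema sensitivity metric. Here it is stood in for by the coefficient of variation of the score across variants. The function names, variant names, and toy dialogue states are all hypothetical.

```python
from statistics import mean, pstdev


def joint_goal_accuracy(predicted, gold):
    """Fraction of turns whose predicted dialogue state equals the gold state exactly."""
    if len(predicted) != len(gold):
        raise ValueError("predicted and gold must cover the same turns")
    if not gold:
        return 0.0
    correct = sum(1 for p, g in zip(predicted, gold) if p == g)
    return correct / len(gold)


def variation_across_variants(scores):
    """Coefficient of variation (population std / mean) of a metric across variants.

    Assumption: used here only as a stand-in for a schema sensitivity
    measure; the abstract does not give the actual definition.
    """
    avg = mean(scores)
    if avg == 0:
        return 0.0
    return pstdev(scores) / avg


# Toy gold states for a two-turn dialogue (hypothetical slots and values).
gold = [
    {"city": "Paris"},
    {"city": "Paris", "date": "March 3"},
]

# Hypothetical predictions from the same model, each made with a different
# wording of the same schema.
predictions_by_variant = {
    "original": [{"city": "Paris"}, {"city": "Paris", "date": "March 3"}],
    "variant_1": [{"city": "Paris"}, {"city": "Paris"}],  # misses the date slot
    "variant_2": [{"city": "Paris"}, {"city": "Paris", "date": "March 3"}],
}

jga = {
    name: joint_goal_accuracy(preds, gold)
    for name, preds in predictions_by_variant.items()
}
spread = variation_across_variants(list(jga.values()))

for name, score in jga.items():
    print(f"{name}: JGA = {score:.2f}")
print(f"variation across variants = {spread:.3f}")
```

A model that is robust to schema wording would show similar accuracy under every variant and therefore a spread near zero; a large spread indicates that predictions depend on how the schema happens to be phrased.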
