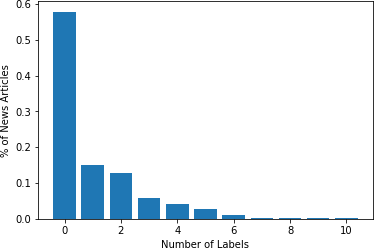

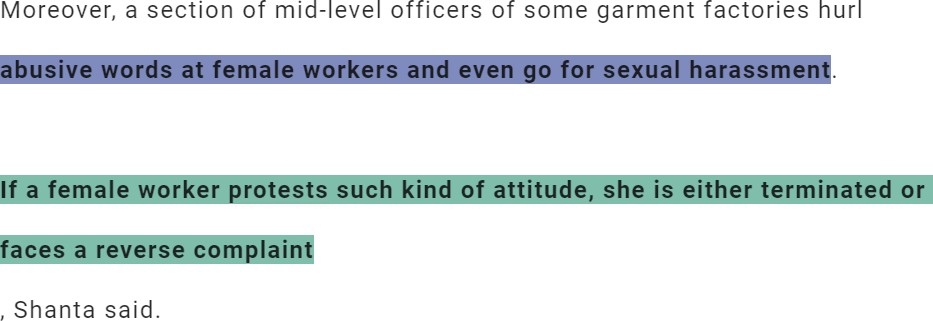

Forced labour is the most common type of modern slavery, and it is attracting growing attention from the research community and wider society. Recent studies suggest that artificial intelligence (AI) holds immense potential for augmenting anti-slavery action. However, AI tools need to be developed transparently and in cooperation with different stakeholders. Such tools depend on the availability of, and access to, domain-specific data, which are scarce because forced labour is nearly invisible. To the best of our knowledge, this paper presents the first openly accessible English corpus annotated for multi-class and multi-label forced labour detection. The corpus consists of 989 news articles retrieved from specialised data sources and annotated according to risk indicators defined by the International Labour Organization (ILO). Each news article was annotated for two aspects: (1) indicators of forced labour, used as classification labels, and (2) snippets of the text that justify the labelling decisions. We hope that our corpus can help promote research on explainability for multi-class and multi-label text classification. In this work, we explain our process for collecting the data underpinning the proposed corpus, describe our annotation guidelines and present a statistical analysis of its content. Finally, we summarise the results of baseline experiments based on different variants of the Bidirectional Encoder Representations from Transformers (BERT) model.
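To make the two annotation aspects concrete, the following is a minimal sketch of how one annotated article might be represented and turned into a multi-label target vector. It is an illustration under stated assumptions, not the corpus's actual schema: the `AnnotatedArticle` class, its field names, the `to_multi_hot` helper and the example sentence are all hypothetical. The label names follow the ILO's published list of eleven forced labour indicators; the label set actually used in the corpus may differ.

```python
from dataclasses import dataclass, field

# The eleven ILO indicators of forced labour, written as identifiers.
# Assumption: the corpus's real label set and naming may differ.
ILO_INDICATORS = [
    "abuse_of_vulnerability",
    "deception",
    "restriction_of_movement",
    "isolation",
    "physical_and_sexual_violence",
    "intimidation_and_threats",
    "retention_of_identity_documents",
    "withholding_of_wages",
    "debt_bondage",
    "abusive_working_and_living_conditions",
    "excessive_overtime",
]


@dataclass
class AnnotatedArticle:
    """Hypothetical record: one article with labels and supporting snippets."""
    text: str
    # Aspect (1): zero or more indicators per article (multi-label).
    labels: list[str] = field(default_factory=list)
    # Aspect (2): for each label, the text snippets that justify it.
    rationales: dict[str, list[str]] = field(default_factory=dict)


def to_multi_hot(article: AnnotatedArticle) -> list[int]:
    """Encode the article's labels as a 0/1 vector over ILO_INDICATORS."""
    unknown = set(article.labels) - set(ILO_INDICATORS)
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    return [1 if name in article.labels else 0 for name in ILO_INDICATORS]


# Invented example sentence, not taken from the corpus.
example = AnnotatedArticle(
    text="Workers said their passports were taken and wages went unpaid for months.",
    labels=["retention_of_identity_documents", "withholding_of_wages"],
    rationales={
        "retention_of_identity_documents": ["their passports were taken"],
        "withholding_of_wages": ["wages went unpaid for months"],
    },
)

target = to_multi_hot(example)
```

A vector of this shape is the usual target for a multi-label classifier with one sigmoid output per indicator, while the rationale snippets can serve as reference explanations when evaluating explainability methods.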
