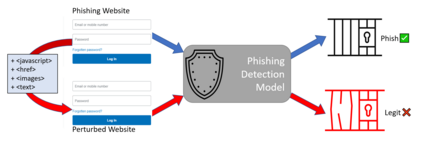

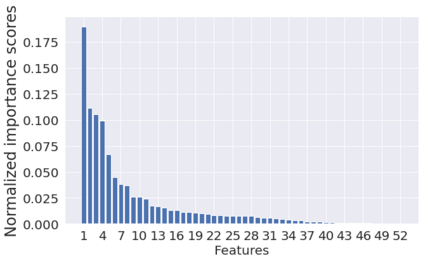

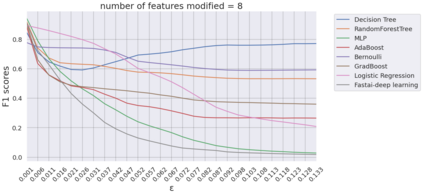

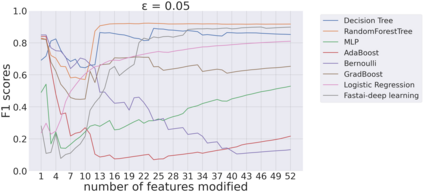

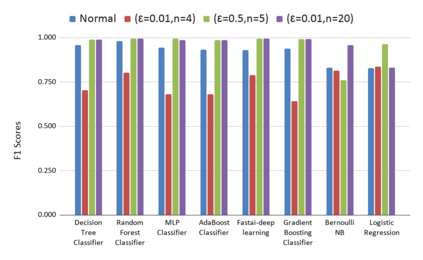

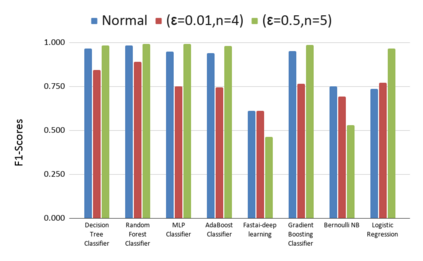

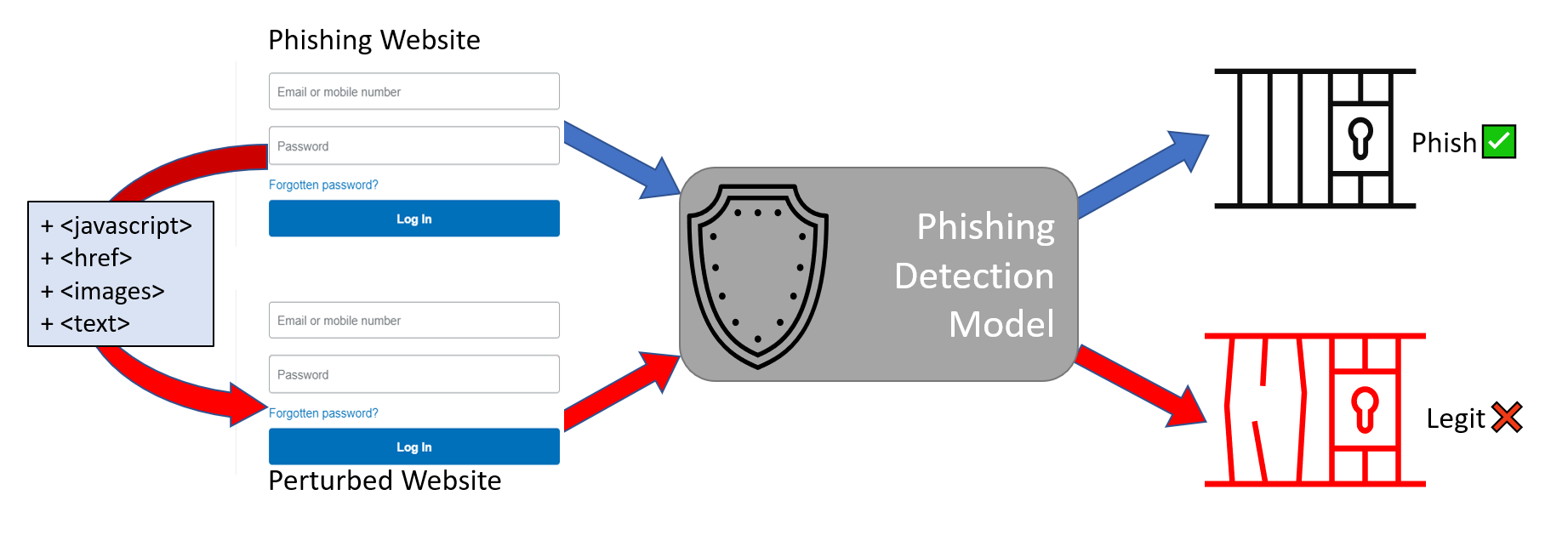

Machine learning models are susceptible to adversarial attacks that dramatically reduce their performance, and reliable defenses against these attacks remain an unsolved challenge. In this work, we present a novel evasion attack, the Feature Importance Guided Attack (FIGA), which generates adversarial evasion samples. FIGA is model-agnostic: it assumes no prior knowledge of the defending model's learning algorithm, but it does assume knowledge of the feature representation. FIGA leverages feature importance rankings, perturbing the most important features of the input in the direction of the target class we wish to mimic. We demonstrate FIGA against eight phishing detection models. We keep the attack realistic by perturbing phishing website features that an adversary would control. FIGA reduces the F1-score of a phishing detection model from 0.96 to 0.41 on average. Finally, we implement adversarial training as a defense against FIGA and show that, while it is sometimes effective, it can be evaded by changing FIGA's parameters.
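To make the core idea concrete, the following is a minimal sketch of a feature-importance-guided perturbation, not the authors' implementation. The abstract does not specify how features are ranked or how large the perturbation is, so several pieces here are assumptions for illustration only: the importance score (the gap between class means), the additive step `epsilon`, the count `n_top`, the `controllable` set of adversary-controlled feature indices, and the function names `rank_features` and `figa_like_perturb`.

```python
from statistics import mean


def rank_features(X, y):
    """Rank feature indices by a stand-in importance score.

    Assumption: importance is the absolute gap between the legitimate-class
    mean (label 0) and the phishing-class mean (label 1). The sign of the gap
    gives the direction that moves a phishing sample toward the target class.
    """
    legit = [row for row, label in zip(X, y) if label == 0]
    phish = [row for row, label in zip(X, y) if label == 1]
    n_features = len(X[0])
    gaps = [
        mean(r[j] for r in legit) - mean(r[j] for r in phish)
        for j in range(n_features)
    ]
    order = sorted(range(n_features), key=lambda j: abs(gaps[j]), reverse=True)
    return order, gaps


def figa_like_perturb(x, order, gaps, controllable, n_top, epsilon):
    """Shift the n_top most important controllable features by epsilon
    in the direction of the target (legitimate) class."""
    x_adv = list(x)  # copy so the original sample is left untouched
    candidates = [j for j in order if j in controllable]
    for j in candidates[:n_top]:
        direction = (gaps[j] > 0) - (gaps[j] < 0)  # sign of the gap: -1, 0, or 1
        x_adv[j] += direction * epsilon
    return x_adv


# Toy data (hypothetical): three features, label 1 = phishing, 0 = legitimate.
X = [
    [1.0, 0.0, 0.5],
    [1.0, 0.0, 0.5],
    [0.0, 0.5, 0.5],
    [0.0, 0.5, 0.5],
]
y = [1, 1, 0, 0]

order, gaps = rank_features(X, y)
x = X[0]
x_adv = figa_like_perturb(x, order, gaps, controllable={0, 1}, n_top=2, epsilon=0.25)
print("ranking:", order)
print("original:", x)
print("adversarial:", x_adv)
```

Because the sketch only needs the training data's feature representation and labels, it never queries the defending model, which mirrors the model-agnostic assumption stated above. Varying `n_top` and `epsilon` corresponds to the parameter changes that the abstract says can evade adversarial training.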