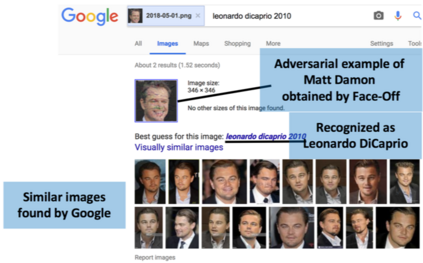

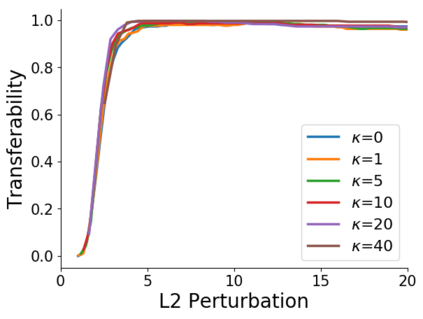

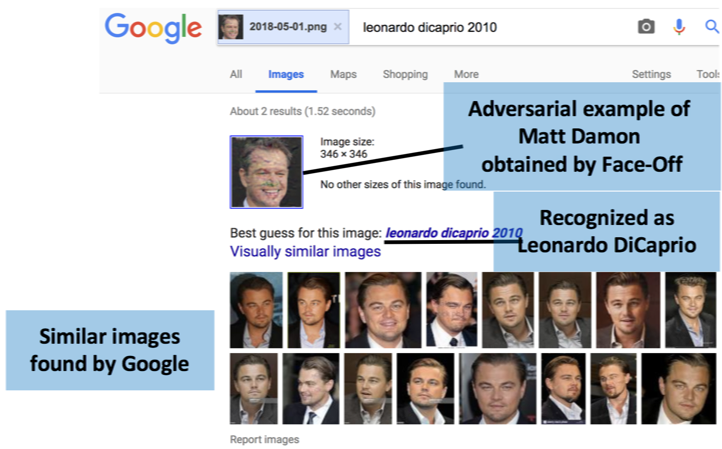

Advances in deep learning have made face recognition increasingly feasible and pervasive. While useful to social media platforms and users, this technology carries significant privacy threats. By combining face recognition with the abundant information they hold about users, service providers can associate users with social interactions, visited places, activities, and preferences, some of which the user may not want to share. Additionally, facial recognition models used by various agencies are trained on data scraped from social media platforms. Existing approaches to mitigating the privacy risks of unwanted face recognition leave users with an imbalanced privacy-utility trade-off. In this paper, we address this trade-off by proposing Face-Off, a privacy-preserving framework that introduces minor perturbations to the user's face to prevent it from being correctly recognized. To realize Face-Off, we overcome a set of challenges related to the black-box nature of commercial face recognition services and the lack of literature on adversarial attacks against metric networks. We implement and evaluate Face-Off and find that it deceives three commercial face recognition services, from Microsoft, Amazon, and Face++. Our user study with 330 participants further shows that the perturbations come at an acceptable cost for the users.
