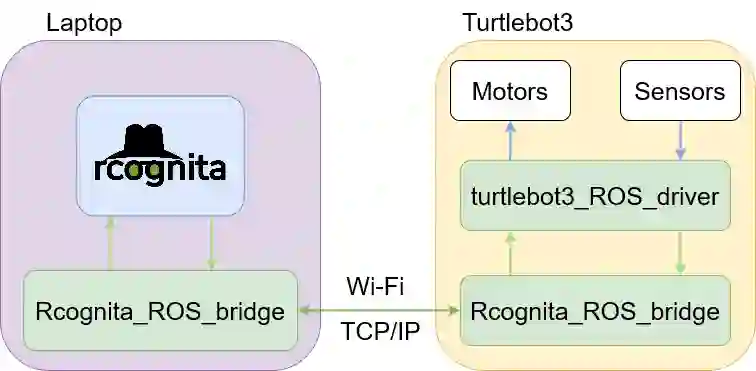

A common setting in reinforcement learning (RL) is the Markov decision process (MDP), in which the environment is a stochastic discrete-time dynamical system. Whereas MDPs suit applications such as video games or puzzles, physical systems evolve in continuous time. A more general variant of RL is the digital setting, in which the value function and policy are updated at discrete moments in time. The agent-environment loop then amounts to a sampled system, of which sample-and-hold is a special case. In this paper, we propose and benchmark two RL methods suitable for sampled systems. Specifically, we hybridize model-predictive control (MPC) with critics that learn the Q-function and the value function. Optimality is analyzed, and performance is compared in an experimental case study with a mobile robot.
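To make the sample-and-hold idea concrete, the following is a minimal sketch and not the method proposed in the paper. It assumes a hypothetical unicycle-type robot model, a hypothetical constant policy, and illustrative values for the sampling time and horizon. The agent picks an action only at sampling instants, and that action is held constant while the continuous-time dynamics are integrated with small Euler steps.

```python
import math


def robot_dynamics(state, action):
    """Continuous-time kinematics of a hypothetical unicycle-type robot (an assumption)."""
    x, y, theta = state
    v, omega = action  # linear and angular velocity commands
    return (v * math.cos(theta), v * math.sin(theta), omega)


def simulate_sample_and_hold(policy, state, sampling_time=0.1, horizon=2.0, substeps=100):
    """Integrate the dynamics while each action is held between sampling instants."""
    n_samples = round(horizon / sampling_time)
    dt = sampling_time / substeps  # fine Euler step approximating continuous time
    trajectory = [state]
    for _ in range(n_samples):
        action = policy(state)  # the agent acts only at sampling instants
        for _ in range(substeps):  # the environment keeps evolving in between
            derivative = robot_dynamics(state, action)
            state = tuple(s + dt * d for s, d in zip(state, derivative))
        trajectory.append(state)
    return trajectory


def constant_policy(state):
    """Hypothetical placeholder policy: drive straight at unit speed."""
    return (1.0, 0.0)


trajectory = simulate_sample_and_hold(constant_policy, (0.0, 0.0, 0.0))
```

In an RL method for sampled systems, `constant_policy` would be replaced by the learned or MPC-based controller, and the critic would be updated from the states recorded at the sampling instants in `trajectory`.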
