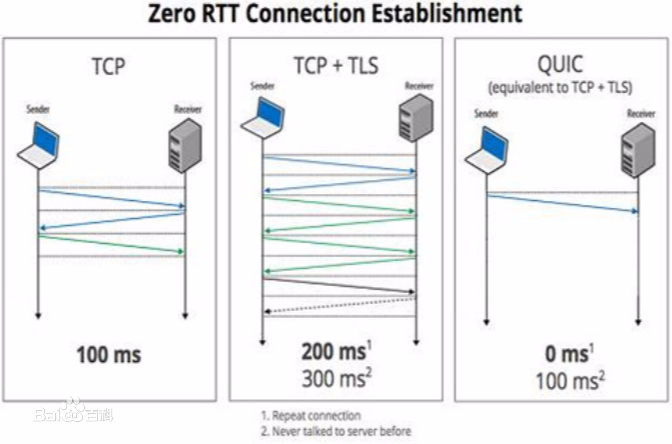

Effective network congestion control strategies are key to keeping the Internet (or any large computer network) operational. Network congestion control has been dominated by hand-crafted heuristics for decades. Recently, Reinforcement Learning (RL) has emerged as an alternative that optimizes such control strategies automatically. Research so far has primarily considered RL interfaces that block the sender while an agent decides on its next action. This is largely an artifact of building on top of frameworks designed for RL in games (e.g. OpenAI Gym). However, this design does not translate to real-world networking environments, where a sender that waits on a policy without sending data pays a cost in throughput. We instead propose to formulate congestion control with an asynchronous RL agent that handles delayed actions. We present MVFST-RL, a scalable framework for congestion control in the QUIC transport protocol that leverages the state of the art in asynchronous RL training with off-policy correction. We analyze modeling improvements to mitigate the deviation from Markovian dynamics, and evaluate our method on emulated networks from the Pantheon benchmark platform. The source code is publicly available at https://github.com/facebookresearch/mvfst-rl.
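The throughput cost of a blocking interface can be illustrated with a toy tick-based simulation. This is only a sketch under stated assumptions, not the MVFST-RL implementation: the names `run_blocking`, `run_async`, `policy_delay`, and `cwnd` are hypothetical, the congestion window is held constant, and a policy decision is assumed to take a fixed number of ticks.

```python
from dataclasses import dataclass


@dataclass
class Result:
    packets_sent: int      # total packets sent over the simulated run
    actions_applied: int   # number of policy decisions that took effect


def run_blocking(steps: int, policy_delay: int, cwnd: int = 10) -> Result:
    """Hypothetical blocking loop: the sender stalls while the policy thinks."""
    sent = applied = 0
    t = 0
    while t < steps:
        # No data leaves the sender during these ticks.
        t += policy_delay
        if t >= steps:
            break
        applied += 1
        sent += cwnd  # one tick of sending with the chosen window
        t += 1
    return Result(sent, applied)


def run_async(steps: int, policy_delay: int, cwnd: int = 10) -> Result:
    """Hypothetical asynchronous loop: the sender never waits for the policy."""
    sent = applied = 0
    ready_at = None  # tick at which the pending action becomes available
    for t in range(steps):
        if ready_at is None:
            # Request an action based on the state observed at tick t.
            ready_at = t + policy_delay
        elif t >= ready_at:
            # The action arrives late: it was computed from an older state.
            applied += 1
            ready_at = t + policy_delay
        sent += cwnd  # sending continues on every tick
    return Result(sent, applied)


if __name__ == "__main__":
    print(run_blocking(steps=100, policy_delay=4))
    print(run_async(steps=100, policy_delay=4))
```

With 100 ticks and a 4-tick policy delay, the blocking loop sends 200 packets while the asynchronous loop sends 1000. The price of the asynchronous design is that each action is applied to a state that has moved on since the observation was taken, which is the deviation from Markovian dynamics the abstract refers to.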
