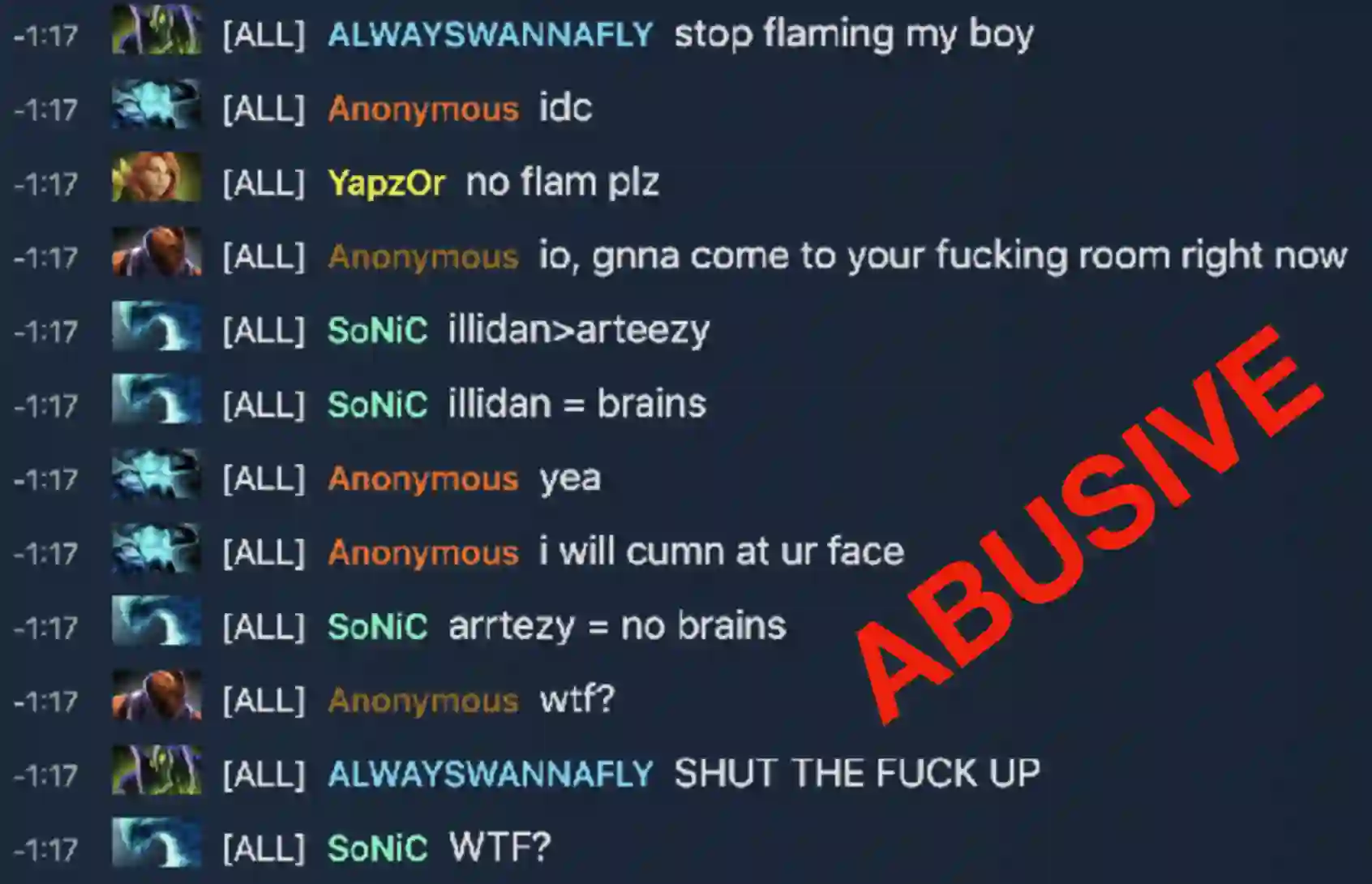

Textual data can pose a risk of serious harm. These harms can be categorised along three axes: (1) the harm type (e.g. misinformation, hate speech, or racial stereotypes); (2) whether the harm is *elicited* as a feature of the research design, by directly studying harmful content (e.g. training a hate speech classifier or auditing unfiltered large-scale datasets), or *spuriously* invoked by working on unrelated problems (e.g. language generation or part-of-speech tagging) with datasets that nonetheless contain harmful content; and (3) who it affects, from the humans (mis)represented in the data, to those handling or labelling the data, to readers and reviewers of publications produced from the data. How textual harms should be handled, presented, and discussed remains an unsolved problem in NLP, but stopping work on content that poses a risk of harm is untenable. Accordingly, we provide practical advice and introduce HarmCheck, a resource for reflecting on research into textual harms. We hope our work encourages ethical, responsible, and respectful research in the NLP community.
