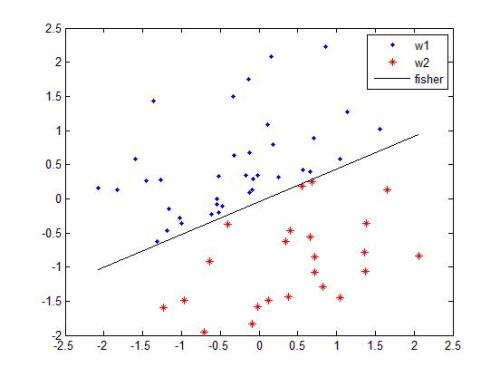

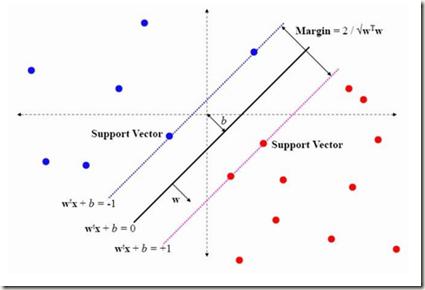

Many classification problems focus on maximizing performance only on the most relevant samples rather than on all samples. Examples include ranking problems, accuracy at the top, and search engines, where only the top few queries matter. In our previous work, we derived a general framework that encompasses several classes of these linear classification problems. In this paper, we extend the framework to nonlinear classifiers. Exploiting a similarity to SVMs, we dualize the problems, add kernels, and propose a componentwise dual ascent method.
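
To illustrate the general idea of kernelizing a dual problem and solving it one component at a time, here is a minimal sketch in pure Python. It is an assumption-laden stand-in, not the paper's method. It solves the standard kernel SVM dual (hinge loss, no bias term) rather than the paper's top-sample formulations. The dual is `max sum(alpha) - 0.5 * alpha^T Q alpha` subject to `0 <= alpha_i <= C`, with `Q_ij = y_i y_j K(x_i, x_j)`. Each step maximizes the objective exactly in one coordinate and then clips the result to the box. The function names, the RBF kernel choice, and the parameters `C`, `gamma`, and `epochs` are all hypothetical.

```python
import math
import random


def rbf_kernel(a, b, gamma=1.0):
    """Gaussian (RBF) kernel; an illustrative choice, any PSD kernel works."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-gamma * sq_dist)


def dual_coordinate_ascent(X, y, C=1.0, gamma=1.0, epochs=50, seed=0):
    """Hypothetical sketch: maximize sum(alpha) - 0.5 * alpha^T Q alpha
    over the box 0 <= alpha_i <= C, one coordinate at a time."""
    n = len(X)
    K = [[rbf_kernel(X[i], X[j], gamma) for j in range(n)] for i in range(n)]
    alpha = [0.0] * n
    q_alpha = [0.0] * n  # cache of (Q alpha)_i so each update costs O(n)
    rng = random.Random(seed)
    order = list(range(n))
    for _ in range(epochs):
        rng.shuffle(order)  # random sweep order over the components
        for i in order:
            q_ii = K[i][i]  # y_i^2 = 1, so Q_ii = K_ii (> 0 for RBF)
            grad = 1.0 - q_alpha[i]  # d(dual objective) / d(alpha_i)
            # Exact 1-D maximizer of the concave quadratic, clipped to [0, C].
            new_alpha = min(max(alpha[i] + grad / q_ii, 0.0), C)
            delta = new_alpha - alpha[i]
            if delta != 0.0:
                alpha[i] = new_alpha
                for j in range(n):
                    q_alpha[j] += delta * y[i] * y[j] * K[i][j]
    return alpha


def dual_objective(alpha, X, y, gamma=1.0):
    """Value of the (hypothetical) SVM dual objective at alpha."""
    n = len(X)
    quad = sum(alpha[i] * alpha[j] * y[i] * y[j] * rbf_kernel(X[i], X[j], gamma)
               for i in range(n) for j in range(n))
    return sum(alpha) - 0.5 * quad


def decision_function(alpha, X, y, x_new, gamma=1.0):
    """Kernel classifier f(x) = sum_i alpha_i y_i K(x_i, x); predict sign(f)."""
    return sum(a * yi * rbf_kernel(xi, x_new, gamma) for a, yi, xi in zip(alpha, y, X))
```

Usage on a toy problem: `alpha = dual_coordinate_ascent([[0, 0], [0, 1], [3, 3], [3, 4]], [-1, -1, 1, 1])`, then classify a point by the sign of `decision_function`. Caching `Q alpha` keeps each componentwise update at O(n). The exact per-coordinate step needs no step size, which is one reason componentwise ascent is attractive for box-constrained duals of this kind.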
