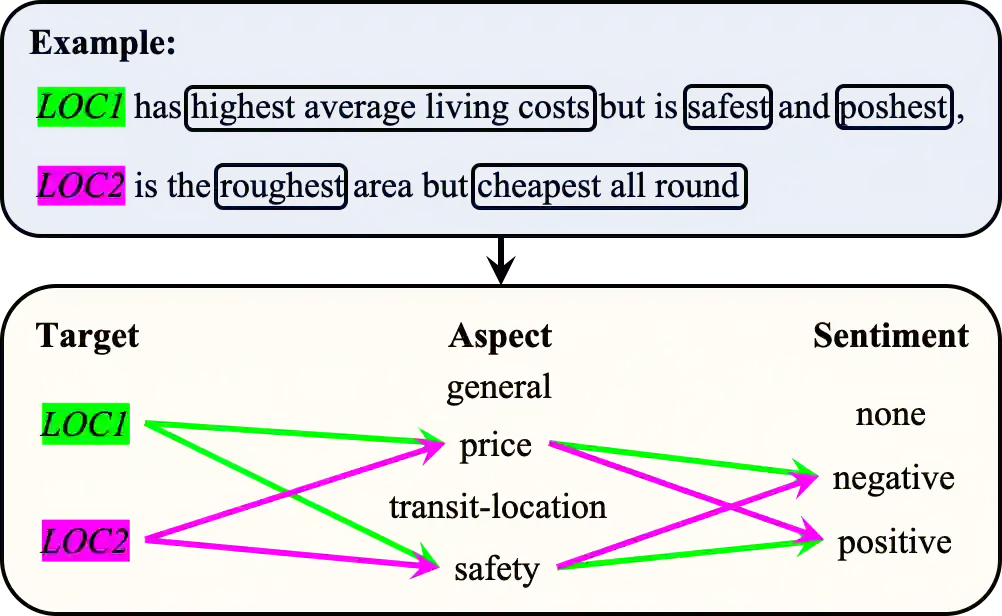

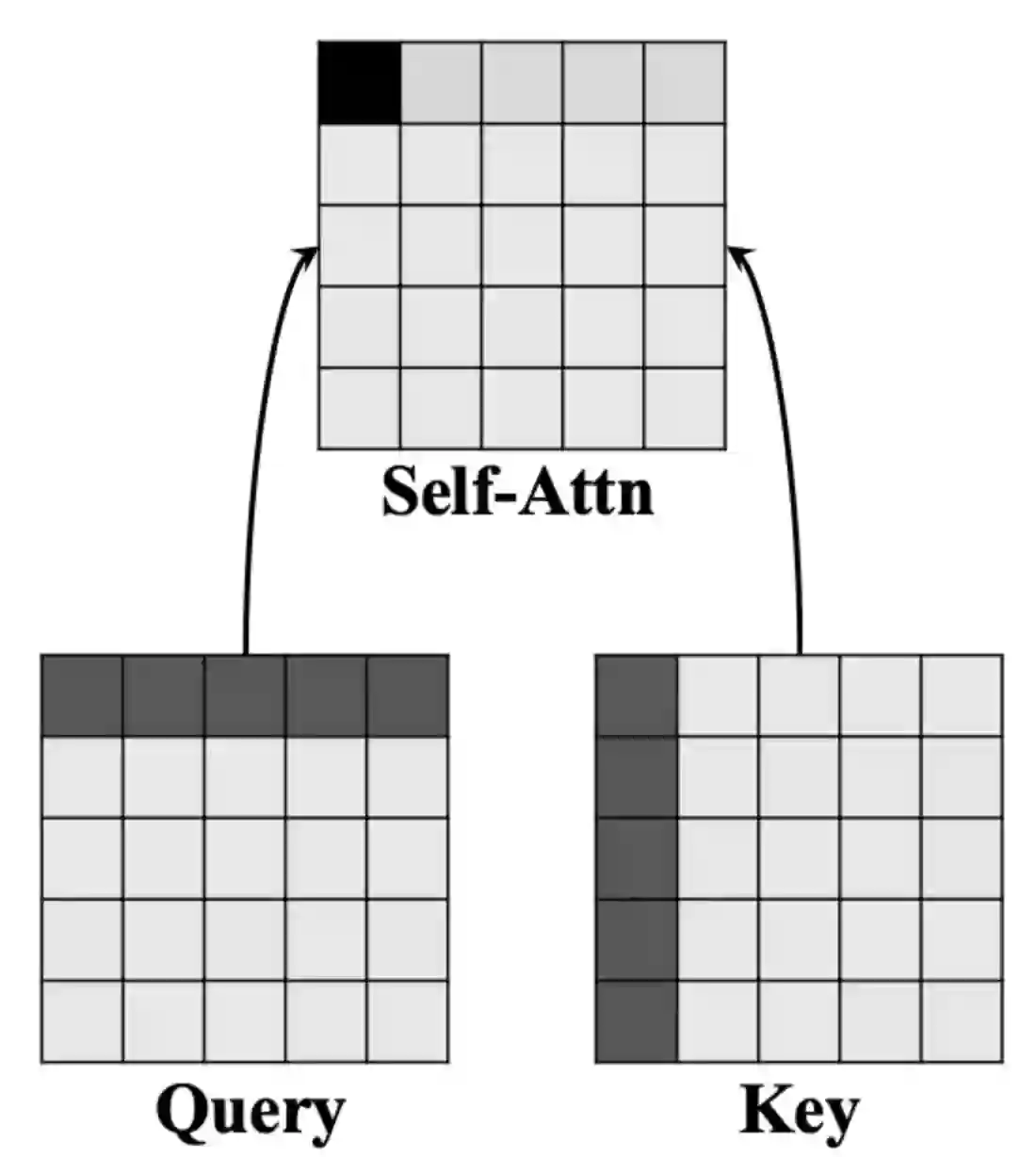

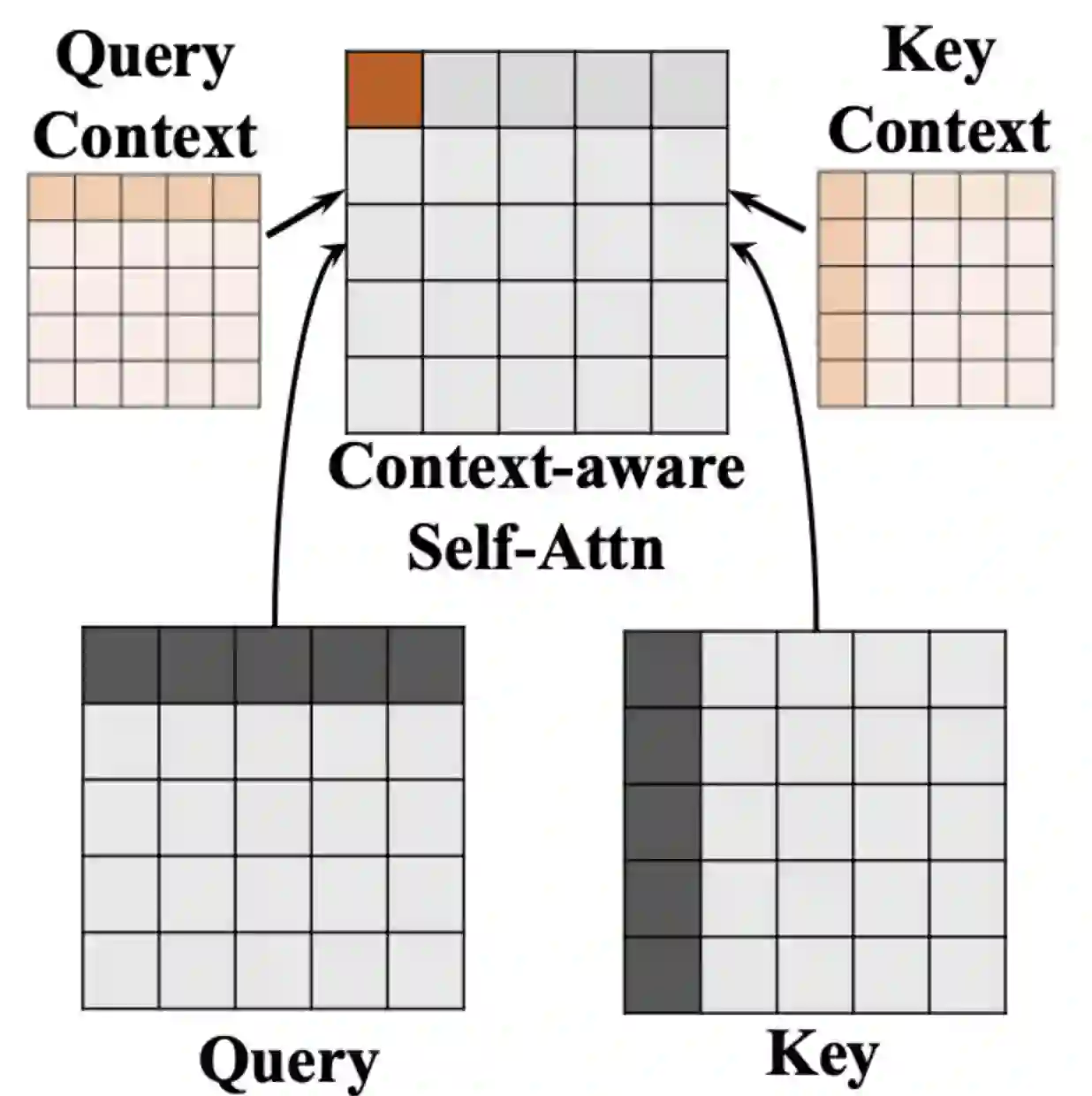

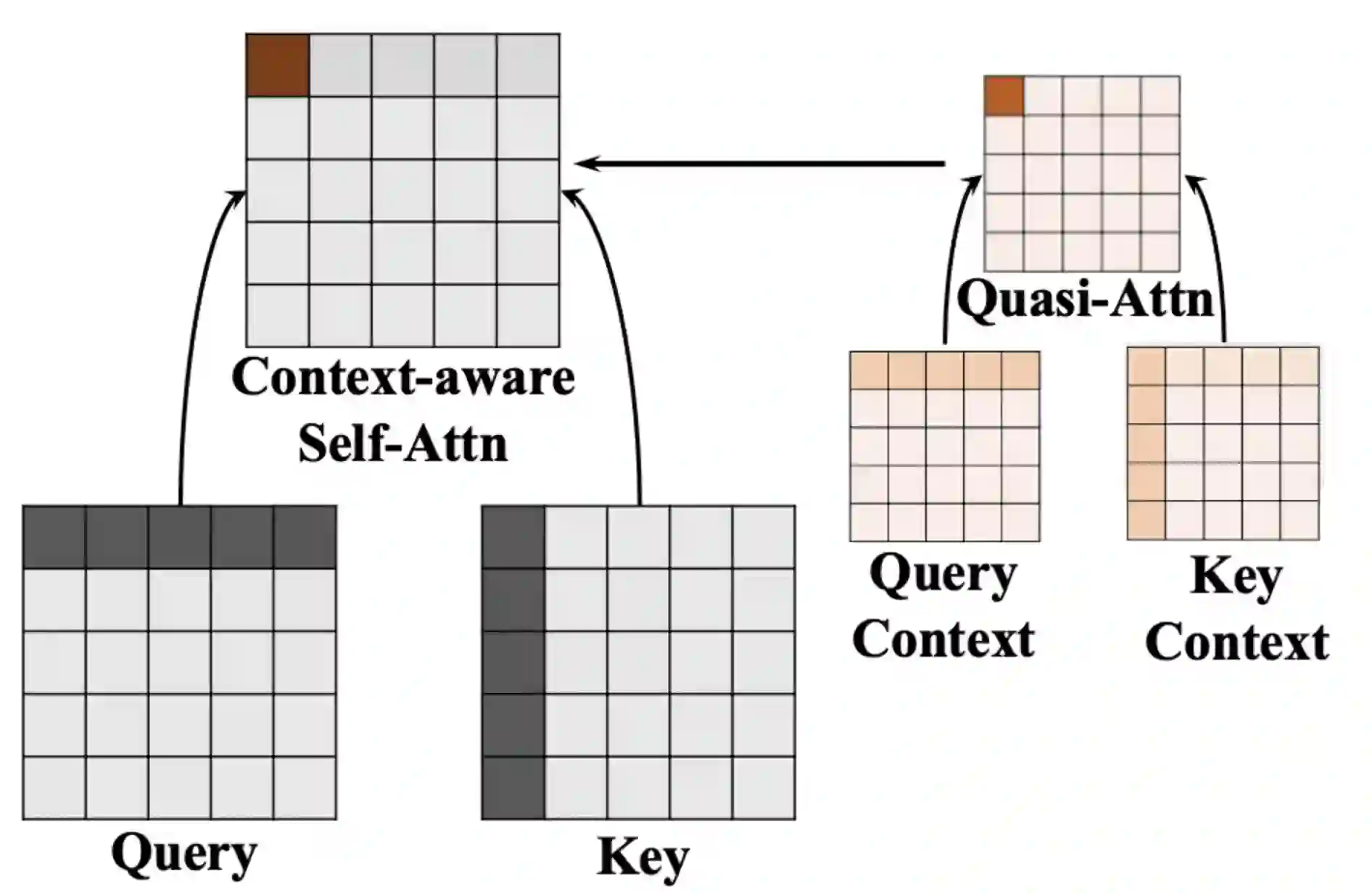

Aspect-based sentiment analysis (ABSA) and Targeted ABSA (TABSA) allow finer-grained inferences about sentiment to be drawn from the same text, depending on context. For example, a given text can have different targets (e.g., neighborhoods) and different aspects (e.g., price or safety), with a different sentiment associated with each target-aspect pair. In this paper, we investigate whether adding context to self-attention models improves performance on (T)ABSA. We propose two variants of Context-Guided BERT (CG-BERT) that learn to distribute attention under different contexts. We first adapt a context-aware Transformer to produce a CG-BERT that uses context-guided softmax attention. Next, we propose an improved Quasi-Attention CG-BERT (QACG-BERT) model that learns a compositional attention that supports subtractive attention. We train both models with pretrained BERT on two (T)ABSA datasets: SentiHood and SemEval-2014 (Task 4). Both models achieve new state-of-the-art results, with our QACG-BERT model performing best. Furthermore, we provide analyses of the impact of context in our proposed models. Our work provides more evidence for the utility of adding context dependencies to pretrained self-attention-based language models for context-based natural language tasks.
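The abstract contrasts two ways of injecting context into self-attention: context-guided softmax attention and a quasi-attention that can be subtractive. The pure-Python sketch below illustrates only that contrast, under simplifying assumptions of our own rather than the paper's exact formulation: a single query, already-projected query and key vectors, one context vector `ctx` (standing in for a target-aspect pair) shared by the query and keys, scalar blending gates `lam_q` and `lam_k`, and a `tanh` gate on the quasi-attention term. All function and parameter names here are hypothetical. The point is that softmax weights are always positive and sum to 1, whereas adding a signed, context-dependent term lets context push a token's weight below zero.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def blend(h: Vector, ctx: Vector, lam: float) -> Vector:
    # Hypothetical context gate: convex mix of a token vector and the context vector.
    return [(1.0 - lam) * x + lam * y for x, y in zip(h, ctx)]


def softmax(scores: Vector) -> Vector:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def cg_softmax_attention(q: Vector, keys: List[Vector], ctx: Vector,
                         lam_q: float, lam_k: float) -> Vector:
    """Context-guided softmax attention: context shifts the query and keys
    before the scaled dot product, so weights stay positive and sum to 1."""
    scale = math.sqrt(len(q))
    q_hat = blend(q, ctx, lam_q)
    k_hats = [blend(k, ctx, lam_k) for k in keys]
    return softmax([dot(q_hat, k) / scale for k in k_hats])


def quasi_attention(q: Vector, keys: List[Vector], ctx: Vector,
                    lam_q: float, lam_k: float, gate_logit: float) -> Vector:
    """Plain softmax attention plus a signed, context-dependent quasi-attention
    term; a negative gate lets context subtract attention from a token."""
    scale = math.sqrt(len(q))
    base = softmax([dot(q, k) / scale for k in keys])
    q_hat = blend(q, ctx, lam_q)
    k_hats = [blend(k, ctx, lam_k) for k in keys]
    quasi = [sigmoid(dot(q_hat, k) / scale) for k in k_hats]  # each in (0, 1)
    lam_a = math.tanh(gate_logit)  # assumed gate in (-1, 1); negative => subtractive
    return [b + lam_a * z for b, z in zip(base, quasi)]


if __name__ == "__main__":
    q = [1.0, 0.0]
    keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
    ctx = [0.0, 1.0]  # hypothetical embedding of a target-aspect pair
    print("CG softmax:", cg_softmax_attention(q, keys, ctx, 0.5, 0.5))
    print("Quasi-attention:", quasi_attention(q, keys, ctx, 0.5, 0.5, -2.0))
```

With `gate_logit = 0` the quasi-attention sketch reduces to ordinary softmax attention over the unmodified query and keys; a sufficiently negative gate drives some weights below zero, which a softmax alone can never do.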

翻译:以视觉为基础的情绪分析(ABSA)和有针对性的ABA(TABA)的情绪分析(ABSA)和有针对性的ABA(TABSA)允许根据背景从同一文本中得出对情绪的精细推断,例如,一个特定文本可以有不同的目标(如相邻地区)和不同方面(如价格或安全),与每个目标目标成对有不同的情绪。在本文件中,我们调查在自我注意模型中添加上下文是否改善了(T)ABSA的绩效。我们提出了两个背景指导语言BERT(CG-BERT)的变式,这些变式可以学习在不同背景下传播注意力。我们首先调整了一种环境自觉变换器,以产生一个CG-BERT,使用受环境引导的软性保持。接下来,我们建议改进“易读性CG-BERT”模型,以学会有助于减少关注的构成性关注。我们用预先训练的BERT的两种模式都用两种(T)ABSA数据集:SentiHood和SemEval-2014(TA),我们首先进行自我分析的BER-BE-BER-reserview-resultial-view-A-view-view-view-view-view-view-view-view-A-view-Ial-Ial-Id-IFF-IFF-IA-IA-IA-IFF-IA-S-I-I-IFF-S-S-IFF-res_提供我们的工作背景分析。