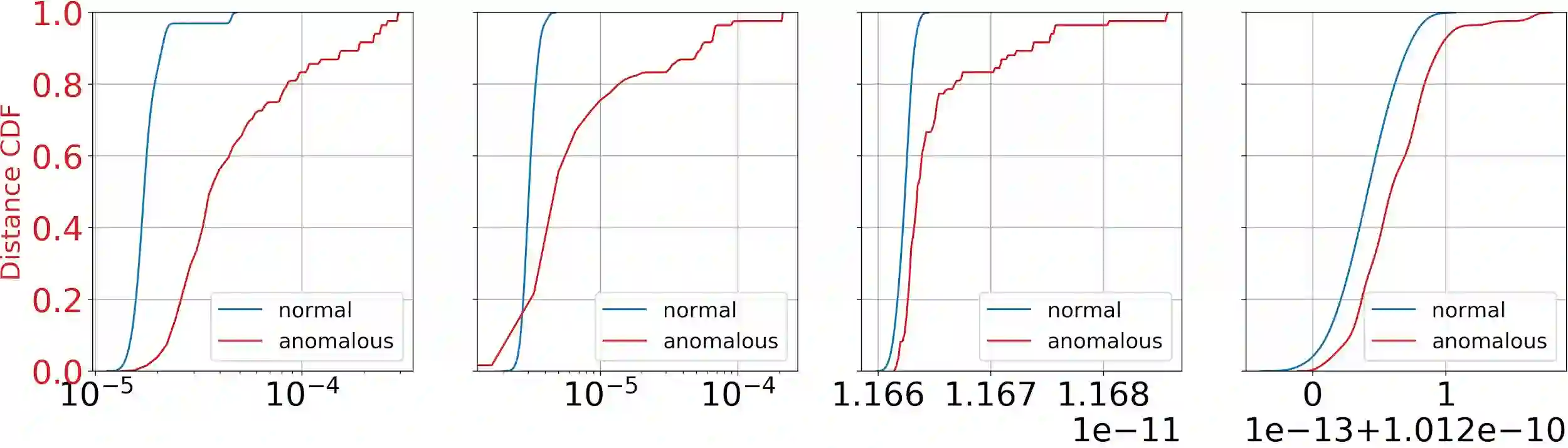

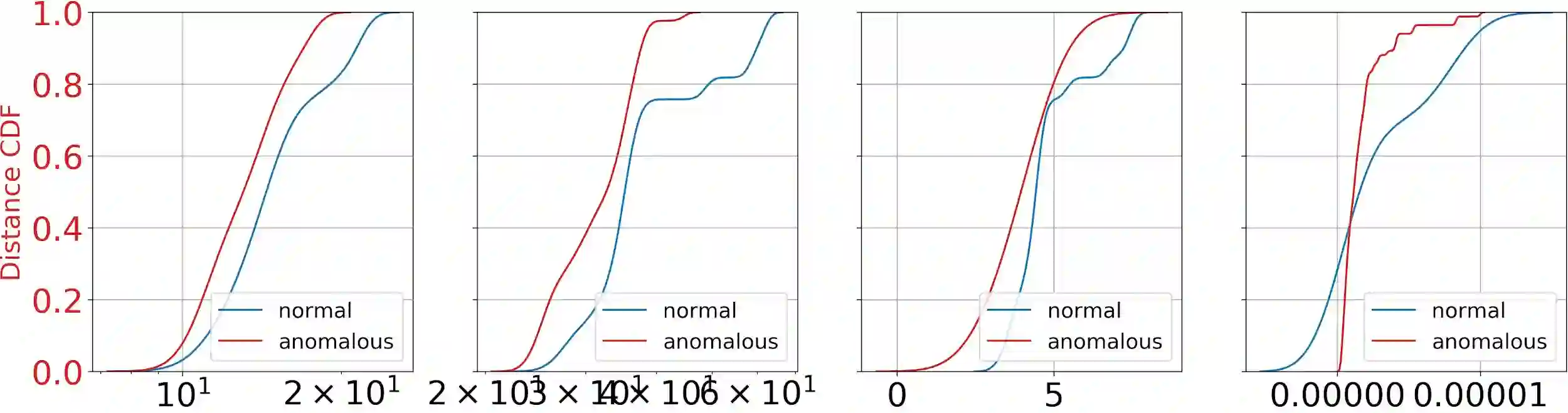

Anomalies are ubiquitous across scientific fields and can reflect an unexpected event caused by incomplete knowledge of the data distribution or by an unknown process that suddenly comes into play and distorts observations. Because such events are rare, deep learning models for the Anomaly Detection (AD) task are usually trained only on "normal" data, i.e., non-anomalous samples, leaving the neural network to infer the distribution underlying the input data. In this context, we propose a novel framework, named Multi-layer One-Class ClassificAtion (MOCCA), to train and test deep learning models on the AD task. Specifically, we apply it to autoencoders. A key novelty of our work is the explicit optimization of intermediate representations for the AD task. Indeed, unlike commonly used approaches that treat a neural network as a single computational block, i.e., use only the output of the last layer, MOCCA explicitly leverages the multi-layer structure of deep architectures. Each layer's feature space is optimized for AD during training, while in the test phase the deep representations extracted from the trained layers are combined to detect anomalies. With MOCCA, we split the training process into two steps. First, the autoencoder is trained on the reconstruction task only. Then, we retain only the encoder and train it to minimize, at each considered layer, the $L_2$ distance between the output representation and a reference point, namely the centroid of the anomaly-free training data. Finally, at inference time, we combine the deep features extracted at the various trained layers of the encoder to detect anomalies. To assess the performance of models trained with MOCCA, we conduct extensive experiments on publicly available datasets. We show that our proposed method achieves performance comparable or superior to that of state-of-the-art approaches in the literature.
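
The second training step and the inference-time scoring described above can be sketched with plain Python lists. This is a minimal sketch under stated assumptions, not the authors' implementation: it assumes the per-layer feature vectors have already been extracted from the encoder (the encoder and its gradient-descent loop are omitted), it uses the squared $L_2$ distance, it assumes the centroids are computed once on normal data and then held fixed, and it combines layers with a plain (optionally weighted) sum, which is a hypothetical rule since the abstract does not specify how the layers are merged. All function names are hypothetical.

```python
from typing import List, Optional, Sequence

Vector = List[float]


def centroid(vectors: Sequence[Vector]) -> Vector:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def sq_l2(a: Vector, b: Vector) -> float:
    """Squared Euclidean (L2) distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def layer_centroids(train_feats: Sequence[Sequence[Vector]]) -> List[Vector]:
    """train_feats[k][l] is the layer-l feature vector of the k-th normal
    training sample; returns one reference point (centroid) per layer."""
    n_layers = len(train_feats[0])
    return [centroid([s[l] for s in train_feats]) for l in range(n_layers)]


def layer_distances(sample_feats: Sequence[Vector],
                    centroids: Sequence[Vector]) -> List[float]:
    """Squared L2 distance of one sample's layer-l feature to centroid l."""
    return [sq_l2(f, c) for f, c in zip(sample_feats, centroids)]


def step2_loss(batch_feats: Sequence[Sequence[Vector]],
               centroids: Sequence[Vector]) -> float:
    """Hypothetical step-2 objective: batch average of the summed per-layer
    distances. A real encoder would minimize this by gradient descent with
    the centroids held fixed (an assumption of this sketch)."""
    total = sum(sum(layer_distances(s, centroids)) for s in batch_feats)
    return total / len(batch_feats)


def anomaly_score(sample_feats: Sequence[Vector],
                  centroids: Sequence[Vector],
                  weights: Optional[Sequence[float]] = None) -> float:
    """Combine per-layer distances into one score (higher = more anomalous).
    The weighted sum is a hypothetical combination rule."""
    dists = layer_distances(sample_feats, centroids)
    if weights is None:
        weights = [1.0] * len(dists)
    return sum(w * d for w, d in zip(weights, dists))
```

With centroids fitted on normal data, a sample whose features land near every layer's centroid scores close to zero, while one that drifts away at any layer accumulates a larger score. Summing over layers lets a deviation that is visible only in an intermediate representation still raise the final score, which is the motivation for going beyond the last layer.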

翻译:在所有科学领域,异常现象都是无处不在的, 并且可以表达出一个出乎意料的事件, 原因是对数据分布的不完全了解, 或者一个突然出现并扭曲观察的未知过程。 由于这些事件的罕见性, 培养关于异常检测(AD)任务的深层次学习模型, 科学家只依靠“ 正常” 数据, 即非异常样本。 因此, 我们让神经网络推导输入数据下的分布。 在这样的背景下, 我们提议了一个新颖的框架, 名为多层次的“ 深层分类” (MOCCA), 来培训和测试关于 AD 任务的高级学习模型。 具体地说, 我们把它应用到自动解析器。 我们工作中的一个重要新颖性是, 直接优化对 ADO( AD) 的中间表现。 事实上, 不同于通常使用的方法, 将神经网络视为单一的计算块块, 也就是说, 仅使用最后层的输出, MOCA 明确利用深层结构结构的多层结构结构结构。 我们所训练过的每个层的定位空间在培训过程中被优化为 AD,, 同时, 在测试阶段, 演示阶段, 演示阶段, 演示到 演示阶段, 演示的层次的层次的状态,, 演示到 演示到 演示到 演示阶段, 演示到 演示到 的层次的层次的状态的状态从 演示到, 演示到,, 的状态从 演示到 演示到, 。