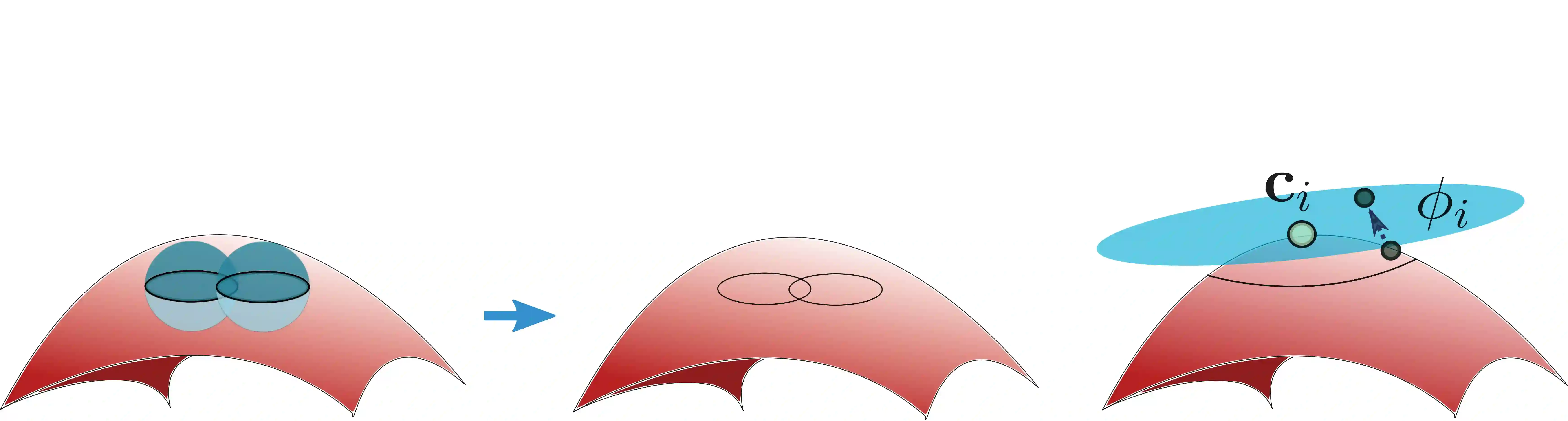

Most existing statistical theories of deep neural networks have sample complexities cursed by the data dimension and therefore cannot adequately explain the empirical success of deep learning on high-dimensional data. To bridge this gap, we propose to exploit the low-dimensional geometric structures of real-world data sets. We establish theoretical guarantees for convolutional residual networks (ConvResNets) in terms of function approximation and statistical estimation for binary classification. Specifically, given data lying on a $d$-dimensional manifold isometrically embedded in $\mathbb{R}^D$, we prove that if the network architecture is properly chosen, ConvResNets can (1) approximate Besov functions on manifolds with arbitrary accuracy, and (2) learn a classifier by minimizing the empirical logistic risk, which gives an excess risk on the order of $n^{-\frac{s}{2s+2(s\vee d)}}$, where $n$ is the sample size and $s$ is a smoothness parameter. This implies that the sample complexity depends on the intrinsic dimension $d$ rather than the data dimension $D$. Our results demonstrate that ConvResNets are adaptive to the low-dimensional structures of data sets.
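
To make the stated rate concrete, the following minimal sketch evaluates the exponent $\frac{s}{2s+2(s\vee d)}$, where $s\vee d = \max(s, d)$, and the rough number of samples needed to reach a target excess risk. The function names `excess_risk_exponent` and `samples_for_target` are hypothetical and chosen for illustration only. The sketch ignores the constants and any logarithmic factors hidden by "on the order of", so the sample counts are indicative at best.

```python
def excess_risk_exponent(s: float, d: int) -> float:
    """Exponent a in the rate n^(-a), with a = s / (2s + 2*max(s, d)).

    s is the smoothness parameter and d the intrinsic (manifold) dimension.
    The ambient dimension D does not appear in the exponent.
    """
    if s <= 0 or d <= 0:
        raise ValueError("s and d must be positive")
    return s / (2 * s + 2 * max(s, d))


def samples_for_target(eps: float, s: float, d: int) -> float:
    """Rough n solving n^(-a) = eps (assumption: constants and logs ignored)."""
    if not 0 < eps < 1:
        raise ValueError("eps must lie in (0, 1)")
    return eps ** (-1.0 / excess_risk_exponent(s, d))


if __name__ == "__main__":
    # Same smoothness, increasing intrinsic dimension: the exponent shrinks.
    for d in (2, 8, 32):
        print(d, excess_risk_exponent(s=2.0, d=d))
```

Under this formula the exponent equals $1/4$ whenever $s \ge d$ and decreases as $d$ grows beyond $s$. The embedding dimension $D$ plays no role in either case.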
